Article - Physically Based Rendering

Source: Internet | Editor: 程序博客网 | Date: 2024/04/20 07:26

Introduction

The pursuit of realism is pushing rendering technology towards a detailed simulation of how light works and interacts with objects. Physically based rendering is a catch-all term for any technique that tries to achieve photorealism via physical simulation of light.

Currently the best model to simulate light is captured by an equation known as the rendering equation. The rendering equation describes how a "unit" of light leaving a specific point of a scene is obtained, given all the incoming light that interacts with that point. We will see the details and introduce the correct terminology in a moment. It's important to notice that we won't try to solve the full rendering equation. Instead we will use the following simplified version, which leaves out the light emitted by the point itself:

$$L_o(p, \omega_o) = \int_{\Omega} f_r(p, \omega_i, \omega_o)\, L_i(p, \omega_i)\, (n \cdot \omega_i)\, d\omega_i$$

To understand this equation we first need to understand how light works, and then we need to agree on some common terms. To give you a rough idea of what the formula means: in simple terms, it describes the colour of a pixel given all the incoming "coloured light" and a function that tells us how to mix it.

Physics terms

If we want to properly understand the rendering equation we need to capture the meaning of some physical quantities. The most important of these quantities is called radiance (represented with $L$).

Radiance is tricky to understand because it combines other physical quantities. Before formally defining it, we will therefore introduce a few of those.

Radiant Flux: the radiant flux is the total amount of energy emitted by a light source per unit time, expressed in watts. We will represent the flux with the Greek letter $\Phi$.

Any light source emits energy, and the amount of emitted energy is a function of the wavelength.

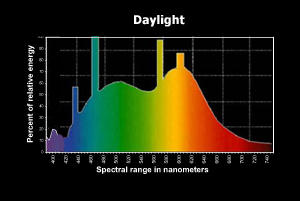

Figure 1: Daylight spectral distribution

In Figure 1 we can see the spectral distribution of daylight. The radiant flux is the area under the curve (to be exact, the area is the luminous flux, as the graph limits the wavelength to the human visible spectrum). For our purposes we will simplify the radiant flux to an RGB colour, even if this means losing a lot of information.
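Here is a minimal sketch of "flux is the area under the spectral curve". The function integrates a spectral power distribution (in W/nm) with the trapezoid rule. `radiant_flux` is an illustrative name. The flat 0.01 W/nm spectrum is a hypothetical stand-in, not the daylight data of Figure 1.

```python
def radiant_flux(spectral_power, lambda_min_nm, lambda_max_nm, steps=1000):
    """Area under a spectral power curve (W/nm) between two wavelengths, trapezoid rule."""
    h = (lambda_max_nm - lambda_min_nm) / steps
    total = 0.5 * (spectral_power(lambda_min_nm) + spectral_power(lambda_max_nm))
    for i in range(1, steps):
        total += spectral_power(lambda_min_nm + i * h)
    return total * h


def flat_spectrum(wavelength_nm):
    """Hypothetical source emitting 0.01 W per nanometre at every wavelength."""
    return 0.01


flux = radiant_flux(flat_spectrum, 380.0, 780.0)
print(round(flux, 6))  # 4.0 (watts): 0.01 W/nm over a 400 nm band
```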

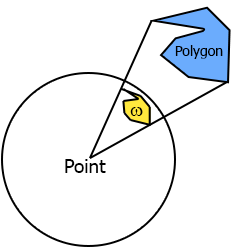

Solid angle: a way to measure how large an object appears to an observer looking from a point. To do this we project the silhouette of the object onto the surface of a unit sphere centred on the point we are observing from. The area of the shape we obtain is the solid angle, measured in steradians (sr); the whole sphere measures $4\pi$ sr. In Figure 2 you can see the solid angle $\omega$.

Figure 2: Solid angle
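For a cone (a circular silhouette), the projected area has a closed form: $2\pi(1 - \cos\alpha)$ for a half-angle $\alpha$. The sketch below computes it two ways (function names are illustrative):

- in closed form;
- by summing small patches $\sin\theta\, d\theta\, d\phi$ of the unit sphere, the same area element the convolution shader integrates over later.

```python
import math


def cap_solid_angle(half_angle):
    """Closed form: solid angle (sr) of a cone of half-angle `half_angle` (radians),
    i.e. the area of the cap it cuts out of the unit sphere."""
    return 2.0 * math.pi * (1.0 - math.cos(half_angle))


def cap_solid_angle_numeric(half_angle, n_theta=400):
    """The same area, summing d_omega = sin(theta) d_theta d_phi with the midpoint rule.
    The integrand does not depend on phi, so the phi integral is just 2*pi."""
    d_theta = half_angle / n_theta
    theta_sum = sum(math.sin((i + 0.5) * d_theta) for i in range(n_theta)) * d_theta
    return 2.0 * math.pi * theta_sum


print(cap_solid_angle(math.pi) / math.pi)        # 4.0: the whole sphere is 4*pi sr
print(cap_solid_angle(math.pi / 2.0) / math.pi)  # about 2.0: the hemisphere is 2*pi sr
```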

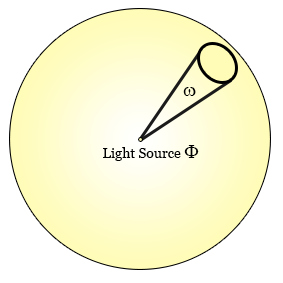

Radiant Intensity: the amount of flux per solid angle. If you have a light source that emits in all directions, how much of that light (flux) is actually going towards a specific direction? Intensity answers that question: it is the amount of flux going in one direction through a defined solid angle. The formula that describes it is

$$I = \frac{d\Phi}{d\omega}$$

Figure 3: Light intensity
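Take the simplest case: a point light that emits equally in all directions. There, $I = d\Phi/d\omega$ reduces to dividing the flux by the $4\pi$ sr of the whole sphere. Here is a small sketch with a hypothetical 100 W source (function names are illustrative):

```python
import math


def isotropic_intensity(flux_watts):
    """Radiant intensity (W/sr) of a point source emitting equally in every direction:
    the flux is spread over the full sphere (4*pi sr), so I = flux / (4*pi)."""
    return flux_watts / (4.0 * math.pi)


def flux_through_cone(intensity, half_angle):
    """Flux leaving through a cone of half-angle `half_angle`: I times its solid angle."""
    return intensity * 2.0 * math.pi * (1.0 - math.cos(half_angle))


I = isotropic_intensity(100.0)            # hypothetical 100 W isotropic source
print(round(I, 4))                        # 7.9577 W/sr
print(flux_through_cone(I, math.pi / 2))  # about 50 W: half the flux goes into each hemisphere
```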

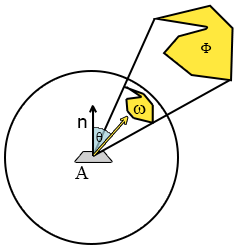

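Radiance: finally, we get to radiance. The radiance formula is:

$$L = \frac{d^2\Phi}{dA\, d\omega\, \cos\theta}$$

where $\Phi$ is the radiant flux, $A$ is the area receiving the light, $\omega$ is the solid angle the light arrives through, and $\theta$ is the angle between the surface normal and the direction of the light.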

Figure 4: Radiance components

We like this formula because it contains all the physical components we are interested in, and we can use it to describe a single "ray" of light. Radiance describes the amount of flux passing through an infinitely small solid angle and hitting an infinitely small area, which is exactly the behaviour of a light ray. So when we talk about radiance, we talk about some amount of light going in some direction to some area.
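The definition can be made concrete with small but finite quantities in place of the infinitesimals. This is a sketch only: `radiance_estimate` is an illustrative name, and the numbers are hypothetical.

```python
import math


def radiance_estimate(delta_flux, delta_area, delta_solid_angle, theta):
    """Finite-size version of L = d2Phi / (dA * d_omega * cos(theta)).
    theta is the angle (radians) between the surface normal and the light direction."""
    return delta_flux / (delta_area * delta_solid_angle * math.cos(theta))


# Hypothetical numbers: 1 mW arriving on 1 cm^2 (1e-4 m^2) from a 0.01 sr cone.
head_on = radiance_estimate(1e-3, 1e-4, 1e-2, 0.0)
slanted = radiance_estimate(1e-3, 1e-4, 1e-2, math.radians(60.0))
print(round(head_on, 6), round(slanted, 6))  # 1000.0 2000.0  (W / (sr * m^2))
```

The same flux arriving at 60° from the normal corresponds to twice the radiance. Seen from that direction the area is foreshortened to half its size, and the $\cos\theta$ term accounts for exactly that.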

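When we shade a point we are interested in all the light arriving at that point. That means the radiance arriving from every direction of the hemisphere centred on the point itself, integrated over the hemisphere with each contribution weighted by the cosine of the angle between its direction and the surface normal:

$$E = \int_{\Omega} L_i(\omega_i)\, (n \cdot \omega_i)\, d\omega_i$$

The name for this quantity is irradiance. Irradiance and radiance are our main physical quantities, and we will work with both of them to achieve physically based rendering.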
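Irradiance can be evaluated numerically on a $(\theta, \phi)$ grid using $d\omega = \sin\theta\, d\theta\, d\phi$. In the sketch below, `irradiance` is an illustrative function that takes the radiance as a function of the two spherical angles. A uniform sky of radiance 1 gives $E \approx \pi$, a handy sanity check for any irradiance code.

```python
import math


def irradiance(radiance_fn, n_theta=128, n_phi=256):
    """E = integral over the hemisphere of L(theta, phi) * cos(theta) d_omega,
    with theta = 0 along the normal and d_omega = sin(theta) d_theta d_phi (midpoint rule)."""
    d_theta = (math.pi / 2.0) / n_theta
    d_phi = (2.0 * math.pi) / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        weight = math.cos(theta) * math.sin(theta) * d_theta * d_phi
        for j in range(n_phi):
            phi = (j + 0.5) * d_phi
            total += radiance_fn(theta, phi) * weight
    return total


# Uniform sky of radiance 1: the hemisphere covers 2*pi sr, but the cosine
# weighting halves that, so E comes out close to pi.
E_uniform = irradiance(lambda theta, phi: 1.0)
# Hypothetical sky whose radiance falls off as cos(theta): exact answer 2*pi/3.
E_cosine = irradiance(lambda theta, phi: math.cos(theta))
```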

The rendering equation

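We can now go back to the rendering equation and read it term by term:

- $L_o(p, \omega_o)$ is the outgoing radiance leaving the point $p$ in the direction $\omega_o$ (towards the viewer), i.e. the colour of our pixel.

- $\Omega$ is the hemisphere centred on the surface normal $n$ at $p$; we integrate over every incoming direction $\omega_i$ in it.

- $L_i(p, \omega_i)$ is the incoming radiance reaching $p$ from the direction $\omega_i$.

- $f_r(p, \omega_i, \omega_o)$ is the BRDF (bidirectional reflectance distribution function), the "mixing" function that tells us how much of the light arriving from $\omega_i$ is reflected towards $\omega_o$.

- $n \cdot \omega_i$ is the cosine of the angle between the normal and the incoming direction. Light arriving at a grazing angle is spread over a larger area, so it contributes less, which is the same $\cos\theta$ that appears in the radiance formula.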
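To make the terms concrete, here is a brute-force sketch that evaluates the simplified rendering equation for a single point whose normal is fixed to $(0, 0, 1)$. `brdf` and `incoming_radiance` are hypothetical callables standing in for $f_r$ and $L_i$; a real renderer would obtain $L_i$ from the scene.

```python
import math


def outgoing_radiance(brdf, incoming_radiance, wo, n_theta=64, n_phi=128):
    """Midpoint-rule estimate of
        L_o(p, wo) = integral over the hemisphere of f_r(p, wi, wo) L_i(p, wi) (n . wi) d_wi
    for one point p with normal n = (0, 0, 1).
    brdf(wi, wo) and incoming_radiance(wi) take unit 3-tuples."""
    d_theta = (math.pi / 2.0) / n_theta
    d_phi = (2.0 * math.pi) / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        d_omega = sin_t * d_theta * d_phi  # solid angle of this patch of the hemisphere
        for j in range(n_phi):
            phi = (j + 0.5) * d_phi
            wi = (sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t)
            # f_r * L_i * (n . wi) * d_omega; with n = (0, 0, 1), n . wi = cos(theta)
            total += brdf(wi, wo) * incoming_radiance(wi) * cos_t * d_omega
    return total


# "White furnace" check: a constant BRDF of 1/pi under a uniform sky of radiance 1
# reflects what it receives, so L_o should be close to 1.
lo = outgoing_radiance(lambda wi, wo: 1.0 / math.pi, lambda wi: 1.0, (0.0, 0.0, 1.0))
```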

Translate to code

So, now that we have all this useful knowledge, how do we apply it to actually write something that renders to the screen? We have two main problems here.

- First of all, how can we represent all these radiance functions in the scene?

- And secondly, how do we solve the integral fast enough to be able to use this in a real-time engine?

The answer to the first question is simple: environment maps. For our purposes we'll use environment maps (cubemaps, although spherical maps would be better suited) to encode the incoming radiance from each direction towards a given point.

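If we imagine that every pixel of the cubemap is a small emitter whose flux is the RGB colour, we can approximate the incoming radiance with a cubemap lookup:

$$L_i(p, \omega_i) \approx \mathrm{envmap}(\omega_i)$$

where $\mathrm{envmap}(\omega_i)$ is the colour stored in the cubemap texel that the direction $\omega_i$ points to. The lookup does not depend on $p$, which amounts to assuming that the environment is very far away compared with the size of the object.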
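Here is a minimal sketch of such a lookup, in Python and with illustrative names:

- `cube_face_to_direction` copies the per-face mapping from the convolution shader in this article: face 0 = +X, 1 = -X, 2 = +Y, 3 = -Y, 4 = +Z, 5 = -Z, with (s, t) = `InterpolatedPosition.xy`.
- `direction_to_cube_face` inverts that mapping by picking the axis with the largest absolute component.
- `lookup` returns the nearest texel.

The texel orientation (column growing with s, row growing with t) is an assumption. Real graphics APIs define their own face layouts.

```python
def cube_face_to_direction(face, s, t):
    """Per-face mapping taken from the convolution shader (unnormalized direction)."""
    return {
        0: (1.0, t, -s),
        1: (-1.0, t, s),
        2: (s, 1.0, -t),
        3: (s, -1.0, t),
        4: (s, t, 1.0),
        5: (-s, t, -1.0),
    }[face]


def direction_to_cube_face(x, y, z):
    """Return (face, s, t) with s, t in [-1, 1] for a non-zero direction (x, y, z)."""
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:  # X is the major axis
        return (0, -z / ax, y / ax) if x > 0 else (1, z / ax, y / ax)
    if ay >= az:  # Y is the major axis
        return (2, x / ay, -z / ay) if y > 0 else (3, x / ay, z / ay)
    return (4, x / az, y / az) if z > 0 else (5, -x / az, y / az)  # Z is the major axis


def lookup(cubemap, direction):
    """Nearest-texel radiance: cubemap[face][row][col] holds an (r, g, b) tuple."""
    face, s, t = direction_to_cube_face(*direction)
    size = len(cubemap[face])
    col = min(int((s + 1.0) * 0.5 * size), size - 1)  # assumed: column grows with s
    row = min(int((t + 1.0) * 0.5 * size), size - 1)  # assumed: row grows with t
    return cubemap[face][row][col]


# Hypothetical 2x2-per-face cubemap where every texel of face f stores (f, f, f).
cubemap = [[[(f, f, f)] * 2 for _ in range(2)] for f in range(6)]
print(lookup(cubemap, (0.0, 0.0, -1.0)))  # (5, 5, 5): the -Z face
```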

The answer to our second problem, how to solve the integral, is trickier, because in the general case we won't be able to solve it quickly enough. But if the BRDF depends only on the incoming direction, or even better on nothing at all (if it's constant), then we can do a useful optimization: compute the integral once, ahead of time, and store the result. So let's see how this works if we plug in Lambert's BRDF, which is a constant factor (all the incoming radiance contributes to the outgoing ray after being scaled by a constant).

Lambert

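Lambert's BRDF sets $f_r(p, \omega_i, \omega_o) = \frac{c}{\pi}$, where $c$ is the surface (diffuse) colour. The factor $\frac{1}{\pi}$ ensures that a white surface does not reflect more energy than it receives. Because the BRDF is constant, it moves outside the integral:

$$L_o(p, \omega_o) = \frac{c}{\pi} \int_{\Omega} L_i(p, \omega_i)\, (n \cdot \omega_i)\, d\omega_i$$

The remaining integral is the irradiance at $p$. It depends only on the normal $n$, not on $\omega_o$. We can therefore precompute it for every normal direction and store it in a cubemap: the irradiance map.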
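The sketch below checks numerically that the constant BRDF can be pulled out of the integral. Integrating $\frac{c}{\pi} L_i$ gives the same result as integrating $L_i$ alone (the part that can be precomputed per normal) and multiplying by $\frac{c}{\pi}$ afterwards. The colour `c`, the `sky` function and `cosine_weighted_integral` are hypothetical, chosen only for illustration.

```python
import math


def cosine_weighted_integral(fn, n_theta=64, n_phi=128):
    """Midpoint-rule integral of fn(w) * (n . w) d_omega over the hemisphere around n = (0, 0, 1)."""
    d_theta = (math.pi / 2.0) / n_theta
    d_phi = (2.0 * math.pi) / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        for j in range(n_phi):
            phi = (j + 0.5) * d_phi
            w = (sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t)
            total += fn(w) * cos_t * sin_t * d_theta * d_phi
    return total


def sky(w):
    """Hypothetical sky, brighter towards the normal."""
    return 1.0 + 2.0 * w[2]


c = 0.8  # hypothetical diffuse colour (a single channel)

full = cosine_weighted_integral(lambda w: (c / math.pi) * sky(w))  # f_r inside the integral
E = cosine_weighted_integral(sky)                                   # precomputable once per normal
factored = (c / math.pi) * E                                        # f_r applied afterwards
```

For this sky the irradiance is $E = \pi + 4\pi/3 = 7\pi/3$, so the shaded value is $c \cdot 7/3$. Only `E` has to live in the irradiance map; the material colour can change without recomputing it.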

So, now we have all the elements, and we can finally write a shader. I'll show that in a moment, but for now, let's see the results.

Figure 5: Left the radiance map, right the irradiance map (Lambert rendering equation)


Now let's look at the shader code. Note that for simplicity I'm not using Monte Carlo integration; I've simply discretized the integral. With infinitely many samples it wouldn't make any difference, but in practice it introduces more banding than Monte Carlo. In my tests it was good enough, given that I dropped the resolution of the cubemap to 32x32 per face, but it's worth bearing in mind if you want to experiment with it.
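To illustrate the discretization-versus-Monte-Carlo remark, here is a sketch of both estimators for the cosine-weighted hemisphere integral (Python, illustrative names).

- The grid version uses left endpoints like the shader's loops. It makes the same systematic error every time it runs (for a uniform sky it slightly underestimates), and that structured error is what tends to show up as banding.
- The Monte Carlo version samples directions uniformly over the hemisphere. Its error is random, shrinks roughly as $1/\sqrt{N}$, and shows up as noise instead.

```python
import math
import random


def grid_estimate(radiance_fn, n_theta, n_phi):
    """Integral of L(w) (n . w) d_omega on a regular (theta, phi) grid, left endpoints."""
    d_theta = (math.pi / 2.0) / n_theta
    d_phi = (2.0 * math.pi) / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = i * d_theta
        for j in range(n_phi):
            phi = j * d_phi
            w = (math.sin(theta) * math.cos(phi), math.sin(theta) * math.sin(phi), math.cos(theta))
            total += radiance_fn(w) * math.cos(theta) * math.sin(theta) * d_theta * d_phi
    return total


def monte_carlo_estimate(radiance_fn, n_samples, rng):
    """Same integral with random directions distributed uniformly over the hemisphere:
    cos(theta) is then uniform in [0, 1) and the pdf is 1 / (2*pi)."""
    total = 0.0
    for _ in range(n_samples):
        cos_t = rng.random()
        sin_t = math.sqrt(1.0 - cos_t * cos_t)
        phi = 2.0 * math.pi * rng.random()
        w = (sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t)
        total += radiance_fn(w) * cos_t * 2.0 * math.pi  # f(w) / pdf(w)
    return total / n_samples


def uniform_sky(w):
    return 1.0  # exact integral: pi


grid = grid_estimate(uniform_sky, 16, 64)
mc = monte_carlo_estimate(uniform_sky, 100_000, random.Random(1))
```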

The first shader we need is the one that generates the blurry envmap. It is often referred to as the convolved envmap, since it is the result of convolving the radiance envmap with the kernel function $n \cdot \omega_i$.

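Since the shader integrates in spherical coordinates, we change the formula to reflect that. With $\theta$ measured from the normal, $n \cdot \omega_i = \cos\theta$ and $d\omega_i = \sin\theta\, d\theta\, d\phi$, so

$$L_o(p, \omega_o) = \frac{c}{\pi} \int_0^{2\pi} \int_0^{\pi/2} L_i(p, \phi, \theta) \cos\theta \sin\theta \, d\theta \, d\phi$$

Taking $N_1$ steps in $\phi$ and $N_2$ steps in $\theta$ ($\Delta\phi = 2\pi / N_1$, $\Delta\theta = \pi / (2 N_2)$), the discretized version is

$$L_o(p, \omega_o) \approx \frac{\pi c}{N_1 N_2} \sum_{\phi} \sum_{\theta} L_i(p, \phi, \theta) \cos\theta \sin\theta$$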

```hlsl
...
float4 PixelShaderFunction(VertexShaderOutput input) : COLOR
{
    // Direction of this output texel for the current cube face (face 4, +Z, is the default).
    float3 normal = normalize( float3(input.InterpolatedPosition.xy, 1) );
    if(cubeFace==2)
        normal = normalize( float3(input.InterpolatedPosition.x, 1, -input.InterpolatedPosition.y) );
    else if(cubeFace==3)
        normal = normalize( float3(input.InterpolatedPosition.x, -1, input.InterpolatedPosition.y) );
    else if(cubeFace==0)
        normal = normalize( float3( 1, input.InterpolatedPosition.y, -input.InterpolatedPosition.x) );
    else if(cubeFace==1)
        normal = normalize( float3( -1, input.InterpolatedPosition.y, input.InterpolatedPosition.x) );
    else if(cubeFace==5)
        normal = normalize( float3(-input.InterpolatedPosition.x, input.InterpolatedPosition.y, -1) );

    // Tangent frame around the normal. Fall back to +Z when the normal is (almost)
    // vertical, otherwise cross(up, normal) is zero and normalize() returns NaNs.
    float3 up = abs(normal.y) < 0.999 ? float3(0,1,0) : float3(0,0,1);
    float3 right = normalize(cross(up,normal));
    up = cross(normal,right);

    float3 sampledColour = float3(0,0,0);
    float index = 0;
    for(float phi = 0; phi < 6.283; phi += 0.025)          // phi in [0, 2*PI)
    {
        for(float theta = 0; theta < 1.57; theta += 0.1)   // theta in [0, PI/2)
        {
            // Spherical to Cartesian in the normal's tangent frame.
            float3 temp = cos(phi) * right + sin(phi) * up;
            float3 sampleVector = cos(theta) * normal + sin(theta) * temp;
            // L_i * cos(theta) (the n . w_i term) * sin(theta) (from d_omega)
            sampledColour += texCUBE( diffuseCubemap_Sampler, sampleVector ).rgb * cos(theta) * sin(theta);
            index ++;
        }
    }
    // PI * average = hemisphere integral / PI; the colour c is applied when shading.
    return float4( PI * sampledColour / index, 1 );
}
...
```
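A direct CPU transcription of these loops is handy for checking the normalization without a GPU. The sketch below is in Python, handles one colour channel, and uses illustrative names. `radiance_fn` stands in for `texCUBE(diffuseCubemap_Sampler, ...)`. The fallback up vector for near-vertical normals is an added safeguard.

The sketch keeps the shader's bounds and step sizes. For a uniform environment of radiance 1 it returns about 0.98 rather than exactly 1. The main reason is that normalizing by the sample count implicitly assumes the 16 theta steps span exactly $\pi/2$, while 16 steps of 0.1 span 1.6.

```python
import math


def normalize(v):
    length = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    return (v[0] / length, v[1] / length, v[2] / length)


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def convolve_texel(radiance_fn, normal):
    """CPU port of the convolution shader's loops for one texel (one colour channel)."""
    normal = normalize(normal)
    # Tangent frame; the fallback axis for near-vertical normals is an added safeguard.
    up = (0.0, 1.0, 0.0) if abs(normal[1]) < 0.999 else (0.0, 0.0, 1.0)
    right = normalize(cross(up, normal))
    up = cross(normal, right)
    sampled, count = 0.0, 0
    phi = 0.0
    while phi < 6.283:  # same bounds and step sizes as the HLSL loops
        theta = 0.0
        while theta < 1.57:
            temp = [math.cos(phi) * r + math.sin(phi) * u for r, u in zip(right, up)]
            sample_dir = [math.cos(theta) * n + math.sin(theta) * t for n, t in zip(normal, temp)]
            sampled += radiance_fn(sample_dir) * math.cos(theta) * math.sin(theta)
            count += 1
            theta += 0.1
        phi += 0.025
    return math.pi * sampled / count


def sky_above_horizon(direction):
    """Hypothetical environment: radiance 1 above the horizon (y > 0), black below."""
    return 1.0 if direction[1] > 0.0 else 0.0


white = convolve_texel(lambda d: 1.0, (0.3, 0.2, 0.9))             # uniform environment
up_facing = convolve_texel(sky_above_horizon, (0.0, 1.0, 0.0))     # sees only the sky
down_facing = convolve_texel(sky_above_horizon, (0.0, -1.0, 0.0))  # sees only black
```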

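The second shader shades the object itself. It reads the irradiance map in the direction `input.SampleDir` (presumably the surface normal, since the irradiance map is indexed by normal) and multiplies the result by the material colour.

```hlsl
...
float4 PixelShaderFunction(VertexShaderOutput input) : COLOR
{
    // Precomputed irradiance (already divided by PI) for this surface direction.
    float3 irradiance = texCUBE(irradianceCubemap_Sampler, input.SampleDir).rgb;
    // Lambert: outgoing radiance = material colour c * (irradiance / PI).
    float3 diffuse = materialColour * irradiance;
    return float4(diffuse, 1);
}
...
```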

This concludes the first part of the article on physically based rendering. I'm planning to write a second part on how to implement a more interesting BRDF like Cook-Torrance's BRDF.
