OpenCV study notes: the machine learning library (MLL) in OpenCV 2 and OpenCV 3

Source: Internet. Editor: 程序博客网. Date: 2024/05/21 08:42

References: http://www.aiuxian.com/article/p-620963.html

http://blog.csdn.net/lifeitengup/article/details/8866078

The machine learning library (MLL) in OpenCV 2

    #include <iostream>
    #include <opencv2/core/core.hpp>
    #include <opencv2/highgui/highgui.hpp>
    #include <opencv2/ml/ml.hpp>

    #define NTRAINING_SAMPLES 100   // Number of training samples per class
    #define FRAC_LINEAR_SEP   0.9f  // Fraction of samples that form the linearly separable part

    using namespace cv;
    using namespace std;

    void help()
    {
        cout << "\n--------------------------------------------------------------------------" << endl
             << "This program shows Support Vector Machines for Non-Linearly Separable Data. " << endl
             << "Usage:" << endl
             << "./non_linear_svms" << endl
             << "--------------------------------------------------------------------------" << endl
             << endl;
    }

    int main()
    {
        help();

        // Data for visual representation
        const int WIDTH = 512, HEIGHT = 512;
        Mat I = Mat::zeros(HEIGHT, WIDTH, CV_8UC3);

        //--------------------- 1. Set up training data randomly ---------------------------------------
        Mat trainData(2*NTRAINING_SAMPLES, 2, CV_32FC1);
        Mat labels   (2*NTRAINING_SAMPLES, 1, CV_32FC1);

        RNG rng(100); // Random value generation class

        // Set up the linearly separable part of the training data
        int nLinearSamples = (int) (FRAC_LINEAR_SEP * NTRAINING_SAMPLES);

        // Generate random points for class 1
        Mat trainClass = trainData.rowRange(0, nLinearSamples);
        // x coordinate: uniform in [1, 0.4 * WIDTH)
        Mat c = trainClass.colRange(0, 1);
        rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(0.4 * WIDTH));
        // y coordinate: uniform in [1, HEIGHT)
        c = trainClass.colRange(1, 2);
        rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

        // Generate random points for class 2
        trainClass = trainData.rowRange(2*NTRAINING_SAMPLES - nLinearSamples, 2*NTRAINING_SAMPLES);
        // x coordinate: uniform in [0.6 * WIDTH, WIDTH)
        c = trainClass.colRange(0, 1);
        rng.fill(c, RNG::UNIFORM, Scalar(0.6 * WIDTH), Scalar(WIDTH));
        // y coordinate: uniform in [1, HEIGHT)
        c = trainClass.colRange(1, 2);
        rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

        //------------------ Set up the non-linearly separable part of the training data ---------------
        // Generate random points for classes 1 and 2 in the overlapping band
        trainClass = trainData.rowRange(nLinearSamples, 2*NTRAINING_SAMPLES - nLinearSamples);
        // x coordinate: uniform in [0.4 * WIDTH, 0.6 * WIDTH)
        c = trainClass.colRange(0, 1);
        rng.fill(c, RNG::UNIFORM, Scalar(0.4 * WIDTH), Scalar(0.6 * WIDTH));
        // y coordinate: uniform in [1, HEIGHT)
        c = trainClass.colRange(1, 2);
        rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

        //------------------------- Set up the labels for the classes ---------------------------------
        labels.rowRange(0, NTRAINING_SAMPLES).setTo(1);                    // Class 1
        labels.rowRange(NTRAINING_SAMPLES, 2*NTRAINING_SAMPLES).setTo(2);  // Class 2

        //------------------------ 2. Set up the support vector machine parameters ---------------------
        CvSVMParams params;
        params.svm_type    = SVM::C_SVC;
        params.C           = 0.1;
        params.kernel_type = SVM::LINEAR;
        params.term_crit   = TermCriteria(CV_TERMCRIT_ITER, (int)1e7, 1e-6);

        //------------------------ 3. Train the SVM ----------------------------------------------------
        cout << "Starting training process" << endl;
        CvSVM svm;
        svm.train(trainData, labels, Mat(), Mat(), params);
        cout << "Finished training process" << endl;

        //------------------------ 4. Show the decision regions ----------------------------------------
        Vec3b green(0, 100, 0), blue(100, 0, 0);
        for (int i = 0; i < I.rows; ++i)
            for (int j = 0; j < I.cols; ++j)
            {
                // Samples are stored as (x, y) = (column, row), matching the training data
                Mat sampleMat = (Mat_<float>(1, 2) << j, i);
                float response = svm.predict(sampleMat);

                if      (response == 1) I.at<Vec3b>(i, j) = green;
                else if (response == 2) I.at<Vec3b>(i, j) = blue;
            }

        //----------------------- 5. Show the training data --------------------------------------------
        int thick = -1;   // negative thickness draws a filled circle
        int lineType = 8;
        float px, py;
        // Class 1
        for (int i = 0; i < NTRAINING_SAMPLES; ++i)
        {
            px = trainData.at<float>(i, 0);
            py = trainData.at<float>(i, 1);
            circle(I, Point((int) px, (int) py), 3, Scalar(0, 255, 0), thick, lineType);
        }
        // Class 2
        for (int i = NTRAINING_SAMPLES; i < 2*NTRAINING_SAMPLES; ++i)
        {
            px = trainData.at<float>(i, 0);
            py = trainData.at<float>(i, 1);
            circle(I, Point((int) px, (int) py), 3, Scalar(255, 0, 0), thick, lineType);
        }

        //------------------------- 6. Show support vectors --------------------------------------------
        thick = 2;
        lineType = 8;
        int x = svm.get_support_vector_count();

        for (int i = 0; i < x; ++i)
        {
            const float* v = svm.get_support_vector(i);
            circle(I, Point((int) v[0], (int) v[1]), 6, Scalar(128, 128, 128), thick, lineType);
        }

        imwrite("result.png", I);                       // save the image
        imshow("SVM for Non-Linear Training Data", I);  // show it to the user
        waitKey(0);
        return 0;
    }
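The OpenCV 2 listing sets `params.C = 0.1` together with `SVM::C_SVC`. As a rough illustration of what that penalty controls, here is a minimal sketch of the standard soft-margin objective for a 2-D linear classifier, written in plain C++ with no OpenCV dependency. The names `Sample` and `softMarginObjective` are hypothetical, and the sketch assumes labels of +1 and -1 (the listing uses 1 and 2); it only evaluates the objective for a given line and does not train anything.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// One labelled 2-D training sample (hypothetical type); label must be +1 or -1.
struct Sample {
    double x, y;
    int label;
};

// Soft-margin objective for the linear classifier f(p) = w0*x + w1*y + b:
//   0.5 * ||w||^2 + C * sum_i max(0, 1 - label_i * f(p_i))
// A small C tolerates margin violations; a large C penalizes them heavily.
double softMarginObjective(const std::vector<Sample>& data,
                           double w0, double w1, double b, double C) {
    assert(C > 0.0);
    double hingeSum = 0.0;
    for (const Sample& s : data) {
        const double score = w0 * s.x + w1 * s.y + b;
        hingeSum += std::max(0.0, 1.0 - s.label * score);  // hinge loss of one sample
    }
    return 0.5 * (w0 * w0 + w1 * w1) + C * hingeSum;
}
```

With a small `C`, a sample on the wrong side of the line adds little to the objective, so a wide margin is preferred even if a few points in the overlapping band are misclassified.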

OpenCV 3: SVM classification of non-linearly separable data

    #include "opencv2/opencv.hpp"
    #include "opencv2/imgproc.hpp"
    #include "opencv2/highgui.hpp"
    #include "opencv2/ml.hpp"

    using namespace cv;
    using namespace cv::ml;

    int main(int, char**)
    {
        int width = 512, height = 512;
        Mat image = Mat::zeros(height, width, CV_8UC3);  // image used for visualization

        // Set up the training data
        int labels[10] = { 1, -1, 1, 1, -1, 1, -1, 1, -1, -1 };
        Mat labelsMat(10, 1, CV_32SC1, labels);

        float trainingData[10][2] = { { 501, 150 }, { 255, 10 }, { 501, 255 }, { 10, 501 }, { 25, 80 },
                                      { 150, 300 }, { 77, 200 }, { 300, 300 }, { 45, 250 }, { 200, 200 } };
        Mat trainingDataMat(10, 2, CV_32FC1, trainingData);

        // Create the classifier and set its parameters
        Ptr<SVM> model = SVM::create();
        model->setType(SVM::C_SVC);
        model->setKernel(SVM::LINEAR);  // kernel function

        // Wrap the training data (one sample per row)
        Ptr<TrainData> tData = TrainData::create(trainingDataMat, ROW_SAMPLE, labelsMat);

        // Train the classifier
        model->train(tData);

        Vec3b green(0, 255, 0), blue(255, 0, 0);

        // Show the decision regions given by the SVM
        for (int i = 0; i < image.rows; ++i)
            for (int j = 0; j < image.cols; ++j)
            {
                Mat sampleMat = (Mat_<float>(1, 2) << j, i);  // test sample (x, y) = (column, row)
                float response = model->predict(sampleMat);   // predicted label: 1 or -1

                if (response == 1)
                    image.at<Vec3b>(i, j) = green;
                else if (response == -1)
                    image.at<Vec3b>(i, j) = blue;
            }

        // Show the training data
        int thickness = -1;  // negative thickness draws a filled circle
        int lineType = 8;
        Scalar c1 = Scalar::all(0);    // samples labelled 1 are drawn as black dots
        Scalar c2 = Scalar::all(255);  // samples labelled -1 are drawn as white dots

        // Point takes (x, y), i.e. column first, then row
        for (int i = 0; i < labelsMat.rows; i++)
        {
            const float* v = trainingDataMat.ptr<float>(i);  // pointer to the start of row i
            Point pt = Point((int)v[0], (int)v[1]);
            if (labels[i] == 1)
                circle(image, pt, 5, c1, thickness, lineType);
            else
                circle(image, pt, 5, c2, thickness, lineType);
        }

        imshow("SVM Simple Example", image);
        waitKey(0);
        return 0;
    }

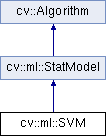

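Originally, the support vector machine (SVM) was a technique for building an optimal binary (2-class) classifier. The technique was later extended to regression and clustering problems. SVM is a special case of kernel-based methods. It uses a kernel function to map feature vectors into a higher-dimensional space and builds, in that space, an optimal linear discriminating function, that is, an optimal hyperplane that fits the training data. With SVM, the mapping itself is not defined explicitly. Instead, only the distance between any two points in that higher-dimensional space needs to be defined.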
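To make the "distance without an explicit mapping" idea concrete, here is a minimal plain-C++ sketch, assuming the common linear and RBF (Gaussian) kernel definitions. The function names and the `gamma` parameter are illustrative choices, not part of the OpenCV API. The last function shows how a squared distance in the implicit feature space can be computed from kernel values alone.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec = std::vector<double>;

// Linear kernel: the ordinary dot product, K(a, b) = a . b
double linearKernel(const Vec& a, const Vec& b) {
    assert(a.size() == b.size());
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) sum += a[i] * b[i];
    return sum;
}

// RBF (Gaussian) kernel: K(a, b) = exp(-gamma * ||a - b||^2)
double rbfKernel(const Vec& a, const Vec& b, double gamma) {
    assert(a.size() == b.size());
    double sqDist = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const double d = a[i] - b[i];
        sqDist += d * d;
    }
    return std::exp(-gamma * sqDist);
}

// Squared distance between the images of a and b in the implicit feature
// space, obtained from kernel values only:
//   ||phi(a) - phi(b)||^2 = K(a, a) - 2 K(a, b) + K(b, b)
double featureSpaceSqDist(const Vec& a, const Vec& b, double gamma) {
    return rbfKernel(a, a, gamma) - 2.0 * rbfKernel(a, b, gamma) + rbfKernel(b, b, gamma);
}
```

The mapping `phi` never appears in the code: every quantity is expressed through `K`, which is why swapping the kernel changes the shape of the decision boundary without changing the rest of the algorithm.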

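The solution is optimal in the sense that the margin between the separating hyperplane and the nearest feature vectors of both classes (in the 2-class case) is maximal. The feature vectors closest to the hyperplane are called support vectors, which means that the positions of the other vectors do not affect the hyperplane (the decision function).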
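As a small illustration of the margin, the following plain-C++ sketch measures the distance of 2-D points to a given line and reports which points lie closest to it. The names `Point2`, `distanceToHyperplane` and `closestPoints` are hypothetical, and the line is supplied by the caller rather than trained. It describes the separable (hard-margin) picture only; with a soft margin, points that violate the margin can also be support vectors.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

struct Point2 { double x, y; };  // hypothetical 2-D feature vector

// Distance from p to the line w0*x + w1*y + b = 0.
double distanceToHyperplane(const Point2& p, double w0, double w1, double b) {
    const double norm = std::sqrt(w0 * w0 + w1 * w1);
    assert(norm > 0.0);
    return std::abs(w0 * p.x + w1 * p.y + b) / norm;
}

// Indices of the points whose distance to the line equals the minimum
// distance (within tol). In the separable case these play the role of
// support vectors, and that minimum distance is the margin.
std::vector<std::size_t> closestPoints(const std::vector<Point2>& pts,
                                       double w0, double w1, double b,
                                       double tol = 1e-9) {
    double best = std::numeric_limits<double>::infinity();
    for (const Point2& p : pts)
        best = std::min(best, distanceToHyperplane(p, w0, w1, b));

    std::vector<std::size_t> idx;
    for (std::size_t i = 0; i < pts.size(); ++i)
        if (distanceToHyperplane(pts[i], w0, w1, b) - best <= tol)
            idx.push_back(i);
    return idx;
}
```

Moving any point that is not returned by `closestPoints` further away from the line leaves the result unchanged, which mirrors the statement that only the support vectors determine the hyperplane.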

The SVM implementation in OpenCV 3 is based on [LibSVM].

- Burges. A tutorial on support vector machines for pattern recognition, Knowledge Discovery and Data Mining 2(2), 1998 (available online at http://citeseer.ist.psu.edu/burges98tutorial.html)
