Collecting log4j logs into Kafka with Flume

Source: Internet | Editor: 程序博客网 | Time: 2024/06/07 14:17

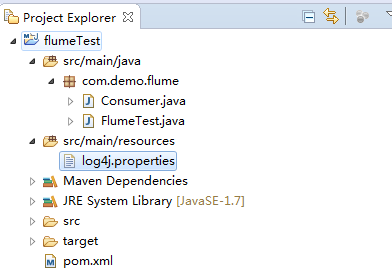

A simple test project:

1. Create a new Java project with the following structure:

The code of the test class FlumeTest is as follows:

    package com.demo.flume;

    import org.apache.log4j.Logger;

    public class FlumeTest {

        private static final Logger LOGGER = Logger.getLogger(FlumeTest.class);

        public static void main(String[] args) throws InterruptedException {
            // Emit one INFO message per second; log4j routes it to the flume appender.
            for (int i = 20; i < 100; i++) {
                LOGGER.info("Info [" + i + "]");
                Thread.sleep(1000);
            }
        }
    }

The code of the Consumer, which listens to Kafka and receives the messages, is as follows:

    package com.demo.flume;

    /**
     * INFO: info
     * User: zhaokai
     * Date: 2017/3/17
     * Version: 1.0
     * History: <p>Record any modifications here</P>
     */
    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class Consumer {

        public static void main(String[] args) {
            System.out.println("begin consumer");
            connectionKafka();
            System.out.println("finish consumer");
        }

        @SuppressWarnings("resource")
        public static void connectionKafka() {
            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.1.163:9092");
            props.put("group.id", "testConsumer");
            props.put("enable.auto.commit", "true");
            props.put("auto.commit.interval.ms", "1000");
            props.put("session.timeout.ms", "30000");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            // Subscribe to the topic that the Flume Kafka sink writes to.
            consumer.subscribe(Arrays.asList("flumeTest"));

            // Poll forever; this loop never exits, so "finish consumer" is never printed.
            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(100);
                try {
                    Thread.sleep(2000);
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
                for (ConsumerRecord<String, String> record : records) {
                    // %n ends the line so that each record is printed on its own line.
                    System.out.printf("===================offset = %d, key = %s, value = %s%n",
                            record.offset(), record.key(), record.value());
                }
            }
        }
    }

The log4j configuration file is as follows:

    log4j.rootLogger=INFO,console

    # for package com.demo.flume, logs are sent to the flume appender.
    log4j.logger.com.demo.flume=info,flume
    log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender
    log4j.appender.flume.Hostname = 192.168.1.163
    log4j.appender.flume.Port = 4141
    log4j.appender.flume.UnsafeMode = true
    log4j.appender.flume.layout=org.apache.log4j.PatternLayout
    log4j.appender.flume.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n

    # appender console
    log4j.appender.console=org.apache.log4j.ConsoleAppender
    log4j.appender.console.target=System.out
    log4j.appender.console.layout=org.apache.log4j.PatternLayout
    log4j.appender.console.layout.ConversionPattern=%d [%-5p] [%t] - [%l] %m%n

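Note: Hostname is the IP of the server where Flume is installed, and Port must match the listening port of the Flume source configured below.

Add the following dependencies to pom.xml: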

    <dependencies>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.10</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flume</groupId>
            <artifactId>flume-ng-core</artifactId>
            <version>1.5.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flume.flume-ng-clients</groupId>
            <artifactId>flume-ng-log4jappender</artifactId>
            <version>1.5.0</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-clients</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka_2.10</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-log4j-appender</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>com.google.guava</groupId>
            <artifactId>guava</artifactId>
            <version>18.0</version>
        </dependency>
    </dependencies>

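2. Configure Flume

Under flume/conf:

Create a file named avro.conf with the following content:

Any sink type can be used, of course; here I use Kafka. See the official Flume documentation for the available sink types.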

    a1.sources=source1
    a1.channels=channel1
    a1.sinks=sink1

    a1.sources.source1.type=avro
    a1.sources.source1.bind=192.168.1.163
    a1.sources.source1.port=4141
    a1.sources.source1.channels = channel1

    a1.channels.channel1.type=memory
    a1.channels.channel1.capacity=10000
    a1.channels.channel1.transactionCapacity=1000
    a1.channels.channel1.keep-alive=30

    a1.sinks.sink1.type = org.apache.flume.sink.kafka.KafkaSink
    a1.sinks.sink1.topic = flumeTest
    a1.sinks.sink1.brokerList = 192.168.1.163:9092
    a1.sinks.sink1.requiredAcks = 0
    a1.sinks.sink1.sink.batchSize = 20
    a1.sinks.sink1.channel = channel1

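With the configuration above, the Flume agent runs on 192.168.1.163 and listens on port 4141. log4j only needs to send its logs to port 4141 on 192.168.1.163 for them to reach Flume. Flume listens on that port, collects the incoming data through the avro source, buffers it in the memory channel, and the Kafka sink then publishes each event as a message to the flumeTest topic on the Kafka cluster.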
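Since the log4j appender and the Flume avro source have to agree on host and port, a small check can catch a mismatch before anything is started. The following is only a sketch: the class name FlumeConfigCheck and its methods are hypothetical helpers, not part of Flume or log4j, and the property texts are inlined here instead of being read from the real files. It relies on the fact that both files use Java properties syntax, so java.util.Properties can parse them.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

// Hypothetical helper, not part of Flume or log4j.
class FlumeConfigCheck {

    /** Parses properties-style text (log4j.properties and the Flume agent file both use it). */
    public static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new IllegalStateException(e);
        }
        return props;
    }

    /**
     * Returns true when the log4j appender points at the same host and port
     * that the given source of the given agent binds to.
     */
    public static boolean endpointsMatch(Properties log4j, String appenderName,
                                         Properties flume, String agentName, String sourceName) {
        String appender = "log4j.appender." + appenderName + ".";
        String source = agentName + ".sources." + sourceName + ".";
        String host = trim(log4j.getProperty(appender + "Hostname"));
        String port = trim(log4j.getProperty(appender + "Port"));
        return host != null && port != null
                && host.equals(trim(flume.getProperty(source + "bind")))
                && port.equals(trim(flume.getProperty(source + "port")));
    }

    private static String trim(String s) {
        return s == null ? null : s.trim();
    }

    public static void main(String[] args) {
        // Values copied from the two configuration files shown in this article.
        Properties log4j = parse(
                "log4j.appender.flume.Hostname = 192.168.1.163\n"
              + "log4j.appender.flume.Port = 4141\n");
        Properties flume = parse(
                "a1.sources.source1.type=avro\n"
              + "a1.sources.source1.bind=192.168.1.163\n"
              + "a1.sources.source1.port=4141\n");
        System.out.println(endpointsMatch(log4j, "flume", flume, "a1", "source1")); // prints "true"
    }
}
```

The comparison is deliberately strict. If the source were bound to a wildcard address such as 0.0.0.0, the host comparison would have to be relaxed and only the port compared.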

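3. Start Flume and test

- Flume start command: bin/flume-ng agent --conf conf --conf-file conf/avro.conf --name a1 -Dflume.root.logger=INFO,console

- Run the main method of the FlumeTest class to emit log messages

- Run the main method of Consumer to print the data received from Kafka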
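The log4j configuration above sets UnsafeMode = true, which, as far as I understand the Flume appender, means a failure to deliver events does not raise an exception in the application. If nothing shows up in the Consumer, it can therefore help to first confirm that the Flume port is reachable from the machine running FlumeTest. The sketch below uses only the JDK; the class name FlumePortCheck is a hypothetical helper, and the default host and port are the ones used in this article.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper, not part of Flume.
class FlumePortCheck {

    /** Returns true if a TCP connection to host:port can be opened within timeoutMillis. */
    public static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timeout, or unknown host: treat all as "not reachable".
            return false;
        }
    }

    public static void main(String[] args) {
        // Defaults match the avro source in avro.conf; override via command-line arguments.
        String host = args.length > 0 ? args[0] : "192.168.1.163";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 4141;
        boolean ok = canConnect(host, port, 2000);
        System.out.println(host + ":" + port + (ok ? " is reachable" : " is NOT reachable"));
    }
}
```

A successful connection only shows that something is listening on the port. It does not prove that the listener is the Flume avro source or that the Kafka sink is working.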
