Reading Note: Progressive Growing of GANs for Improved Quality, Stability, and Variation


TITLE: Progressive Growing of GANs for Improved Quality, Stability, and Variation

AUTHOR: Tero Karras, Timo Aila, Samuli Laine, Jaakko Lehtinen

ASSOCIATION: NVIDIA

FROM: ICLR 2018

CONTRIBUTION

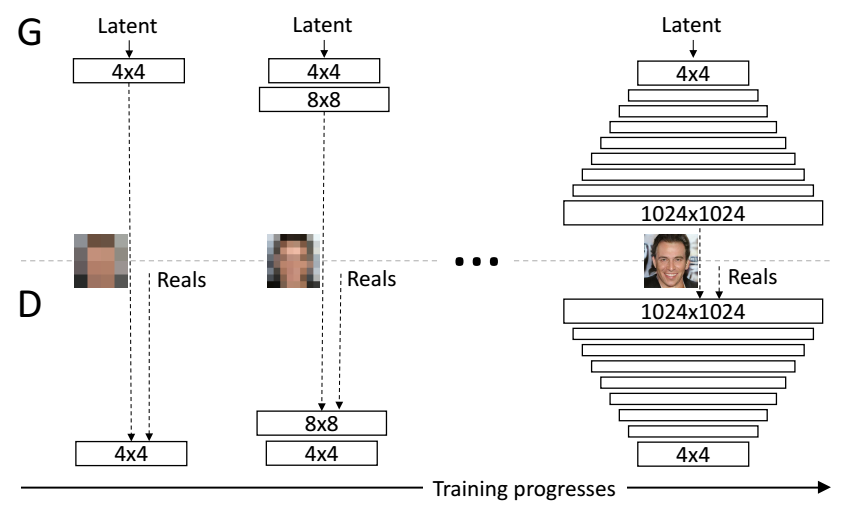

A training methodology is proposed for GANs which starts with low-resolution images and then progressively increases the resolution by adding layers to the networks. This incremental nature allows the training to first discover the large-scale structure of the image distribution and then shift attention to increasingly finer-scale detail, instead of having to learn all scales simultaneously.

METHOD

PROGRESSIVE GROWING OF GANS

The following figure illustrates the training procedure of this work.

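The training starts with both the generator and the discriminator at a low spatial resolution, and layers are added to both networks as training advances.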

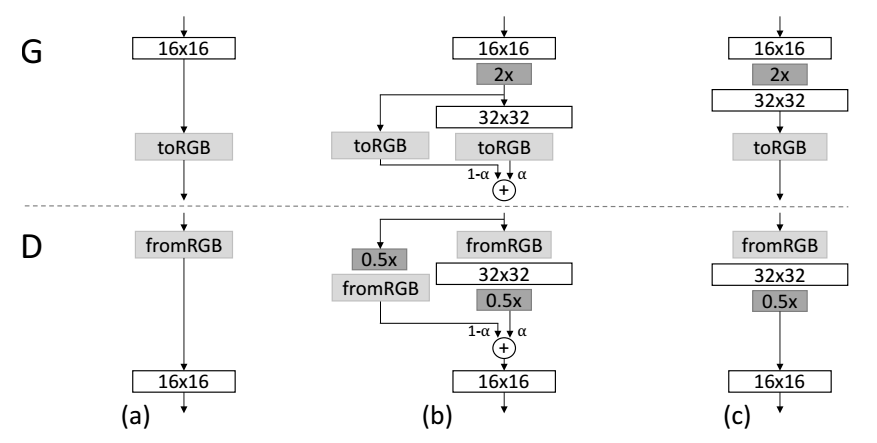

A fade-in is adopted when new layers are added to double the resolution of the generator, so that the new layers are blended in smoothly.
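The fade-in can be sketched as a weighted blend between the upsampled output of the old low-resolution path and the output of the newly added layers. This is a minimal NumPy sketch, not the authors' code: the function names, the NCHW layout, nearest-neighbor upsampling, and the `fade_steps` schedule parameter are all assumptions made for illustration.

```python
import numpy as np

def upsample_nearest_2x(images):
    """Double height and width of an NCHW batch by repeating each pixel."""
    return images.repeat(2, axis=2).repeat(2, axis=3)

def fade_in(alpha, low_res_rgb, high_res_rgb):
    """Blend the old (upsampled) output with the output of the new layers.

    alpha = 0 reproduces the old low-resolution path;
    alpha = 1 uses only the newly added high-resolution layers.
    """
    return (1.0 - alpha) * upsample_nearest_2x(low_res_rgb) + alpha * high_res_rgb

def linear_alpha(step, fade_steps):
    """Hypothetical schedule: alpha rises linearly from 0 to 1 over fade_steps."""
    return min(1.0, step / float(fade_steps))

# Usage: a 4x4 batch blended with an 8x8 batch halfway through the fade.
low = np.ones((2, 3, 4, 4))
high = np.zeros((2, 3, 8, 8))
blended = fade_in(linear_alpha(50, 100), low, high)
```

Because the blend starts at `alpha = 0`, the already trained low-resolution layers are not disturbed abruptly when the new layers are introduced.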

INCREASING VARIATION USING MINIBATCH STANDARD DEVIATION

- Compute the standard deviation for each feature in each spatial location over the minibatch.

- Average these estimates over all features and spatial locations to arrive at a single value.

- Construct one additional (constant) feature map by replicating the value, and concatenate it to all spatial locations and over the minibatch.
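The three steps above can be sketched in a few lines of NumPy. This is an illustrative sketch only: the function name and the NCHW layout are assumptions, and the population standard deviation (NumPy's default) is used.

```python
import numpy as np

def minibatch_stddev_layer(features):
    """Append one constant feature map holding the averaged minibatch std.

    features: array of shape (N, C, H, W).
    returns:  array of shape (N, C + 1, H, W).
    """
    n, _, h, w = features.shape
    # Step 1: std of each feature at each spatial location over the minibatch.
    std = features.std(axis=0)  # shape (C, H, W)
    # Step 2: average over all features and locations to get a single value.
    mean_std = std.mean()
    # Step 3: replicate the value into one extra map per sample and concatenate.
    extra = np.full((n, 1, h, w), mean_std, dtype=features.dtype)
    return np.concatenate([features, extra], axis=1)

# Usage: the extra channel is identical for every sample and every pixel.
batch = np.random.default_rng(0).standard_normal((4, 2, 3, 3))
augmented = minibatch_stddev_layer(batch)  # shape (4, 3, 3, 3)
```

The extra channel gives the discriminator a direct summary of how much the samples in a minibatch differ from each other.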

NORMALIZATION IN GENERATOR AND DISCRIMINATOR

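EQUALIZED LEARNING RATE. A trivial $\mathcal{N}(0, 1)$ initialization is used, and the weights are then explicitly scaled at runtime by the per-layer normalization constant from He's initializer.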
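In the paper, equalized learning rate means that weights are drawn from a plain N(0, 1) distribution and rescaled at every forward pass by a per-layer constant taken from He's initializer. The sketch below shows the idea for a fully connected layer. The class name `EqualizedLinear` is hypothetical, and the constant `sqrt(2 / fan_in)` is an assumption about the exact form of that scaling.

```python
import numpy as np

class EqualizedLinear:
    """Fully connected layer with runtime weight scaling (illustrative only)."""

    def __init__(self, in_features, out_features, rng):
        # Plain N(0, 1) initialization, with no fan-in dependent scaling here.
        self.weight = rng.standard_normal((out_features, in_features))
        self.bias = np.zeros(out_features)
        # Assumed He constant, applied at every forward pass instead of at init.
        self.scale = np.sqrt(2.0 / in_features)

    def __call__(self, x):
        # The stored parameters stay at unit scale; only the effective
        # weights used in the product are rescaled.
        return x @ (self.weight * self.scale).T + self.bias

# Usage
layer = EqualizedLinear(8, 4, np.random.default_rng(0))
y = layer(np.ones((3, 8)))  # shape (3, 4)
```

Keeping the stored parameters at the same scale in every layer means an adaptive optimizer's per-parameter step sizes act comparably across layers.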

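PIXELWISE FEATURE VECTOR NORMALIZATION IN GENERATOR. To prevent the magnitudes in the generator and discriminator from spiraling out of control as a result of competition, the feature vector in each pixel is normalized to unit length in the generator after each convolutional layer, using a variant of “local response normalization”, configured as

$$b_{x,y} = \frac{a_{x,y}}{\sqrt{\frac{1}{N}\sum_{j=0}^{N-1}\left(a_{x,y}^{j}\right)^{2} + \epsilon}}$$

where $\epsilon = 10^{-8}$, $N$ is the number of feature maps, and $a_{x,y}$ and $b_{x,y}$ are the original and normalized feature vectors in pixel $(x, y)$.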
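Pixelwise normalization divides the feature vector at each pixel by the root of its mean squared value across feature maps, with a small epsilon of 1e-8 for numerical safety. A minimal NumPy sketch follows; the function name and the NCHW layout are assumptions for illustration.

```python
import numpy as np

def pixel_norm(features, eps=1e-8):
    """Normalize the feature vector at each pixel of an NCHW batch."""
    # Mean of squared activations over the channel axis, kept for broadcasting.
    mean_sq = np.mean(features ** 2, axis=1, keepdims=True)
    return features / np.sqrt(mean_sq + eps)

# Usage: after normalization the mean squared activation per pixel is about 1.
acts = np.random.default_rng(0).standard_normal((2, 16, 4, 4)) * 50.0
normed = pixel_norm(acts)
```

The operation has no trainable parameters, so it constrains activation magnitudes without adding anything for the optimizer to tune.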
