Asynchronous Methods for Deep Reinforcement Learning 阅读笔记

来源:互联网 发布:java面向对象 编程题 编辑:程序博客网 时间:2024/05/29 03:44

Asynchronous Methods for Deep Reinforcement Learning 阅读笔记

标签(空格分隔): 增强学习算法 论文笔记

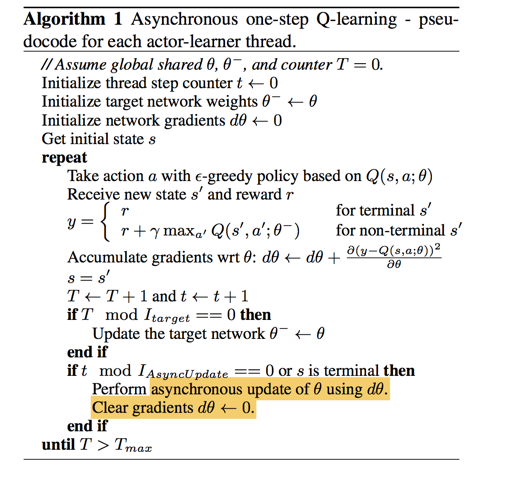

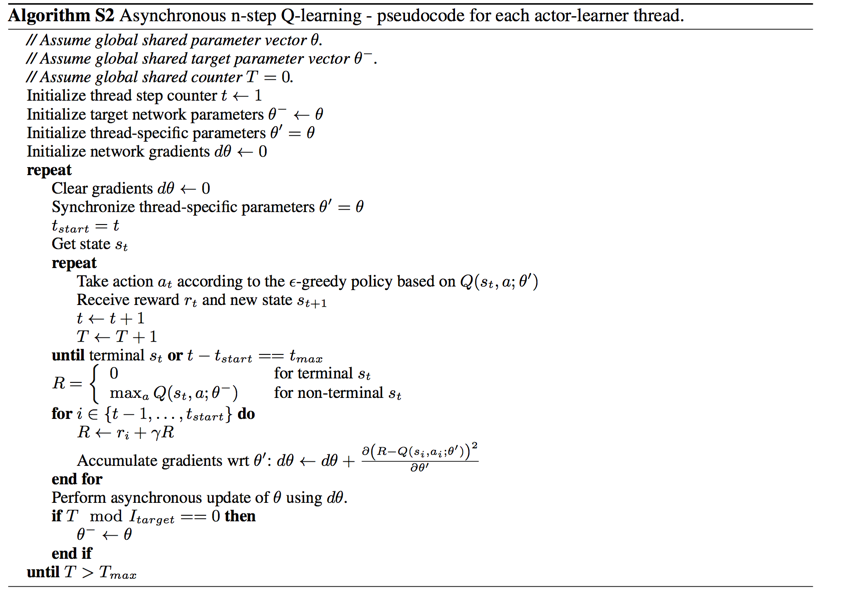

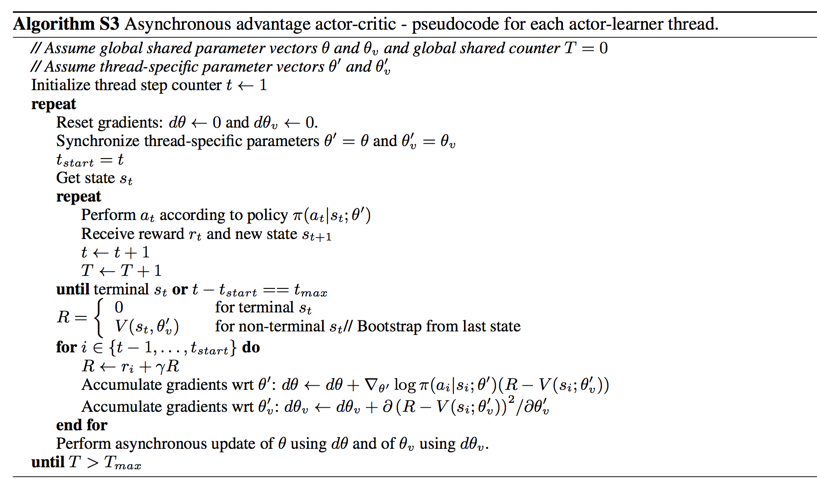

本文的贡献在于提出了异步学习的算法,并应用在A2C Q-learning等算法中该论文作者提出了异步训练(Asynchronous Methods)的方法应用到强化学习的各个算法中(Sarsa,one-step Q-learning n-step Q-learning和 advantage actor-critic)然后 作者通过实验说明将异步训练方式应用在 A2C中的效果最好,于是就有了A3C(Asynchronous advantage actor-critic).

作者在设计 Asynchronous Methods初衷是为了解决:在线学习获得的训练数据不稳定,而且数据与数据之间的相关性比较大

通常的做法是采用replay memory的机制,这种方法能够保证稳定性以及减少数据之间的相关性,但是replay memeory的机制同时也将算法限制在off-policy的范畴之内了。

关于on-policy 和off-policy:

on-policy: 训练数据都是最新的策略而非老的策略采集而来的;

off-ploicy: 训练数据是由历史的(包括最新的)策略采集而来

除此之外,增强学习需要的数据比较大,需要大量的experience,如果实用relplay memory则将会加大训练成本

因此,作者为了解决训练数据相关性比较大 replay memory 占用大量的资源,提出了Asynchronous Methods方法。

Asynchronous Methods的核心思想是用多个action-learner(相当于多个agnet)来玩一个游戏,由于游戏的初始状态是随机的,这样就能保证数据之间相关性较少且可以on-policy学习。

相对于replay memory, Asynchronous Methods优点在于:

(1)可以将算法应用在on-policy

(2)减少大量的显存,可以在多核CPU上进行训练,大大少训练成本

论文原话:

- We present asynchronous variants of four standard reinforcement learning algorithms and show that parallel actor-learners have a stabilizing effect on training allowing all four methods to successfully train neural network

- Aggregating over memory in this way reduces non-stationarity and decorre- lates updates, but at the same time limits the methods to off-policy reinforcement learning algorithms

- it uses more memory and computation per real interaction; and it requires off-policy learning algorithms that can update from data generated by an older policy.

- Instead of experience replay, we asynchronously execute multiple agents in parallel, on multiple instances of the environment.

- Keeping the learners on a single machine removes the communication costs of sending gradients and parameters and enables us to use.

然后 作者将Asynchronous Methods分别应用在Q-learning 和 n-step Q-learning 以及A2C上。

- Asynchronous Methods for Deep Reinforcement Learning 阅读笔记

- Deep Reinforcement Learning for Dialogue Generation阅读笔记

- Paper Reading 4:Massively Parallel Methods for Deep Reinforcement Learning

- FeUdal Networks for Hierarchical Reinforcement Learning 阅读笔记

- Reinforcement Learning for Relation Classification from Noisy Data阅读笔记

- [阅读笔记]Programming Models for Deep Learning

- Deep Reinforcement Learning for Dialogue Generation

- Deep Reinforcement Learning for Dialogue Generation 翻译

- Policy Gradient Methods for Reinforcement Learning with Function Approximation

- EFFICIENT METHODS AND HARDWARE FOR DEEP LEARNING

- Deep Reinforcement Learning 基础知识

- Deep Reinforcement Learning

- Deep Reinforcement learning

- cuDNN: efficient Primitives for Deep Learning 论文阅读笔记

- cuDNN: efficient Primitives for Deep Learning 论文阅读笔记

- 《Deep Label Distrubution Learning for Appearent Age Estimation》阅读笔记

- Deep Residual Learning for Image Recognition 阅读笔记

- cuDNN: efficient Primitives for Deep Learning 论文阅读笔记

- nginx,rewrite,proxy_pass,post数据,表单

- Spider Man CodeForces

- C++构造函数的三个作用

- L1-034. 点赞

- Android应用内多进程的使用及注意事项

- Asynchronous Methods for Deep Reinforcement Learning 阅读笔记

- $.ajax 中的contentType

- 使用ajax和json实现迭代数据的效果

- 使用eclipse插件创建一个web project

- Matlab报错问题

- Java 多线程

- 深度详解Retrofit2使用(二)实践

- 代码的哲学(c/c++):从常量到变量

- shell脚本中echo显示内容带颜色