多种分类器训练检测

来源:互联网 发布:股票盘后数据 编辑:程序博客网 时间:2024/06/06 02:24

点击打开链接

OCR (Optical Character Recognition,光学字符识别),我们这个练习就是对OCR英文字母进行识别。得到一张OCR图片后,提取出字符相关的ROI图像,并且大小归一化,整个图像的像素值序列可以直接作为特征。但直接将整个图像作为特征数据维度太高,计算量太大,所以也可以进行一些降维处理,减少输入的数据量。

处理过程一般这样:先对原图像进行裁剪,得到字符的ROI图像,二值化。然后将图像分块,统计每个小块中非0像素的个数,这样就形成了一个较小的矩阵,这矩阵就是新的特征了。opencv为我们提供了一些这样的数据,放在

\opencv\sources\samples\data\letter-recognition.data

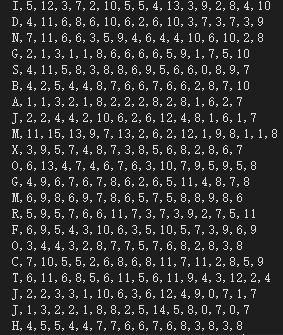

这个文件里,打开看看:

每一行代表一个样本。第一列大写的字母,就是标注,随后的16列就是该字母的特征向量。这个文件中总共有20000行样本,共分类26类(26个字母)。

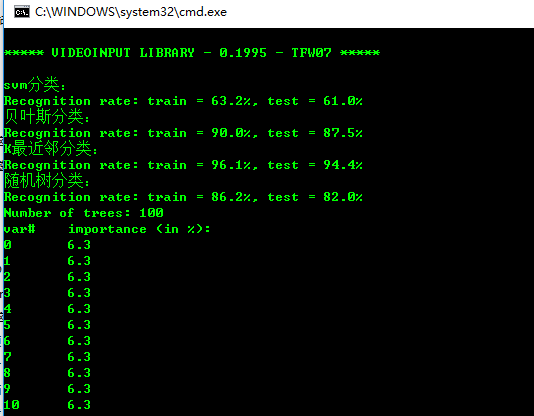

我们将这些数据读取出来后,分成两部分,第一部分16000个样本作为训练样本,训练出分类器后,对这16000个训练数据和余下的4000个数据分别进行测试,得到训练精度和测试精度。其中adaboost比较特殊一点,训练和测试样本各为10000.

完整代码为:

按 Ctrl+C 复制代码

#include "opencv2\opencv.hpp"

#include <iostream>

using namespace std;

using namespace cv;

using namespace cv::ml;

// 读取文件数据

bool read_num_class_data(const string& filename, int var_count, Mat* _data, Mat* _responses)

{

const int M = 1024;

char buf[M + 2];

Mat el_ptr(1, var_count, CV_32F);

int i;

vector<int> responses;

_data->release();

_responses->release();

FILE *f;

fopen_s(&f, filename.c_str(), "rt");

if (!f)

{

cout << "Could not read the database " << filename << endl;

return false;

}

for (;;)

{

char* ptr;

if (!fgets(buf, M, f) || !strchr(buf, ','))

break;

responses.push_back((int)buf[0]);

ptr = buf + 2;

for (i = 0; i < var_count; i++)

{

int n = 0;

sscanf_s(ptr, "%f%n", &el_ptr.at<float>(i), &n);

ptr += n + 1;

}

if (i < var_count)

break;

_data->push_back(el_ptr);

}

fclose(f);

Mat(responses).copyTo(*_responses);

return true;

}

//准备训练数据

Ptr<TrainData> prepare_train_data(const Mat& data, const Mat& responses, int ntrain_samples)

{

Mat sample_idx = Mat::zeros(1, data.rows, CV_8U);

Mat train_samples = sample_idx.colRange(0, ntrain_samples);

train_samples.setTo(Scalar::all(1));

int nvars = data.cols;

Mat var_type(nvars + 1, 1, CV_8U);

var_type.setTo(Scalar::all(VAR_ORDERED));

var_type.at<uchar>(nvars) = VAR_CATEGORICAL;

return TrainData::create(data, ROW_SAMPLE, responses,

noArray(), sample_idx, noArray(), var_type);

}

//设置迭代条件

inline TermCriteria TC(int iters, double eps)

{

return TermCriteria(TermCriteria::MAX_ITER + (eps > 0 ? TermCriteria::EPS : 0), iters, eps);

}

//分类预测

void test_and_save_classifier(const Ptr<StatModel>& model, const Mat& data, const Mat& responses,

int ntrain_samples, int rdelta)

{

int i, nsamples_all = data.rows;

double train_hr = 0, test_hr = 0;

// compute prediction error on train and test data

for (i = 0; i < nsamples_all; i++)

{

Mat sample = data.row(i);

float r = model->predict(sample);

r = std::abs(r + rdelta - responses.at<int>(i)) <= FLT_EPSILON ? 1.f : 0.f;

if (i < ntrain_samples)

train_hr += r;

else

test_hr += r;

}

test_hr /= nsamples_all - ntrain_samples;

train_hr = ntrain_samples > 0 ? train_hr / ntrain_samples : 1.;

printf("Recognition rate: train = %.1f%%, test = %.1f%%\n",

train_hr*100., test_hr*100.);

}

//随机树分类

bool build_rtrees_classifier(const string& data_filename)

{

Mat data;

Mat responses;

read_num_class_data(data_filename, 16, &data, &responses);

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.8);

Ptr<RTrees> model;

Ptr<TrainData> tdata = prepare_train_data(data, responses, ntrain_samples);

model = RTrees::create();

model->setMaxDepth(10);

model->setMinSampleCount(10);

model->setRegressionAccuracy(0);

model->setUseSurrogates(false);

model->setMaxCategories(15);

model->setPriors(Mat());

model->setCalculateVarImportance(true);

model->setActiveVarCount(4);

model->setTermCriteria(TC(100, 0.01f));

model->train(tdata);

test_and_save_classifier(model, data, responses, ntrain_samples, 0);

cout << "Number of trees: " << model->getRoots().size() << endl;

// Print variable importance

Mat var_importance = model->getVarImportance();

if (!var_importance.empty())

{

double rt_imp_sum = sum(var_importance)[0];

printf("var#\timportance (in %%):\n");

int i, n = (int)var_importance.total();

for (i = 0; i < n; i++)

printf("%-2d\t%-4.1f\n", i, 100.f*var_importance.at<float>(i) / rt_imp_sum);

}

return true;

}

//adaboost分类

bool build_boost_classifier(const string& data_filename)

{

const int class_count = 26;

Mat data;

Mat responses;

Mat weak_responses;

read_num_class_data(data_filename, 16, &data, &responses);

int i, j, k;

Ptr<Boost> model;

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.5);

int var_count = data.cols;

Mat new_data(ntrain_samples*class_count, var_count + 1, CV_32F);

Mat new_responses(ntrain_samples*class_count, 1, CV_32S);

for (i = 0; i < ntrain_samples; i++)

{

const float* data_row = data.ptr<float>(i);

for (j = 0; j < class_count; j++)

{

float* new_data_row = (float*)new_data.ptr<float>(i*class_count + j);

memcpy(new_data_row, data_row, var_count * sizeof(data_row[0]));

new_data_row[var_count] = (float)j;

new_responses.at<int>(i*class_count + j) = responses.at<int>(i) == j + 'A';

}

}

Mat var_type(1, var_count + 2, CV_8U);

var_type.setTo(Scalar::all(VAR_ORDERED));

var_type.at<uchar>(var_count) = var_type.at<uchar>(var_count + 1) = VAR_CATEGORICAL;

Ptr<TrainData> tdata = TrainData::create(new_data, ROW_SAMPLE, new_responses,

noArray(), noArray(), noArray(), var_type);

vector<double> priors(2);

priors[0] = 1;

priors[1] = 26;

model = Boost::create();

model->setBoostType(Boost::GENTLE);

model->setWeakCount(100);

model->setWeightTrimRate(0.95);

model->setMaxDepth(5);

model->setUseSurrogates(false);

model->setPriors(Mat(priors));

model->train(tdata);

Mat temp_sample(1, var_count + 1, CV_32F);

float* tptr = temp_sample.ptr<float>();

// compute prediction error on train and test data

double train_hr = 0, test_hr = 0;

for (i = 0; i < nsamples_all; i++)

{

int best_class = 0;

double max_sum = -DBL_MAX;

const float* ptr = data.ptr<float>(i);

for (k = 0; k < var_count; k++)

tptr[k] = ptr[k];

for (j = 0; j < class_count; j++)

{

tptr[var_count] = (float)j;

float s = model->predict(temp_sample, noArray(), StatModel::RAW_OUTPUT);

if (max_sum < s)

{

max_sum = s;

best_class = j + 'A';

}

}

double r = std::abs(best_class - responses.at<int>(i)) < FLT_EPSILON ? 1 : 0;

if (i < ntrain_samples)

train_hr += r;

else

test_hr += r;

}

test_hr /= nsamples_all - ntrain_samples;

train_hr = ntrain_samples > 0 ? train_hr / ntrain_samples : 1.;

printf("Recognition rate: train = %.1f%%, test = %.1f%%\n",

train_hr*100., test_hr*100.);

cout << "Number of trees: " << model->getRoots().size() << endl;

return true;

}

//多层感知机分类(ANN)

bool build_mlp_classifier(const string& data_filename)

{

const int class_count = 26;

Mat data;

Mat responses;

read_num_class_data(data_filename, 16, &data, &responses);

Ptr<ANN_MLP> model;

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.8);

Mat train_data = data.rowRange(0, ntrain_samples);

Mat train_responses = Mat::zeros(ntrain_samples, class_count, CV_32F);

// 1. unroll the responses

cout << "Unrolling the responses...\n";

for (int i = 0; i < ntrain_samples; i++)

{

int cls_label = responses.at<int>(i) - 'A';

train_responses.at<float>(i, cls_label) = 1.f;

}

// 2. train classifier

int layer_sz[] = { data.cols, 100, 100, class_count };

int nlayers = (int)(sizeof(layer_sz) / sizeof(layer_sz[0]));

Mat layer_sizes(1, nlayers, CV_32S, layer_sz);

#if 1

int method = ANN_MLP::BACKPROP;

double method_param = 0.001;

int max_iter = 300;

#else

int method = ANN_MLP::RPROP;

double method_param = 0.1;

int max_iter = 1000;

#endif

Ptr<TrainData> tdata = TrainData::create(train_data, ROW_SAMPLE, train_responses);

model = ANN_MLP::create();

model->setLayerSizes(layer_sizes);

model->setActivationFunction(ANN_MLP::SIGMOID_SYM, 0, 0);

model->setTermCriteria(TC(max_iter, 0));

model->setTrainMethod(method, method_param);

model->train(tdata);

return true;

}

//K最近邻分类

bool build_knearest_classifier(const string& data_filename, int K)

{

Mat data;

Mat responses;

read_num_class_data(data_filename, 16, &data, &responses);

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.8);

Ptr<TrainData> tdata = prepare_train_data(data, responses, ntrain_samples);

Ptr<KNearest> model = KNearest::create();

model->setDefaultK(K);

model->setIsClassifier(true);

model->train(tdata);

test_and_save_classifier(model, data, responses, ntrain_samples, 0);

return true;

}

//贝叶斯分类

bool build_nbayes_classifier(const string& data_filename)

{

Mat data;

Mat responses;

read_num_class_data(data_filename, 16, &data, &responses);

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.8);

Ptr<NormalBayesClassifier> model;

Ptr<TrainData> tdata = prepare_train_data(data, responses, ntrain_samples);

model = NormalBayesClassifier::create();

model->train(tdata);

test_and_save_classifier(model, data, responses, ntrain_samples, 0);

return true;

}

//svm分类

bool build_svm_classifier(const string& data_filename)

{

Mat data;

Mat responses;

read_num_class_data(data_filename, 16, &data, &responses);

int nsamples_all = data.rows;

int ntrain_samples = (int)(nsamples_all*0.8);

Ptr<SVM> model;

Ptr<TrainData> tdata = prepare_train_data(data, responses, ntrain_samples);

model = SVM::create();

model->setType(SVM::C_SVC);

model->setKernel(SVM::LINEAR);

model->setC(1);

model->train(tdata);

test_and_save_classifier(model, data, responses, ntrain_samples, 0);

return true;

}

int main()

{

string data_filename = "letter-recognition.data"; //字母数据

cout << "svm分类:" << endl;

build_svm_classifier(data_filename);

cout << "贝叶斯分类:" << endl;

build_nbayes_classifier(data_filename);

cout << "K最近邻分类:" << endl;

build_knearest_classifier(data_filename, 10);

cout << "随机树分类:" << endl;

build_rtrees_classifier(data_filename);

//cout << "adaboost分类:" << endl;

//build_boost_classifier(data_filename);

//cout << "ANN(多层感知机)分类:" << endl;

//build_mlp_classifier(data_filename);

}

按 Ctrl+C 复制代码

由于adaboost分类和 ann分类速度非常慢,因此我在main函数里把这两个分类注释掉了,大家有兴趣和时间可以测试一下。

结果:

从结果显示来看,测试的四种分类算法中,KNN(最近邻)分类精度是最高的。所以说,对ocr进行识别,还是用knn最好。

分类: opencv

阅读全文

0 0

- 多种分类器训练检测

- 基于Adaboost检测的分类器训练

- 目标检测级联分类器训练

- 目标检测(从样本处理到训练检测)训练级联分类器

- 目标检测(从样本处理到训练检测)训练级联分类器

- 自己训练SVM分类器进行HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- 目标检测之训练opencv自带的分类器

- 自己训练SVM分类器进行HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- SVM分类器训练的HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- 自己训练SVM分类器进行HOG行人检测

- opencv人脸检测分类器训练小结

- 使用opnalpr训练目标检测级联分类器

- 自己训练SVM分类器进行HOG行人检测

- 【SMS】SMS协议介绍之SMS承载层(Bear Layer)

- 创业经验,休闲食品代理用销量丰富利润

- 身份证识别

- 完成Ueditor的上传视频功能及ajax+SSM+获取url

- WindowsLoad

- 多种分类器训练检测

- 深入剖析-关于分页语句的性能优化

- C++ 函数

- (二)、JavaScript页面访问记录(History 对象)

- QT开发之TabWidget控件

- 【软工】软件计划

- mybatis关联

- 将公钥加入服务器,一次输入密码,后续不需要再次输入密码

- 请求发送者与接收者解耦——命令模式(六)