hashmap

Source: Internet · Published: python算法精解 · Editor: 程序博客网 · Time: 2024/04/29 18:28

Hash table

From Wikipedia, the free encyclopedia

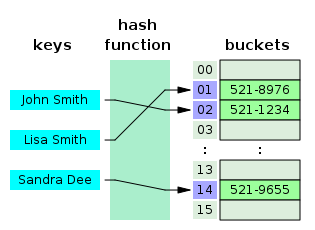

In computer science, a hash table or hash map is a data structure that uses a hash function to efficiently map certain identifiers or keys (e.g., person names) to associated values (e.g., their telephone numbers). The hash function is used to transform the key into the index (the hash) of an array element (the slot or bucket) where the corresponding value is to be sought.

Ideally the hash function should map each possible key to a different slot index, but this ideal is rarely achievable in practice (unless the hash keys are fixed; i.e. new entries are never added to the table after creation). Most hash table designs assume that hash collisions (pairs of different keys with the same hash values) are normal occurrences and must be accommodated in some way.

In a well-dimensioned hash table, the average cost (number of instructions) for each lookup is independent of the number of elements stored in the table. Many hash table designs also allow arbitrary insertions and deletions of key-value pairs, at constant average (indeed, amortized[1]) cost per operation.[2][3]

In many situations, hash tables turn out to be more efficient than search trees or any other table lookup structure. For this reason, they are widely used in many kinds of computer software, particularly for associative arrays, database indexing, caches, and sets.

Hash tables should not be confused with the hash lists and hash trees used in cryptography and data transmission.

Contents

- 1 Hash function

- 1.1 Choosing a good hash function

- 1.2 Perfect hash function

- 2 Collision resolution

- 2.1 Load factor

- 2.2 Separate chaining

- 2.2.1 Separate chaining with list heads

- 2.2.2 Separate chaining with other structures

- 2.3 Open addressing

- 2.4 Coalesced hashing

- 2.5 Robin Hood hashing

- 2.6 Cuckoo hashing

- 3 Dynamic resizing

- 3.1 Resizing by copying all entries

- 3.2 Incremental resizing

- 3.3 Other solutions

- 4 Performance analysis

- 5 Features

- 5.1 Advantages

- 5.2 Drawbacks

- 6 Uses

- 6.1 Associative arrays

- 6.2 Database indexing

- 6.3 Caches

- 6.4 Sets

- 6.5 Object representation

- 6.6 Unique data representation

- 7 Implementations

- 7.1 In programming languages

- 7.2 Independent packages

- 8 See also

- 8.1 Related data structures

- 9 References

- 10 Further reading

- 11 External links

Hash function

At the heart of the hash table algorithm is a simple array of items; this is often simply called the hash table. Hash table algorithms calculate an index from the data item's key and use this index to place the data into the array. The implementation of this calculation is the hash function, f:

index = f(key,arrayLength)

The hash function calculates an index within the array from the data key. arrayLength is the size of the array.
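As a minimal sketch of f, the following Python functions reduce a key's hash value to a slot index, first by modulo reduction and then by bit masking. The function names are illustrative, and Python's built-in hash() stands in for whatever hash function a real table would use (its values for strings vary between runs).

```python
def index_by_modulo(key, array_length):
    """Reduce the key's hash to a slot index in [0, array_length)."""
    return hash(key) % array_length


def index_by_mask(key, array_length):
    """Same idea with bit masking; valid only when array_length is a power of two."""
    if array_length <= 0 or array_length & (array_length - 1) != 0:
        raise ValueError("array_length must be a power of two")
    return hash(key) & (array_length - 1)


if __name__ == "__main__":
    # Small integers hash to themselves in CPython, so both calls give 10 mod 8.
    print(index_by_modulo(10, 8), index_by_mask(10, 8))
```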

Choosing a good hash function

A good hash function and implementation algorithm are essential for good hash table performance, but may be difficult to achieve.[citation needed] Poor hashing usually degrades hash table performance by a constant factor,[citation needed] but hashing is often only a small part of the overall computation.

A basic requirement is that the function should provide a uniform distribution of hash values. A non-uniform distribution increases the number of collisions, and the cost of resolving them. Uniformity is sometimes difficult to ensure by design, but may be evaluated empirically by the chi-squared test.[4]

The distribution needs to be uniform only for the table sizes s that will occur in the application. In particular, if one uses dynamic resizing with exact doubling and halving of s, the hash function needs to be uniform only when s is a power of two. On the other hand, some hashing algorithms provide uniform hashes only when s is a prime number.[5]

For open addressing schemes, the hash function should also avoid clustering, the mapping of two or more keys to consecutive slots. Such clustering may cause the lookup cost to skyrocket, even if the load factor is low and collisions are infrequent. The very popular multiplicative hash advocated by Donald Knuth in The Art of Computer Programming[2] is claimed to have particularly poor clustering behavior.[5]

Cryptographic hash functions are believed to provide good hash functions for any table size s, either by modulo reduction or by bit masking. They may also be appropriate if there is a risk of malicious users trying to sabotage a network service by submitting requests designed to generate a large number of collisions in the server's hash tables.[citation needed] However, these presumed qualities are hardly worth their much larger computational cost and algorithmic complexity, and the risk of sabotage can be avoided by cheaper methods (such as applying a secret salt to the data, or using a universal hash function).

Some authors claim that good hash functions should have the avalanche effect; that is, a single-bit change in the input key should affect, on average, half the bits in the output. Some popular hash functions fail to pass this test.[4]

Perfect hash function

If all of the keys that will be used are known ahead of time, a perfect hash function can be used to create a perfect hash table, in which there will be no collisions. If minimal perfect hashing is used, every location in the hash table can be used as well.

Perfect hashing allows for constant time lookups in the worst case. This is in contrast to most chaining and open addressing methods, where the time for lookup is low on average, but may be very large (proportional to the number of entries) for some sets of keys.

Collision resolution

Collisions are practically unavoidable when hashing a random subset of a large set of possible keys. For example, if 2500 keys are hashed into a million buckets, even with a perfectly uniform random distribution, according to the birthday paradox there is a 95% chance of at least two of the keys being hashed to the same slot.
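The 95% figure can be checked numerically. The sketch below (the function name is illustrative) multiplies out the probability that each successive key lands in a bucket not used so far, assuming a perfectly uniform hash, and subtracts the result from one.

```python
import math


def collision_probability(keys, buckets):
    """Probability that at least two of `keys` uniformly hashed keys share a bucket."""
    if keys > buckets:
        return 1.0  # pigeonhole principle: a collision is certain
    # P(no collision) = product over i of (1 - i/buckets), summed in log space
    log_no_collision = sum(math.log1p(-i / buckets) for i in range(keys))
    return 1.0 - math.exp(log_no_collision)


if __name__ == "__main__":
    # Prints a value slightly above 0.95 for the example in the text.
    print(collision_probability(2500, 1_000_000))
```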

Therefore, most hash table implementations have some collision resolution strategy to handle such events. Some common strategies are described below. All these methods require that the keys (or pointers to them) be stored in the table, together with the associated values.

Load factor

The performance of most collision resolution methods does not depend directly on the number n of stored entries, but depends strongly on the table's load factor, the ratio n/s between n and the size s of its bucket array. With a good hash function, the average lookup cost is nearly constant as the load factor increases from 0 up to 0.7 or so. Beyond that point, the probability of collisions and the cost of handling them increase.

On the other hand, as the load factor approaches zero, the size of the hash table increases with little improvement in the search cost, and memory is wasted.

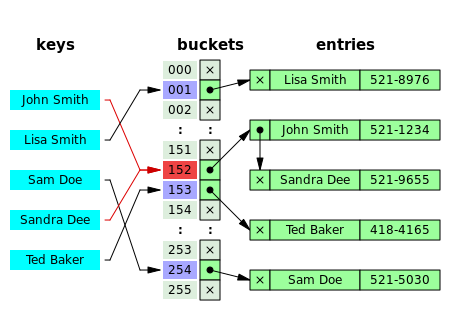

Separate chaining

In the strategy known as separate chaining, direct chaining, or simply chaining, each slot of the bucket array is a pointer to a linked list that contains the key-value pairs that hashed to the same location. Lookup requires scanning the list for an entry with the given key. Insertion requires appending a new entry record to either end of the list in the hashed slot. Deletion requires searching the list and removing the element. (The technique is also called open hashing or closed addressing, which should not be confused with 'open addressing' or 'closed hashing'.)

Chained hash tables with linked lists are popular because they require only basic data structures with simple algorithms, and can use simple hash functions that would be unsuitable for other methods.

The cost of a table operation is that of scanning the entries of the selected bucket for the desired key. If the distribution of keys is sufficiently uniform, the average cost of a lookup depends only on the average number of keys per bucket, that is, on the load factor.

Chained hash tables remain effective even when the number of entries n is much higher than the number of slots. Their performance degrades more gracefully (linearly) with the load factor. For example, a chained hash table with 1000 slots and 10,000 stored keys (load factor 10) will be 5 to 10 times slower than a 10,000-slot table (load factor 1); but still 1000 times faster than a plain sequential list, and possibly even faster than a balanced search tree.

For separate chaining, the worst-case scenario is when all entries were inserted into the same bucket, in which case the hash table is ineffective and the cost is that of searching the bucket data structure. If the latter is a linear list, the lookup procedure may have to scan all its entries; so the worst-case cost is proportional to the number n of entries in the table.

The bucket chains are often implemented as ordered lists, sorted by the key field; this choice approximately halves the average cost of unsuccessful lookups, compared to an unordered list, because the scan can stop as soon as it passes the position where the key would be. However, if it is expected that some keys will be much more likely to come up than others, an unordered list with move-to-front heuristic may be more effective. More sophisticated data structures, such as balanced search trees, are worth considering only if the load factor is large (about 10 or more), or if the hash distribution is likely to be very non-uniform, or if one must guarantee good performance even in the worst case. However, using a larger table and/or a better hash function may be even more effective in those cases.

Chained hash tables also inherit the disadvantages of linked lists. When storing small keys and values, the space overhead of the next pointer in each entry record can be significant. An additional disadvantage is that traversing a linked list has poor cache performance, making the processor cache ineffective.
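The operations described above can be sketched as follows. This is an illustrative implementation, not a reference one: the class and method names are made up, Python lists stand in for the linked lists, and the built-in hash() is assumed as the hash function.

```python
class ChainedHashTable:
    """Separate chaining: each slot holds a list of (key, value) pairs."""

    def __init__(self, slots=8):
        self.buckets = [[] for _ in range(slots)]
        self.count = 0

    def _bucket(self, key):
        return self.buckets[hash(key) % len(self.buckets)]

    def put(self, key, value):
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:                 # key already present: replace its value
                bucket[i] = (key, value)
                return
        bucket.append((key, value))      # otherwise append to the end of the chain
        self.count += 1

    def get(self, key, default=None):
        for k, v in self._bucket(key):   # scan the chain for the key
            if k == key:
                return v
        return default

    def delete(self, key):
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:
                del bucket[i]
                self.count -= 1
                return True
        return False

    def load_factor(self):
        return self.count / len(self.buckets)
```

Because the chains can grow without bound, the table keeps working at load factors above 1; lookups simply scan longer chains.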

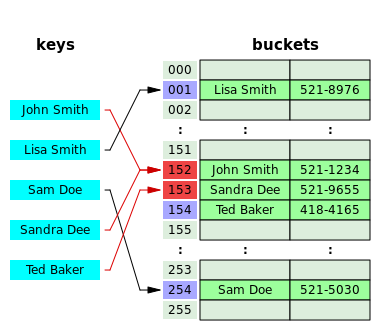

Separate chaining with list heads

Some chaining implementations store the first record of each chain in the slot array itself.[3] The purpose is to increase cache efficiency of hash table access. In order to save memory space, such hash tables are often designed to have about as many slots as stored entries, meaning that many slots will have two or more entries.

Separate chaining with other structures

Instead of a list, one can use any other data structure that supports the required operations. By using a self-balancing tree, for example, the theoretical worst-case time of a hash table can be brought down to O(log n) rather than O(n). However, this approach is worth the trouble and extra memory cost only if long delays must be avoided at all costs (e.g. in a real-time application), or if one expects to have many entries hashed to the same slot (e.g. if one expects extremely non-uniform or even malicious key distributions).

The variant called array hashing uses a dynamic array to store all the entries that hash to the same bucket.[6][7] Each inserted entry gets appended to the end of the dynamic array that is assigned to the hashed slot. This variation makes more effective use of CPU caching, since the bucket entries are stored in sequential memory positions. It also dispenses with the next pointers that are required by linked lists, which saves space when the entries are small, such as pointers or single-word integers.

An elaboration on this approach is the so-called dynamic perfect hashing[8], where a bucket that contains k entries is organized as a perfect hash table with k^2 slots. While it uses more memory (n^2 slots for n entries, in the worst case), this variant has guaranteed constant worst-case lookup time, and low amortized time for insertion.

Open addressing

In another strategy, called open addressing, all entry records are stored in the bucket array itself. When a new entry has to be inserted, the buckets are examined, starting with the hashed-to slot and proceeding in some probe sequence, until an unoccupied slot is found. When searching for an entry, the buckets are scanned in the same sequence, until either the target record is found, or an unused array slot is found, which indicates that there is no such key in the table.[9] The name "open addressing" refers to the fact that the location ("address") of the item is not determined by its hash value. (This method is also called closed hashing; it should not be confused with "open hashing" or "closed addressing", which usually mean separate chaining.)

Well known probe sequences include:

- linear probing in which the interval between probes is fixed (usually 1).

- quadratic probing in which the interval between probes increases by some constant (usually 1) after each probe.

- double hashing in which the interval between probes is computed by another hash function.
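As an illustration of the first of these sequences, the sketch below implements insertion and lookup with linear probing (interval 1). The class name is hypothetical, hash() is assumed as the hash function, and deletion and resizing are deliberately left out, so the table raises an error once every slot is occupied.

```python
class LinearProbingTable:
    """Open addressing with linear probing; entries live in the slot array itself."""

    _EMPTY = object()  # marker for a slot that has never been used

    def __init__(self, slots=8):
        self.slots = [self._EMPTY] * slots
        self.count = 0

    def _probe(self, key):
        # Visit every slot once, starting at the hashed-to slot, with step 1.
        start = hash(key) % len(self.slots)
        for step in range(len(self.slots)):
            yield (start + step) % len(self.slots)

    def put(self, key, value):
        for i in self._probe(key):
            entry = self.slots[i]
            if entry is self._EMPTY:     # first unoccupied slot: store here
                self.slots[i] = (key, value)
                self.count += 1
                return
            if entry[0] == key:          # key already present: replace its value
                self.slots[i] = (key, value)
                return
        raise OverflowError("table is full")

    def get(self, key, default=None):
        for i in self._probe(key):
            entry = self.slots[i]
            if entry is self._EMPTY:     # unused slot: the key is not in the table
                return default
            if entry[0] == key:
                return entry[1]
        return default
```

With 8 slots, the integer keys 0, 8 and 16 all hash to slot 0, so they end up in slots 0, 1 and 2; this run of consecutive occupied slots is the clustering that the text warns about.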

A drawback of all these open addressing schemes is that the number of stored entries cannot exceed the number of slots in the bucket array. In fact, even with good hash functions, their performance seriously degrades when the load factor grows beyond 0.7 or so. For many applications, these restrictions mandate the use of dynamic resizing, with its attendant costs.

Open addressing schemes also put more stringent requirements on the hash function: besides distributing the keys more uniformly over the buckets, the function must also minimize the clustering of hash values that are consecutive in the probe order. Even experienced programmers may find such clustering hard to avoid.

Open addressing only saves memory if the entries are small (less than 4 times the size of a pointer) and the load factor is not too small. If the load factor is close to zero (that is, there are far more buckets than stored entries), open addressing is wasteful even if each entry is just two words.

Open addressing avoids the time overhead of allocating each new entry record, and can be implemented even in the absence of a memory allocator. It also avoids the extra indirection required to access the first entry of each bucket (which is usually the only one). It also has better locality of reference, particularly with linear probing. With small record sizes, these factors can yield better performance than chaining, particularly for lookups.

Hash tables with open addressing are also easier to serialize, because they don't use pointers.

On the other hand, normal open addressing is a poor choice for large elements, since these elements fill entire cache lines (negating the cache advantage), and a large amount of space is wasted on large empty table slots. If the open addressing table only stores references to elements (external storage), it uses space comparable to chaining even for large records but loses its speed advantage.

Generally speaking, open addressing is better used for hash tables with small records that can be stored within the table (internal storage) and fit in a cache line. They are particularly suitable for elements of one word or less. In cases where the tables are expected to have high load factors, the records are large, or the data is variable-sized, chained hash tables often perform as well or better.

Ultimately, used sensibly, any kind of hash table algorithm is usually fast enough, and the percentage of a calculation spent in hash table code is low. Memory usage is rarely considered excessive. Therefore, in most cases the differences between these algorithms are marginal, and other considerations typically come into play.

Coalesced hashing

A hybrid of chaining and open addressing, coalesced hashing links together chains of nodes within the table itself.[9] Like open addressing, it achieves space usage and (somewhat diminished) cache advantages over chaining. Like chaining, it does not exhibit clustering effects; in fact, the table can be efficiently filled to a high density. Unlike chaining, it cannot have more elements than table slots.

Robin Hood hashing

One interesting variation on double-hashing collision resolution is Robin Hood hashing.[10] The idea is that a key already inserted may be displaced by a new key if the new key's probe count is larger than that of the key at the current position. The net effect is a reduction in worst-case search times in the table. This is similar to Knuth's ordered hash tables, except that the criterion for bumping a key does not depend on a direct relationship between the keys. Since both the worst case and the variance of the number of probes are reduced dramatically, an interesting variation is to probe the table starting at the expected successful probe value and then expand from that position in both directions.[11]

Cuckoo hashing

Another alternative open-addressing solution is cuckoo hashing, which ensures constant lookup time in the worst case, and constant amortized time for insertions and deletions.
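A minimal sketch of the idea, under several assumptions not stated in the text: two slot arrays, two hash functions obtained by hashing the key together with the array number, a fixed limit on displacements, and doubling of both arrays when that limit is reached. All names are illustrative.

```python
class CuckooHashTable:
    """Each key can live in exactly one of two slots, so lookups probe at most twice."""

    def __init__(self, slots=8, max_kicks=32):
        self.size = slots
        self.max_kicks = max_kicks
        self.tables = [[None] * slots, [None] * slots]

    def _index(self, which, key):
        # Assumption: two hash functions are derived by salting the key
        # with the number of the array (0 or 1).
        return hash((which, key)) % self.size

    def get(self, key, default=None):
        for which in (0, 1):
            entry = self.tables[which][self._index(which, key)]
            if entry is not None and entry[0] == key:
                return entry[1]
        return default

    def put(self, key, value):
        for which in (0, 1):             # replace the value if the key is present
            i = self._index(which, key)
            entry = self.tables[which][i]
            if entry is not None and entry[0] == key:
                self.tables[which][i] = (key, value)
                return
        entry, which = (key, value), 0
        for _ in range(self.max_kicks):
            i = self._index(which, entry[0])
            # Place the entry and pick up whatever occupied the slot before.
            entry, self.tables[which][i] = self.tables[which][i], entry
            if entry is None:
                return
            which = 1 - which            # the evicted entry tries its other array
        self._grow()                     # too many displacements: enlarge and retry
        self.put(*entry)

    def _grow(self):
        old = [e for table in self.tables for e in table if e is not None]
        self.size *= 2
        self.tables = [[None] * self.size, [None] * self.size]
        for k, v in old:
            self.put(k, v)
```

The constant worst-case lookup comes from get, which never inspects more than two slots; the cost of displacements and occasional growth is paid on insertion.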

Dynamic resizing

In order to keep the load factor in a certain range, e.g. between 1/4 and 3/4, many table implementations may expand the table dynamically when items are inserted, and may shrink it when items are deleted. This is sometimes referred to as a rehash of the table. In Java's HashMap class, for example, the default load factor threshold for table expansion is 0.75.

Resizing by copying all entries

A common approach is to automatically trigger a complete resizing when the load factor exceeds some threshold r_max. Then a new larger table is allocated, all the entries of the old table are removed and inserted into this new table, and the old table is returned to the free storage pool. Symmetrically, when the load factor falls below a second threshold r_min, all entries are moved to a new smaller table.

If the table size increases or decreases by a fixed percentage at each expansion, the total cost of these resizings, amortized over all insert and delete operations, is still a constant, independent of the number of entries n and of the number m of operations performed.

For example, consider a table that was created with the minimum possible size and is doubled each time the load ratio exceeds some threshold. If m elements are inserted into that table, the total number of extra re-insertions that occur in all dynamic resizings of the table is proportional to m: for a table that doubles whenever it is full, it is exactly m−1 when m is a power of two and always less than 2m. In other words, dynamic resizing adds only a constant amortized cost to each insert or delete operation.
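The linear bound can be checked with a small simulation. The sketch below makes a simplifying assumption: the table starts with one slot and doubles exactly when an insertion finds it full. It tracks only the counts, not the entries themselves, and the function name is illustrative.

```python
def count_reinsertions(m):
    """Total number of entries copied by all resizes while inserting m entries."""
    size, stored, copied = 1, 0, 0
    for _ in range(m):
        if stored == size:    # table is full: double it and re-insert everything
            copied += stored
            size *= 2
        stored += 1
    return copied


if __name__ == "__main__":
    for m in (4, 5, 1024, 1025):
        print(m, count_reinsertions(m))
```

Under this assumption the count is m−1 when m is a power of two and jumps to 2m−3 on the next insertion, so it stays below 2m and the amortized cost per insertion is constant.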

Incremental resizing

Some hash table implementations, notably in real-time systems, cannot pay the price of enlarging the hash table all at once, because it may interrupt time-critical operations. If one cannot avoid dynamic resizing, a solution is to perform the resizing gradually:

- During the resize, allocate the new hash table, but keep the old table unchanged.

- In each lookup or delete operation, check both tables.

- Perform insertion operations only in the new table.

- At each insertion also move k elements from the old table to the new table.

- When all elements are removed from the old table, deallocate it.

To ensure that the old table will be completely copied over before the new table itself needs to be enlarged, it is necessary to increase the size of the table by a factor of at least (k + 1)/k during the resizing.
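The steps listed above can be sketched for a chained table as follows. The class name, the doubling factor, the 0.75 threshold and the default k = 2 are all assumptions made for illustration; doubling satisfies the (k + 1)/k requirement for any k of at least 1.

```python
class IncrementalTable:
    """Chained table that resizes gradually, moving k old entries per insertion."""

    def __init__(self, slots=4, k=2, max_load=0.75):
        self.new = [[] for _ in range(slots)]  # table that receives insertions
        self.old = None                        # previous table, set only during a resize
        self.cursor = 0                        # next old bucket to drain
        self.count = 0
        self.k = k
        self.max_load = max_load

    @staticmethod
    def _bucket(table, key):
        return table[hash(key) % len(table)]

    def _tables(self):
        return [t for t in (self.new, self.old) if t is not None]

    def get(self, key, default=None):
        for table in self._tables():           # lookups check both tables
            for k, v in self._bucket(table, key):
                if k == key:
                    return v
        return default

    def delete(self, key):
        for table in self._tables():           # deletions check both tables
            bucket = self._bucket(table, key)
            for i, (k, _) in enumerate(bucket):
                if k == key:
                    del bucket[i]
                    self.count -= 1
                    return True
        return False

    def put(self, key, value):
        self.delete(key)                       # drop a previous entry, wherever it lives
        if self.old is None and (self.count + 1) / len(self.new) > self.max_load:
            # Start a resize: allocate the new table, keep the old one unchanged.
            self.old, self.new = self.new, [[] for _ in range(2 * len(self.new))]
            self.cursor = 0
        self._bucket(self.new, key).append((key, value))  # insert into the new table only
        self.count += 1
        self._move_some()

    def _move_some(self):
        moved = 0
        while self.old is not None and moved < self.k:
            while self.cursor < len(self.old) and not self.old[self.cursor]:
                self.cursor += 1               # skip buckets that are already empty
            if self.cursor == len(self.old):
                self.old = None                # old table is empty: release it
                break
            key, value = self.old[self.cursor].pop()
            self._bucket(self.new, key).append((key, value))
            moved += 1
```

No single operation copies the whole table; the only non-constant step is skipping over empty old buckets, whose total cost over a resize is bounded by the size of the old table.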

Other solutions

Linear hashing[12] is a hash table algorithm that permits incremental hash table expansion. It is implemented using a single hash table, but with two possible look-up functions.

Another way to decrease the cost of table resizing is to choose a hash function in such a way that the hashes of most values do not change when the table is resized. This approach, called consistent hashing, is prevalent in disk-based and distributed hashes, where resizing is prohibitively costly.
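One common way to realize consistent hashing, shown here only as a sketch, is a hash ring: nodes and keys are hashed to points on a circle, and each key belongs to the first node point at or after its own point. The class name, the use of SHA-256 to place points, and the 64 points per node are assumptions of this example.

```python
import bisect
import hashlib


def _point(label):
    """Map a string to a position on the ring (a 64-bit integer)."""
    digest = hashlib.sha256(label.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


class HashRing:
    def __init__(self, nodes=(), replicas=64):
        self.replicas = replicas   # ring points per node, to even out the shares
        self._points = []          # sorted ring positions
        self._owner = {}           # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node):
        for r in range(self.replicas):
            p = _point(f"{node}#{r}")
            self._owner[p] = node
            bisect.insort(self._points, p)

    def lookup(self, key):
        if not self._points:
            raise LookupError("ring has no nodes")
        # First ring point clockwise from the key's position, wrapping around.
        i = bisect.bisect_right(self._points, _point(key)) % len(self._points)
        return self._owner[self._points[i]]
```

When a node is added, the only keys whose owner changes are those that move to the new node; every other key keeps its previous placement, which is the property the text describes.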

Performance analysis

In the simplest model, the hash function is completely unspecified and the table does not resize. For the best possible choice of hash function, a table of size n with open addressing will have no collisions and hold up to n elements, with a single comparison for successful lookup, and a table of size n with chaining and k keys will have the minimum max(0, k-n) collisions and O(1 + k/n) comparisons for lookup. For the worst choice of hash function, every insertion will cause a collision, and hash tables degenerate to linear search, with Ω(k) amortized comparisons per insertion and up to k comparisons for a successful lookup.

Adding rehashing to this model is straightforward. As in a dynamic array, geometric resizing by a factor of b implies that only k/b^i keys are inserted i or more times, so that the total number of insertions is bounded above by bk/(b-1), which is O(k). By using rehashing to maintain k < n, tables using both chaining and open addressing can have unlimited elements and perform successful lookup in a single comparison for the best choice of hash function.
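The bound bk/(b-1) follows from a geometric series. As a sketch, take the term i = 0 to count the first insertion of each of the k keys and the term i to count the keys that are re-inserted at least i times:

```latex
\sum_{i=0}^{\infty} \frac{k}{b^{i}}
  \;=\; k \cdot \frac{1}{1 - 1/b}
  \;=\; \frac{bk}{b-1}
  \;=\; O(k) \qquad (b > 1)
```

For doubling (b = 2) the bound is 2k, that is, on average at most one extra re-insertion per key.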

In more realistic models the hash function is a random variable over a probability distribution of hash functions, and performance is computed on average over the choice of hash function. When this distribution is uniform, the assumption is called "simple uniform hashing" and it can be shown that hashing with chaining requires Θ(1 + k/n) comparisons on average for an unsuccessful lookup, and hashing with open addressing requires Θ(1/(1 - k/n)).[13] Both these bounds are constant if we maintain k/n < c using table resizing, where c is a fixed constant less than 1.

Features

Advantages

The main advantage of hash tables over other table data structures is speed. This advantage is more apparent when the number of entries is large (thousands or more). Hash tables are particularly efficient when the maximum number of entries can be predicted in advance, so that the bucket array can be allocated once with the optimum size and never resized.

If the set of key-value pairs is fixed and known ahead of time (so insertions and deletions are not allowed), one may reduce the average lookup cost by a careful choice of the hash function, bucket table size, and internal data structures. In particular, one may be able to devise a hash function that is collision-free, or even perfect (see Perfect hash function above). In this case the keys need not be stored in the table.

Drawbacks

Hash tables can be more difficult to implement than self-balancing binary search trees. Choosing an effective hash function for a specific application is more an art than a science. In open-addressed hash tables it is fairly easy to create a poor hash function.

Although operations on a hash table take constant time on average, the cost of a good hash function can be significantly higher than the inner loop of the lookup algorithm for a sequential list or search tree. Thus hash tables are not effective when the number of entries is very small. (However, in some cases the high cost of computing the hash function can be mitigated by saving the hash value together with the key.)

For certain string processing applications, such as spell-checking, hash tables may be less efficient than tries, finite automata, or Judy arrays. Also, if each key is represented by a small enough number of bits, then, instead of a hash table, one may use the key directly as the index into an array of values. Note that there are no collisions in this case.

The entries stored in a hash table can be enumerated efficiently (at constant cost per entry), but only in some pseudo-random order. Therefore, there is no efficient way to locate an entry whose key is nearest to a given key. Listing all n entries in some specific order generally requires a separate sorting step, whose cost is proportional to log(n) per entry. In comparison, ordered search trees have lookup and insertion cost proportional to log(n), but allow finding the nearest key at about the same cost, and ordered enumeration of all entries at constant cost per entry.

If the keys are not stored (because the hash function is collision-free), there may be no easy way to enumerate the keys that are present in the table at any given moment.

Although the average cost per operation is constant and fairly small, the cost of a single operation may be quite high. In particular, if the hash table uses dynamic resizing, an insertion or deletion operation may occasionally take time proportional to the number of entries. This may be a serious drawback in real-time or interactive applications.

Hash tables in general exhibit poor locality of reference; that is, the data to be accessed is distributed seemingly at random in memory. Because hash tables cause access patterns that jump around, this can trigger microprocessor cache misses that cause long delays. Compact data structures such as arrays, searched with linear search, may be faster if the table is relatively small and keys are integers or other short strings. According to Moore's Law, cache sizes are growing exponentially and so what is considered "small" may be increasing. The optimal performance point varies from system to system.

Hash tables become quite inefficient when there are many collisions. While extremely uneven hash distributions are extremely unlikely to arise by chance, a malicious adversary with knowledge of the hash function may be able to supply input to a hash table that creates worst-case behavior by causing excessive collisions, resulting in very poor performance (i.e., a denial of service attack). In critical applications, either universal hashing can be used or a data structure with better worst-case guarantees may be preferable.[14]

Uses

Associative arrays

Hash tables are commonly used to implement many types of in-memory tables. They are used to implement associative arrays (arrays whose indices are arbitrary strings or other complicated objects), especially in interpreted programming languages like AWK, Perl, and PHP.

Database indexing

Hash tables may also be used for disk-based persistent data structures and database indices, although balanced trees are more popular in these applications.

Caches

Hash tables can be used to implement caches, auxiliary data tables that are used to speed up the access to data that is primarily stored in slower media. In this application, hash collisions can be handled by discarding one of the two colliding entries, usually the one that is currently stored in the table.
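A minimal sketch of such a cache, with a hypothetical class name and hash() assumed as the hash function: each slot holds at most one entry, and a colliding insertion simply overwrites the entry that is already there.

```python
class DirectMappedCache:
    """Fixed-size cache in which a collision evicts the stored entry."""

    def __init__(self, slots=256):
        self.slots = [None] * slots

    def put(self, key, value):
        # Whatever was stored in this slot before is discarded.
        self.slots[hash(key) % len(self.slots)] = (key, value)

    def get(self, key, default=None):
        entry = self.slots[hash(key) % len(self.slots)]
        if entry is not None and entry[0] == key:
            return entry[1]
        return default  # cache miss: the caller falls back to the slower medium
```

Losing an entry is harmless here because the cache is only a copy of data that can be fetched again from the slower medium.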

Sets

Besides recovering the entry which has a given key, many hash table implementations can also tell whether such an entry exists or not.

Those structures can therefore be used to implement a set data structure, which merely records whether a given key belongs to a specified set of keys. In this case, the structure can be simplified by eliminating all parts which have to do with the entry values. Hashing can be used to implement both static and dynamic sets.

Object representation

Several dynamic languages, such as Python, JavaScript, and Ruby, use hash tables to implement objects. In this representation, the keys are the names of the members and methods of the object, and the values are pointers to the corresponding member or method.

Unique data representation

Hash tables can be used by some programs to avoid creating multiple character strings with the same contents. For that purpose, all strings in use by the program are stored in a single hash table, which is checked whenever a new string has to be created. This technique was introduced in Lisp interpreters under the name hash consing, and can be used with many other kinds of data (expression trees in a symbolic algebra system, records in a database, files in a file system, binary decision diagrams, etc.)
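The idea can be sketched with a small pool built on Python's dict, which is itself a hash table. The class name InternPool is hypothetical; the point is only that equal strings are mapped to one shared object.

```python
class InternPool:
    """Keeps one canonical object for each distinct string value."""

    def __init__(self):
        self._pool = {}

    def intern(self, s):
        # Return the stored string equal to s, storing s itself if there is none.
        return self._pool.setdefault(s, s)

    def __len__(self):
        return len(self._pool)


if __name__ == "__main__":
    pool = InternPool()
    a = pool.intern("".join(["ha", "sh"]))
    b = pool.intern("".join(["h", "ash"]))
    print(a is b)  # both names refer to the single pooled object
```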

Implementations

In programming languages

Many programming languages provide hash table functionality, either as built-in associative arrays or as standard library modules. In C++, for example, the hash_map and unordered_map classes provide hash tables for keys and values of arbitrary type. See the associative array article for a complete list.

In PHP 5, the Zend 2 engine uses one of the hash functions from Daniel J. Bernstein to generate the hash values used in managing the mappings of data pointers stored in a HashTable. In the PHP source code, it is labelled as "DJBX33A" (Daniel J. Bernstein, Times 33 with Addition).
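A "times 33 with addition" hash can be sketched in a few lines. The starting value 5381 is the one commonly cited for Bernstein's function; the 32-bit mask is an assumption of this sketch, since the width of the result in PHP depends on the platform, and the code is not a copy of the PHP source.

```python
def djbx33a(data: bytes) -> int:
    """Times-33-with-addition hash over a byte string, truncated to 32 bits."""
    h = 5381                                # commonly cited initial value
    for byte in data:
        h = (h * 33 + byte) & 0xFFFFFFFF    # multiply by 33, add the next byte
    return h


if __name__ == "__main__":
    print(djbx33a(b"a"))  # 5381 * 33 + 97 = 177670
```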

Independent packages

- Google Sparse Hash: The Google SparseHash project contains several hash-map implementations in use at Google, with different performance characteristics, including an implementation that optimizes for space and one that optimizes for speed. The memory-optimized one is extremely memory-efficient with only 2 bits/entry of overhead.

- SunriseDD: An open source C library for hash table storage of arbitrary data objects with lock-free lookups, built-in reference counting and guaranteed order iteration. The library can participate in external reference counting systems or use its own built-in reference counting. It comes with a variety of hash functions and allows the use of runtime supplied hash functions via callback mechanism. Source code is well documented.

- uthash: This is an easy-to-use hash table for C structures.

- A number of language runtimes and/or standard libraries use hash tables to implement their support for associative arrays.

- Software written to minimize memory usage can conserve memory by keeping all allocated strings in a hash table. If an already existing string is found, a pointer to that string is returned; otherwise, a new string is allocated and added to the hash table. (This is the normal technique used in Lisp for the names of variables and functions; see the documentation for the intern and intern-soft functions if you are using that language.) The data compression achieved in this manner is usually around 40%.[citation needed]

See also

- Rabin-Karp string search algorithm

- Stable hashing

- Consistent hashing

- Extendible hashing

- Lazy deletion

- Pearson hashing

Related data structures

There are several data structures that use hash functions but cannot be considered special cases of hash tables:

- Bloom filter, a structure that implements an enclosing approximation of a set, allowing insertions but not deletions.

- Distributed hash table, a resilient dynamic table spread over several nodes of a network.

References

- [1] Charles E. Leiserson, Amortized Algorithms, Table Doubling, Potential Method. Lecture 13, course MIT 6.046J/18.410J Introduction to Algorithms, Fall 2005.

- [2] Donald Knuth (1998). The Art of Computer Programming. 3: Sorting and Searching (2nd ed.). Addison-Wesley. pp. 513–558. ISBN 0-201-89685-0.

- [3] Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2001). Introduction to Algorithms (second ed.). MIT Press and McGraw-Hill. pp. 221–252. ISBN 978-0-262-53196-2.

- [4] Bret Mulvey, Hash Functions. Accessed April 11, 2009.

- [5] Thomas Wang (1997), Prime Double Hash Table. Accessed April 11, 2009.

- [6] Askitis, Nikolas; Zobel, Justin (2005). Cache-conscious Collision Resolution in String Hash Tables. 3772. 91–102. ISBN 1721172558. http://www.springerlink.com/content/b61721172558qt03/.

- [7] Askitis, Nikolas (2009). Fast and Compact Hash Tables for Integer Keys. 91. 113–122. ISBN 978-1-920682-72-9. http://www.crpit.com/VolumeIndexU.html#Vol91.

- [8] Erik Demaine, Jeff Lind. 6.897: Advanced Data Structures. MIT Computer Science and Artificial Intelligence Laboratory. Spring 2003. http://courses.csail.mit.edu/6.897/spring03/scribe_notes/L2/lecture2.pdf

- [9] Tenenbaum, Aaron M.; Langsam, Yedidyah; Augenstein, Moshe J. (1990). Data Structures Using C. Prentice Hall. pp. 456–461, 472. ISBN 0-13-199746-7.

- [10] Celis, Pedro (1986). Robin Hood hashing. Technical Report CS-86-14, Computer Science Department, University of Waterloo.

- [11] Viola, Alfredo. "Exact distribution of individual displacements in linear probing hashing". Transactions on Algorithms (TALG) (ACM) Vol 1 (Issue 2, October 2005): pp. 214–242. ISSN 1549-6325.

- [12] Litwin, Witold (1980). "Linear hashing: A new tool for file and table addressing". Proc. 6th Conference on Very Large Databases. pp. 212–223.

- [13] Doug Dunham. CS 4521 Lecture Notes. University of Minnesota Duluth. Theorems 11.2, 11.6. Last modified 21 April 2009.

- [14] Crosby and Wallach's Denial of Service via Algorithmic Complexity Attacks.

Further reading

- Michael T. Goodrich and Roberto Tamassia. Data Structures and Algorithms in Java, 4th edition. John Wiley & Sons, Inc. ISBN 0-471-73884-0. Chapter 9: Maps and Dictionaries. pp. 369–418

External links

- A Hash Function for Hash Table Lookup by Bob Jenkins.

- Hash functions by Paul Hsieh

- Libhashish is one of the most feature-rich hash libraries (built-in hash functions, several collision strategies, extensive analysis functionality, ...)

- NIST entry on hash tables

- Open addressing hash table removal algorithm from ICI programming language, ici_set_unassign in set.c (and other occurrences, with permission).

- The Perl Wikibook - Perl Hash Variables

- A basic explanation of how the hash table works by Reliable Software

- Lecture on Hash Tables

- Hash-tables in C — two simple and clear examples of hash tables implementation in C with linear probing and chaining

- C Hash Table

- Implementation of HashTable in C

- MIT's Introduction to Algorithms: Hashing 1 MIT OCW lecture Video

- MIT's Introduction to Algorithms: Hashing 2 MIT OCW lecture Video
