Controlling User Logging in Hadoop

Source: Internet | Editor: 程序博客网 | Time: 2024/05/18 07:57

Imagine that you’re a Hadoop administrator, and to make things interesting you’re managing a multi-tenant Hadoop cluster where data scientists, developers and QA are pounding your cluster. One day you notice that your disks are filling up fast, and after some investigating you realize that the root cause is your MapReduce task attempt logs.

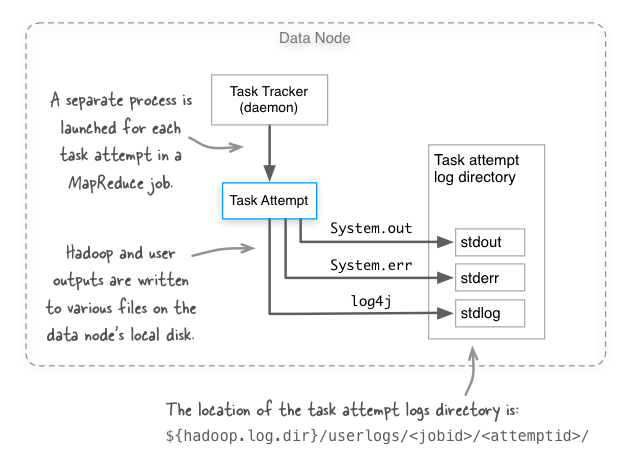

How do you guard against this sort of thing happening? Before we get to that we need to understand where these files exist, and how they’re written. The figure below shows the three log files that are created for each task attempt in MapReduce. Notice that the logs are written to the local disk of the task attempt.
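To find out which jobs are eating the disk, a small script can total the log sizes per job. Here is a minimal Python sketch. It assumes the Hadoop 1.x layout of `userlogs/<job-id>/<attempt-id>/` holding `stdout`, `stderr` and `syslog`, and the function names are made up for illustration, so adjust it for your version and paths.

```python
import os


def userlog_usage_by_job(userlogs_root, follow_links=False):
    """Return {job_dir_name: total_bytes} for each directory under userlogs_root.

    Assumption: each job has its own directory containing one
    sub-directory per task attempt. Set follow_links=True if your
    attempt directories are symlinks onto other local disks.
    """
    usage = {}
    for entry in sorted(os.listdir(userlogs_root)):
        job_dir = os.path.join(userlogs_root, entry)
        if not os.path.isdir(job_dir):
            continue
        total = 0
        for dirpath, _dirs, files in os.walk(job_dir, followlinks=follow_links):
            for name in files:
                try:
                    total += os.path.getsize(os.path.join(dirpath, name))
                except OSError:
                    # The file may vanish while a task is cleaning up.
                    pass
        usage[entry] = total
    return usage


def top_offenders(usage, n=5):
    """Return the n largest (job, bytes) pairs, biggest first."""
    return sorted(usage.items(), key=lambda kv: kv[1], reverse=True)[:n]
```

Running `top_offenders(userlog_usage_by_job(path))` on each node, where `path` is wherever your cluster keeps its `userlogs` directory, should quickly show whether one job or many are responsible.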

OK, so how does Hadoop normally make sure that our disks don’t fill up with these task attempt logs? I’ll cover three approaches.

Approach 1: mapred.userlog.retain.hours

Hadoop has a mapred.userlog.retain.hours configurable, which is defined in mapred-default.xml as:

The maximum time, in hours, for which the user-logs are to be retained after the job completion.

Great, but what if your disks are filling up before Hadoop has had a chance to automatically clean them up? It may be tempting to reduce mapred.userlog.retain.hours to a smaller value, but before you do that you should know that there’s a bug in Hadoop versions 1.x and earlier (see MAPREDUCE-158), where the logs for jobs that run longer than mapred.userlog.retain.hours are accidentally deleted. So maybe we should look elsewhere to solve our overflowing logs problem.
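If you do consider lowering the value, it helps to check what is currently configured and which jobs would outlive it. The Python sketch below assumes the usual Hadoop `<configuration>/<property>/<name>/<value>` XML layout. The helper names, the sample value of 12, and the job runtimes are all hypothetical inputs you would supply yourself.

```python
import xml.etree.ElementTree as ET

# Sample config text for illustration only; the value 12 is made up.
SAMPLE_XML = """<configuration>
  <property>
    <name>mapred.userlog.retain.hours</name>
    <value>12</value>
  </property>
</configuration>"""


def read_hadoop_property(xml_text, name, default=None):
    """Return the value of a property from Hadoop-style config XML."""
    root = ET.fromstring(xml_text)
    for prop in root.iter("property"):
        if prop.findtext("name", "").strip() == name:
            value = prop.findtext("value")
            return value.strip() if value is not None else default
    return default


def jobs_exceeding_retention(retain_hours, runtimes_by_job):
    """Return the jobs whose runtime (in hours) is longer than retain_hours.

    These are the jobs that could be affected by the bug described above.
    """
    return sorted(job for job, hours in runtimes_by_job.items()
                  if hours > retain_hours)
```

For example, with the retention read from `SAMPLE_XML` and runtimes of `{"etl": 30, "adhoc": 1}`, only `etl` is flagged.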

Approach 2: mapred.userlog.limit.kb

Hadoop has another configurable, mapred.userlog.limit.kb, which can be used to limit the file size of syslog, which is the log4j log output file. Let’s peek again at the documentation:

The maximum size of user-logs of each task in KB. 0 disables the cap.

The default value is 0, which means that log writes go straight to the log file. So all we need to do is set a positive value and we’re set, right? Not so fast - it turns out that this approach has two disadvantages:

- Hadoop and user logs are actually cached in memory, so you’re taking away mapred.userlog.limit.kb kilobytes worth of memory from your task attempt’s process.
- Logs are only written out when the task attempt process has completed, and only contain the last mapred.userlog.limit.kb worth of log entries, so this can make it challenging to debug long-running tasks.
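To make these two drawbacks concrete, here is a toy Python model of a size-capped, in-memory log buffer. It is not Hadoop's actual appender code, just an illustration of the behavior described above: entries sit in memory, the oldest are evicted once the cap is exceeded, and only the tail is available when the task finishes. The class name `TailBuffer` and the byte-based accounting are assumptions.

```python
from collections import deque


class TailBuffer:
    """Keep only the most recent limit_bytes of log lines in memory."""

    def __init__(self, limit_bytes):
        self.limit_bytes = limit_bytes
        self.lines = deque()
        self.size = 0  # bytes currently held in memory

    def append(self, line):
        data = line.encode("utf-8")
        self.lines.append(data)
        self.size += len(data)
        # Evict the oldest entries until we are back under the cap.
        while self.size > self.limit_bytes and self.lines:
            self.size -= len(self.lines.popleft())

    def flush(self):
        """Return what would be written out when the task completes."""
        return b"".join(self.lines).decode("utf-8")
```

With a cap of 10 bytes, appending three 5-byte lines leaves only the last two in the buffer. The first line is gone for good, which is exactly what hurts when the interesting error happened early in a long-running task.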

OK, so what else can we try? We have one more solution, log levels.

Approach 3: Changing log levels

Ideally all your Hadoop users got the memo about minimizing excessive logging. In reality you have limited control over what users decide to log in their code, but what you do control is the task attempt log levels.

If you had a MapReduce job that was aggressively logging in package com.example.mr, then you may be tempted to use the daemonlog CLI to connect to all the TaskTracker daemons and change the logging to ERROR level:

    hadoop daemonlog -setlevel <host:port> com.example.mr ERROR

Yet again we hit a roadblock - this will only change the logging level for the TaskTracker process, and not for the task attempt process. Drat! This really only leaves one option, which is to update your ${HADOOP_HOME}/conf/log4j.properties on all your data nodes by adding the following line to this file:

    log4j.logger.com.example.mr=ERROR

The great thing about this change is that you don’t need to restart MapReduce, since any new task attempt processes will pick up your changes to log4j.properties.
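Editing log4j.properties by hand on every node gets old quickly, so you will probably want to script it. Below is a minimal Python sketch of the text edit only. The helper name `set_logger_level` is hypothetical, and how you push the file out to your nodes is left to your own tooling.

```python
def set_logger_level(properties_text, logger, level):
    """Return properties_text with log4j.logger.<logger> set to level.

    An existing entry for exactly this logger is replaced (duplicates
    are dropped); otherwise the entry is appended at the end.
    """
    key = "log4j.logger." + logger
    new_line = key + "=" + level
    out = []
    replaced = False
    for line in properties_text.splitlines():
        # Commented-out lines start with '#', so their name never equals key.
        name = line.split("=", 1)[0].strip() if "=" in line else None
        if name == key:
            if not replaced:
                out.append(new_line)
                replaced = True
        else:
            out.append(line)
    if not replaced:
        out.append(new_line)
    return "\n".join(out) + "\n"
```

Calling it a second time with the same arguments returns the same text, so it is safe to rerun across the cluster.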
