Switching Performance – Connecting Linux Network Namespaces

来源:互联网 发布:mac和windows快捷键 编辑:程序博客网 时间:2024/05/29 18:23

In a previous article I have shown several solutions to interconnect Linux network namespaces (http://www.opencloudblog.com). Four different solutions can be used – but which is the best solution with respect to performance and resource usage? This is quite interesting when you are running Openstack Networking Neutron together with the Openvswitch. The systems used to run these tests have Ubuntu 13.04 installed with kernel 3.8.0.30 and Openvswitch 1.9. The MTU of the IP interfaces is kept at the default value of 1500.

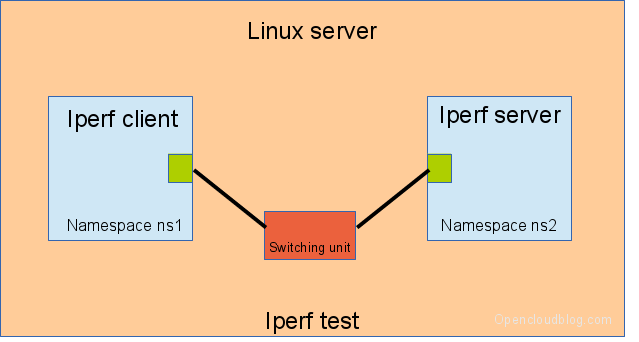

A test script has been used to collect performance numbers. The test script creates 2 namespaces, connects these namespaces using different Linux network technologies and measures the throughput and CPU usage using the software iperf. The iperf server process is started in one namespace, the client iperf process is started in the other namespace.

IMPORTANT REMARK: The results are confusing – a deeper analysis shows, that the configuration of the virtual network devices has a major impact on the performance. The settings TSO and GSO play a very important role when using network devices. I’ll show an analyses in an upcoming article.

Perf test setup

Single CPU (i5-2550 [3.3 GHz]) Kernel 3.13

The test system has the Desktop CPU i5-2500 CPU @ 3.30GHz providing 4 CPU cores and 32 GByte DDR3-10600 RAM providing around 160 GBit/s RAM throughput.

The test system is running Ubuntu 14.04 with kernel 3.13.0.24 and Openvswitch 2.0.1

The results are shown in the table below. iperf has been running with one, two and four threads. At the end the limiting factor is CPU usage. The column “efficiency” is defined as network throughput in GBit/s per Gigahertz available on CPUs.

iperf threads

[GBit/s]

tso gso lro gro on

[GBit/s per CPUGHZ]

[GBit/s]

tso gso lro gro off

The numbers show an drastic effect, if TSO … are switches off. TSO perfformes the segmentation at the NIC level. In this software NIC only environment no segmentation is done by the NIC, the large packets (average 40 kBytes) are sent to the other side as one packet. The guiding factor is the packet packet rate.

Single CPU (i5-2550 [3.3 GHz]) Kernel 3.8

The test system is running Ubuntu 13.04 with kernel 3.8.0.30 and Openvswitch 1.9 .

The results are shown in the table below. iperf has been running with one, two and four threads. At the end the limiting factor is CPU usage. The column “efficiency” is defined as network throughput in GBit/s per Gigahertz available on CPUs.

iperf threads

[GBit/s]

[GBit/s per CPUGHZ]

The results show a huge differences. The openvswitch using two internal openvswitch ports has the best throughput and the best efficiency.

The short summary is:

- Use Openvswitch and Openvswitch internal ports – in the case of one iperf thread you get 6.9 GBit/s throughput per CPU Ghz. But this solution does not provide any iptables rules on the link.

- If you like the old linuxbridge and veth pairs you get only 0.7 GBit/s per CPU Ghz throughput. With this solution it’s possible to filter the traffic on the network namespace links.

The table shows some interesting effects, e.g.:

- The test with the ovs and two ovs ports shows a drop in performance between 4 and 16 threads. The CPU analysis shows, that in the case of 8 threads, the CPU time used by softirqs doubled in comparison to the case of 4 threads. The softirq time used by 16 threads is the same as for 4 threads.

Openstack

If you are running Openstack Neutron, you should use the Openvswitch. Avoid linuxbridges. When connecting the Neutron networking Router/LBaas/DHCP namespaces DO NOT enable ovs_use_veth.

- Switching Performance – Connecting Linux Network Namespaces

- (4) Switching Performance – Connecting Linux Network Namespaces

- Linux Switching – Interconnecting Namespaces

- Linux Switching – Interconnecting Namespaces

- Linux Network Namespaces – Background

- Linux Switching – Interconnecting Namespaces 母机桥技术

- (5) Linux Network Namespaces – Background

- Linux Network Namespaces

- (1) Linux Network Namespaces

- Introducing Linux Network Namespaces

- (3) Linux Switching – Interconnecting Namespaces – brctl – ovs-vsctl – veth pair

- Switching Performance – Chaining OVS bridges

- (6) Switching Performance – Chaining OVS bridges

- linux 网络命名空间 Network namespaces

- Linux Namespaces

- namespaces - overview of Linux namespaces

- Connecting to the Network

- Connecting to the Network

- 数据结构C++版

- python map iter

- 有关python的字典以及对象什么的一些小技巧

- Dev控件GridControl 的使用

- VC/C++的面试题

- Switching Performance – Connecting Linux Network Namespaces

- 依赖倒置原则(DIP)

- 进入Quick-cocos2d-x准备工作

- 个人学习整理:C++版冒泡排序

- 明天百度面试太紧张

- 截杜赂祷亮弦拇窝凹霉幌袄

- DEV GridControl小结

- epoll两种监听模式

- mysql简单查询