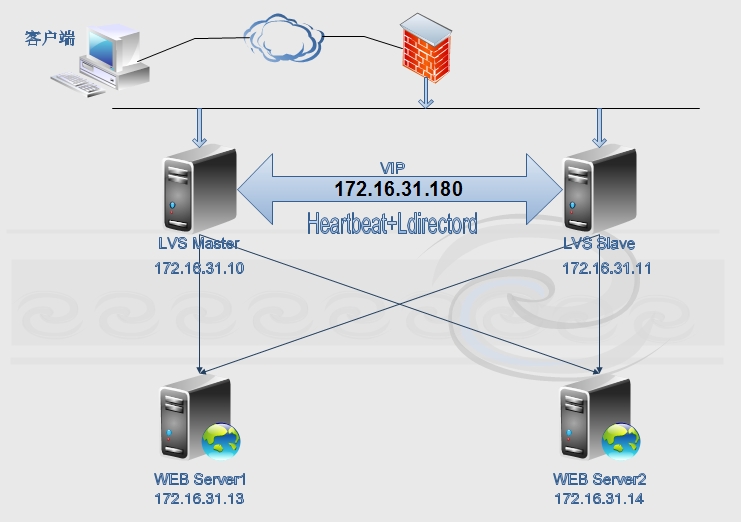

Building a highly available ipvs cluster with heartbeat's ldirectord

Source: Internet | Editor: 程序博客网 | Date: 2024/04/28 23:44

Set up key-based (passwordless) SSH between the HA nodes:

    [root@node1 ~]# ssh-keygen -t rsa -P ""
    [root@node1 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node2
    [root@node2 ~]# ssh-keygen -t rsa -P ""
    [root@node2 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node1

The hosts file is identical on all four nodes:

    [root@node1 ~]# cat /etc/hosts
    127.0.0.1    localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1          localhost localhost.localdomain localhost6 localhost6.localdomain6
    172.16.0.1   server.magelinux.com server
    172.16.31.10 node1.stu31.com node1
    172.16.31.11 node2.stu31.com node2
    172.16.31.13 rs1.stu31.com rs1
    172.16.31.14 rs2.stu31.com rs2

Check that the clocks on the HA nodes agree:

    [root@node1 ~]# date; ssh node2 'date'
    Sun Jan 4 17:57:51 CST 2015
    Sun Jan 4 17:57:50 CST 2015

II. Install heartbeat

    [root@node1 heartbeat2]# ls
    heartbeat-2.1.4-12.el6.x86_64.rpm
    heartbeat-gui-2.1.4-12.el6.x86_64.rpm
    heartbeat-stonith-2.1.4-12.el6.x86_64.rpm
    heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm
    heartbeat-pils-2.1.4-12.el6.x86_64.rpm

Install the prerequisite packages:

    [root@node1 heartbeat2]# yum install -y net-snmp-libs libnet PyXML

3. Install the heartbeat packages:

    [root@node1 heartbeat2]# rpm -ivh heartbeat-2.1.4-12.el6.x86_64.rpm heartbeat-stonith-2.1.4-12.el6.x86_64.rpm heartbeat-pils-2.1.4-12.el6.x86_64.rpm heartbeat-gui-2.1.4-12.el6.x86_64.rpm heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm
    error: Failed dependencies:
            ipvsadm is needed by heartbeat-ldirectord-2.1.4-12.el6.x86_64
            perl(Mail::Send) is needed by heartbeat-ldirectord-2.1.4-12.el6.x86_64

The install fails on unmet dependencies. Resolve them on both node1 and node2:

    # yum -y install ipvsadm perl-MailTools perl-TimeDate

Install again:

    # rpm -ivh heartbeat-2.1.4-12.el6.x86_64.rpm heartbeat-stonith-2.1.4-12.el6.x86_64.rpm heartbeat-pils-2.1.4-12.el6.x86_64.rpm heartbeat-gui-2.1.4-12.el6.x86_64.rpm heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm
    Preparing...                ########################################### [100%]
       1:heartbeat-pils         ########################################### [ 20%]
       2:heartbeat-stonith      ########################################### [ 40%]
       3:heartbeat              ########################################### [ 60%]
       4:heartbeat-gui          ########################################### [ 80%]
       5:heartbeat-ldirectord   ########################################### [100%]

Installation complete.

    [root@node1 heartbeat2]# rpm -ql heartbeat-ldirectord
    /etc/ha.d/resource.d/ldirectord
    /etc/init.d/ldirectord
    /etc/logrotate.d/ldirectord
    /usr/sbin/ldirectord
    /usr/share/doc/heartbeat-ldirectord-2.1.4
    /usr/share/doc/heartbeat-ldirectord-2.1.4/COPYING
    /usr/share/doc/heartbeat-ldirectord-2.1.4/README
    /usr/share/doc/heartbeat-ldirectord-2.1.4/ldirectord.cf
    /usr/share/man/man8/ldirectord.8.gz

The package ships a template configuration file (ldirectord.cf).

Send heartbeat's log to its own file:

    [root@node1 ha.d]# vim /etc/rsyslog.conf
    # add the following line:
    local0.*    /var/log/heartbeat.log

Copy the file to node2:

    [root@node1 ha.d]# scp /etc/rsyslog.conf node2:/etc/rsyslog.conf

2. Copy the configuration file templates to /etc/ha.d:

    [root@node1 ha.d]# cd /usr/share/doc/heartbeat-2.1.4/
    [root@node1 heartbeat-2.1.4]# cp authkeys ha.cf /etc/ha.d/

Main configuration file:

    [root@node1 ha.d]# grep -v ^# /etc/ha.d/ha.cf
    logfacility local0
    mcast eth0 225.231.123.31 694 1 0
    auto_failback on
    node node1.stu31.com
    node node2.stu31.com
    ping 172.16.0.1
    crm on

Authentication file:

    [root@node1 ha.d]# vim authkeys
    auth 2
    2 sha1 password

The authkeys file must have mode 600 or 400. Copy both files to node2:

    [root@node1 ha.d]# scp authkeys ha.cf node2:/etc/ha.d/
    authkeys                 100%  675     0.7KB/s   00:00
    ha.cf                    100%   10KB  10.4KB/s   00:00

IV. Configure the RS servers (the web servers)

    [root@rs1 ~]# cat rs.sh
    #!/bin/bash
    # Configure a real server for LVS-DR: bind the VIP to lo:0 and
    # stop the host from answering ARP requests for it.
    vip=172.16.31.180
    interface="lo:0"
    case $1 in
    start)
        echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce
        echo 2 > /proc/sys/net/ipv4/conf/lo/arp_announce
        echo 1 > /proc/sys/net/ipv4/conf/lo/arp_ignore
        echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore
        ifconfig $interface $vip broadcast $vip netmask 255.255.255.255 up
        route add -host $vip dev $interface
        ;;
    stop)
        echo 0 > /proc/sys/net/ipv4/conf/all/arp_announce
        echo 0 > /proc/sys/net/ipv4/conf/lo/arp_announce
        echo 0 > /proc/sys/net/ipv4/conf/lo/arp_ignore
        echo 0 > /proc/sys/net/ipv4/conf/all/arp_ignore
        ifconfig $interface down
        ;;
    status)
        if ifconfig $interface | grep $vip &>/dev/null; then
            echo "ipvs is running."
        else
            echo "ipvs is stopped."
        fi
        ;;
    *)
        echo "Usage: $(basename $0) start|stop|status"
        exit 1
        ;;
    esac

Run the script with start on both RS. Then create a test page on each:

    [root@rs1 ~]# echo "rs1.stu31.com" > /var/www/html/index.html
    [root@rs2 ~]# echo "rs2.stu31.com" > /var/www/html/index.html

Start the httpd service and test it:

    [root@rs1 ~]# service httpd start
    Starting httpd:                                            [  OK  ]
    [root@rs1 ~]# curl http://172.16.31.13
    rs1.stu31.com
    [root@rs2 ~]# service httpd start
    Starting httpd:                                            [  OK  ]
    [root@rs2 ~]# curl http://172.16.31.14
    rs2.stu31.com

Once the test is done, stop the httpd service and disable it at boot:

    # service httpd stop
    # chkconfig httpd off

The RS are now set up.

On node1, configure the VIP and the ipvs rules by hand to test LVS:

    [root@node1 ~]# ifconfig eth0:0 172.16.31.180 broadcast 172.16.31.180 netmask 255.255.255.255 up
    [root@node1 ~]# route add -host 172.16.31.180 dev eth0:0
    [root@node1 ~]# ipvsadm -C
    [root@node1 ~]# ipvsadm -A -t 172.16.31.180:80 -s rr
    [root@node1 ~]# ipvsadm -a -t 172.16.31.180:80 -r 172.16.31.13 -g
    [root@node1 ~]# ipvsadm -a -t 172.16.31.180:80 -r 172.16.31.14 -g
    [root@node1 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 172.16.31.13:80              Route   1      0          0
      -> 172.16.31.14:80              Route   1      0          0

2. Access test:

    [root@nfs ~]# curl http://172.16.31.180
    rs2.stu31.com
    [root@nfs ~]# curl http://172.16.31.180
    rs1.stu31.com

Load balancing works:

    [root@node1 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 172.16.31.13:80              Route   1      0          1
      -> 172.16.31.14:80              Route   1      0          1

Clear the rules configured on the node:

    [root@node1 ~]# ipvsadm -C
    [root@node1 ~]# route del -host 172.16.31.180
    [root@node1 ~]# ifconfig eth0:0 down

Run the same test on the second LVS server, and clear its rules once the test succeeds.

Create a sorry page on each LVS node:

    [root@node1 ~]# echo "sorry page from lvs1" > /var/www/html/index.html
    [root@node2 ~]# echo "sorry page from lvs2" > /var/www/html/index.html

Start the web service and check that the sorry page is served:

    [root@node1 ha.d]# service httpd start
    Starting httpd:                                            [  OK  ]
    [root@node1 ha.d]# curl http://172.16.31.10
    sorry page from lvs1
    [root@node2 ~]# service httpd start
    Starting httpd:                                            [  OK  ]
    [root@node2 ~]# curl http://172.16.31.11
    sorry page from lvs2

4. Configure the two LVS nodes as an HA cluster:

    [root@node1 ~]# cd /usr/share/doc/heartbeat-ldirectord-2.1.4/
    [root@node1 heartbeat-ldirectord-2.1.4]# ls
    COPYING  ldirectord.cf  README
    [root@node1 heartbeat-ldirectord-2.1.4]# cp ldirectord.cf /etc/ha.d

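    [root@node1 ha.d]# grep -v ^# /etc/ha.d/ldirectord.cf
    # Health-check timeout
    checktimeout=3
    # Health-check interval
    checkinterval=1
    # Reload the configuration automatically when the file changes
    autoreload=yes
    # With quiescent=yes a failed real server stays in the LVS table with
    # its weight set to 0 instead of being removed; the weight is restored
    # when the server recovers
    quiescent=yes
    # Virtual service: port 80 on the VIP
    virtual=172.16.31.180:80
        # Real server addresses and ports; gate = direct routing
        real=172.16.31.13:80 gate
        real=172.16.31.14:80 gate
        # If every RS is down, fall back to the local loopback address
        fallback=127.0.0.1:80 gate
        # The service is HTTP
        service=http
        # Page stored in each RS's web root and reachable over HTTP;
        # it is used to decide whether the RS is alive
        request=".health.html"
        # Expected page content
        receive="OK"
        # Scheduling algorithm
        scheduler=rr
        #persistent=600
        #netmask=255.255.255.255
        # Protocol to check
        protocol=tcp
        # Check type
        checktype=negotiate
        # Port to check
        checkport=80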
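To make the request/receive pair above more concrete, here is a minimal sketch of a negotiate-style HTTP check written in bash. It is not ldirectord's own code: the function names `body_matches` and `check_rs` are made up for illustration, and the match is a plain substring test, whereas ldirectord treats `receive` as a regular expression.

```shell
#!/bin/bash
# Illustrative only: a hand-rolled version of the HTTP health check that
# the request=/receive= settings describe. Function names are hypothetical.

# body_matches BODY PATTERN
# Succeeds if BODY contains PATTERN as a fixed string.
body_matches() {
    printf '%s' "$1" | grep -qF -- "$2"
}

# check_rs HOST PORT PATH PATTERN [TIMEOUT]
# Fetches http://HOST:PORT/PATH and succeeds only if the request works
# (-f turns HTTP errors such as 404 into a failure) and the body matches.
check_rs() {
    local host=$1 port=$2 path=$3 pattern=$4 timeout=${5:-3}
    local body
    body=$(curl -sf --max-time "$timeout" "http://${host}:${port}/${path}") || return 1
    body_matches "$body" "$pattern"
}

# Example invocation from a director (not executed here):
#   for rs in 172.16.31.13 172.16.31.14; do
#       if check_rs "$rs" 80 .health.html OK 3; then
#           echo "$rs up"
#       else
#           echo "$rs down"
#       fi
#   done
```

The 3-second timeout in the example mirrors checktimeout=3. Running the loop by hand is a quick way to confirm that the health page is reachable before handing the check over to ldirectord.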

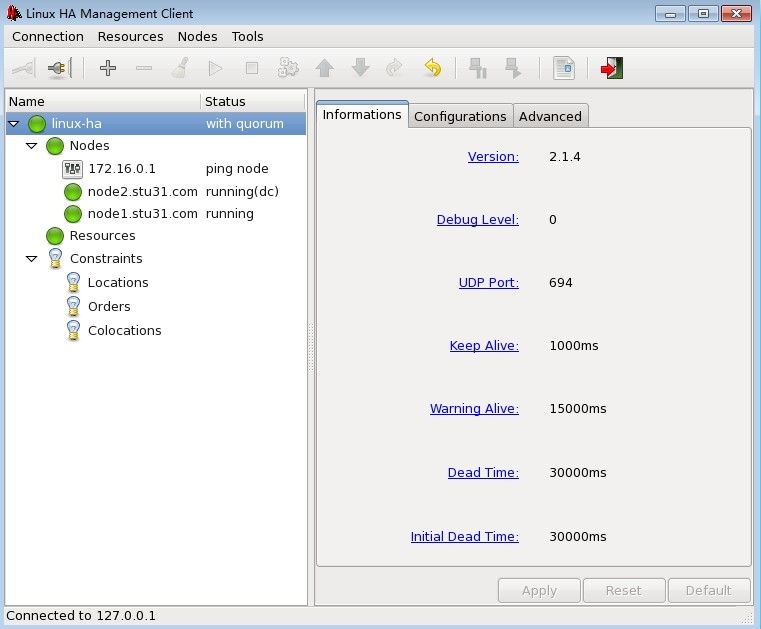

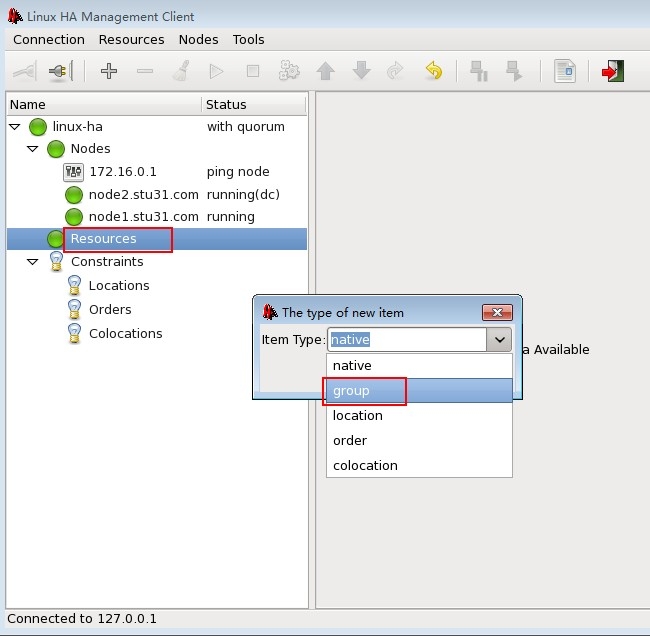

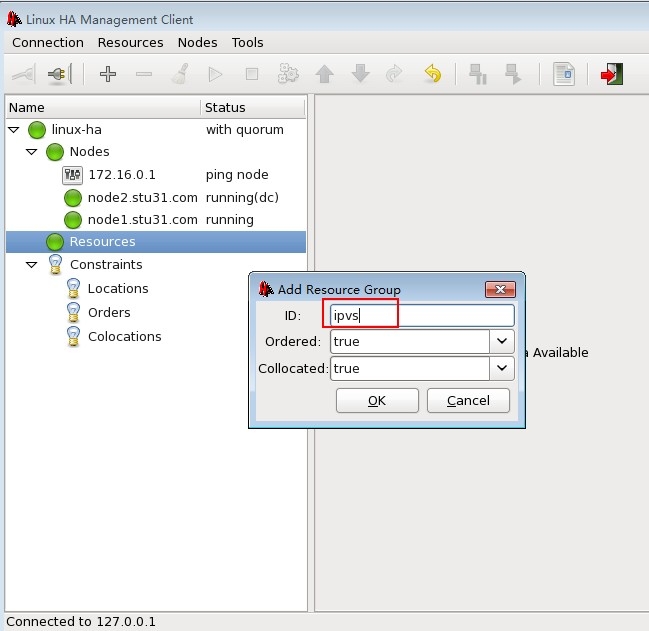

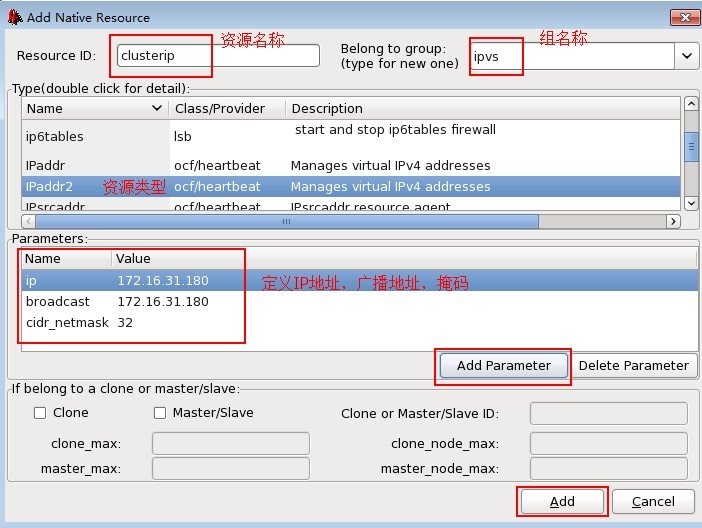

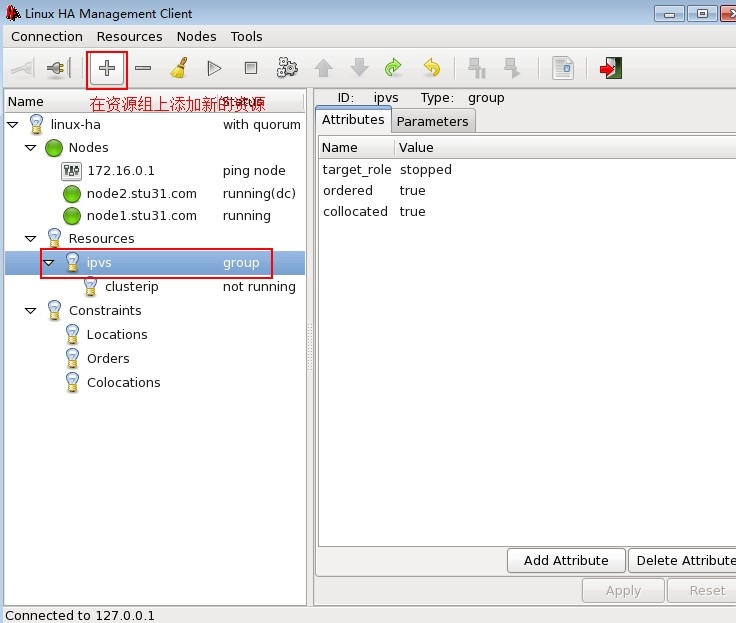

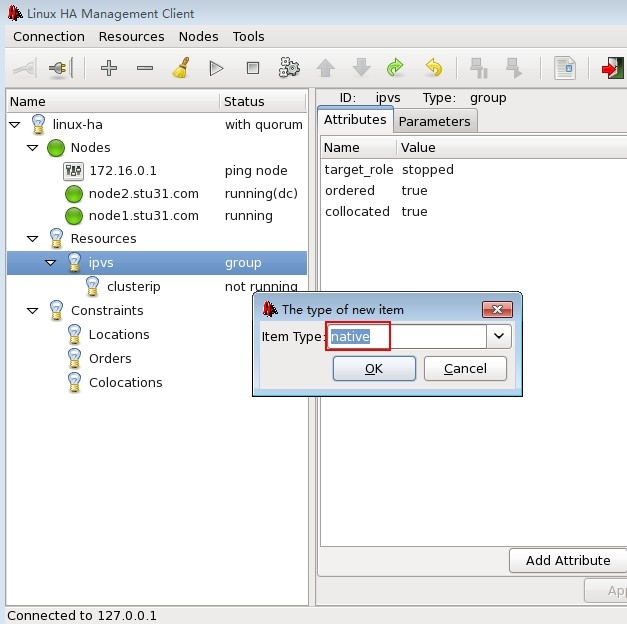

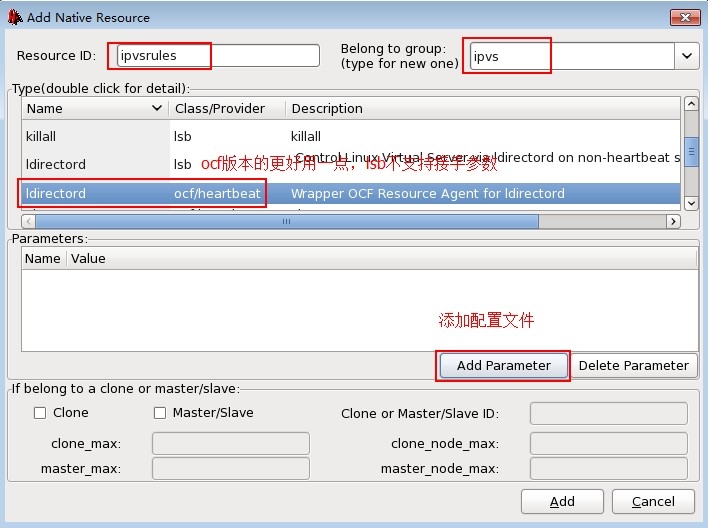

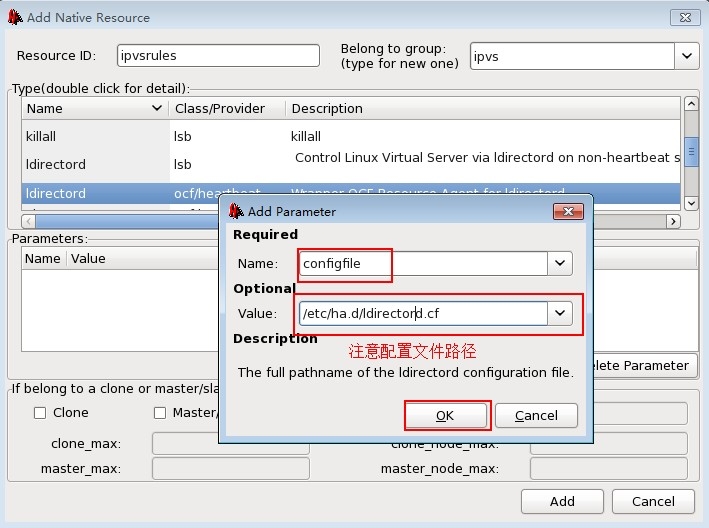

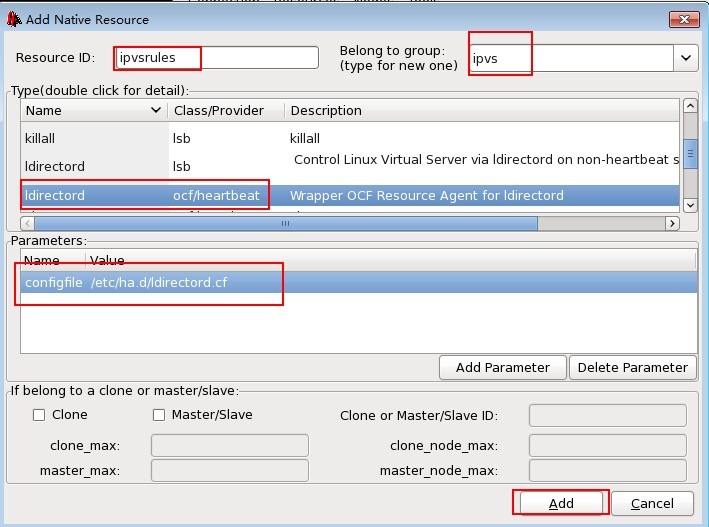

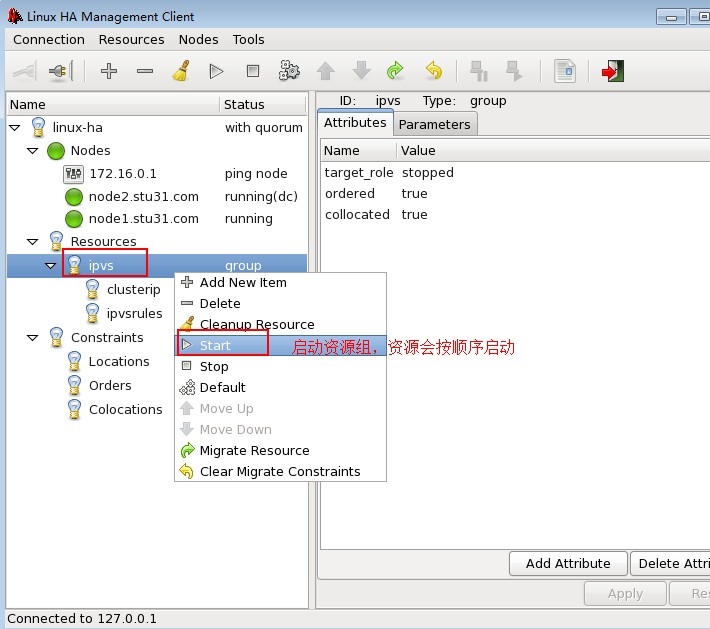

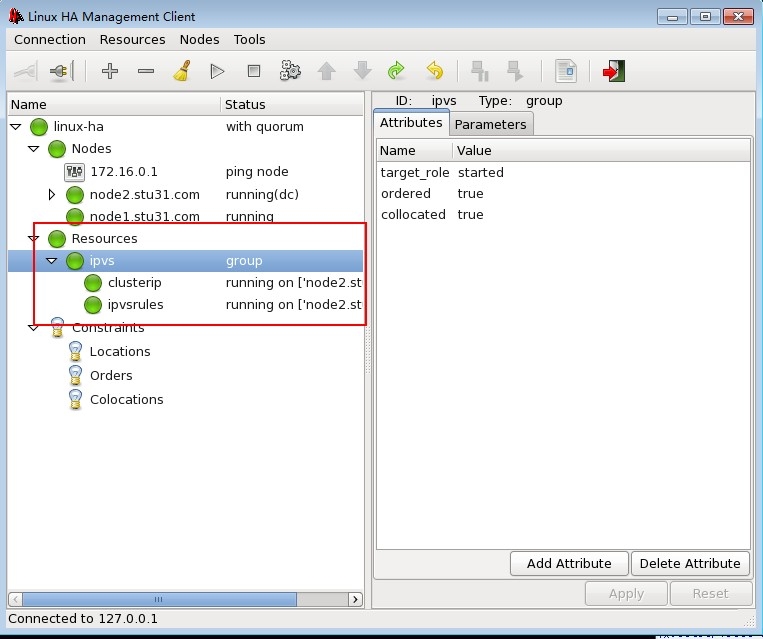

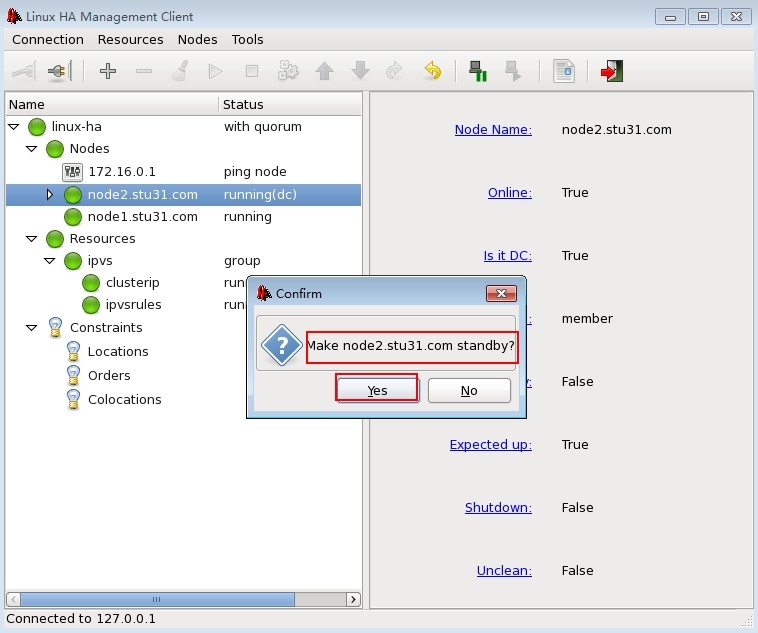

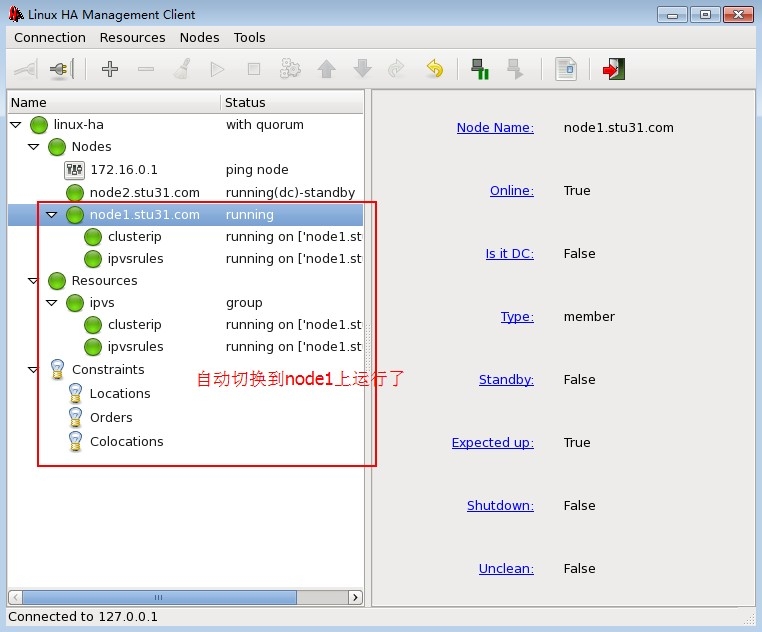

Create the health-check page on both RS:

    [root@rs1 ~]# echo "OK" > /var/www/html/.health.html
    [root@rs2 ~]# echo "OK" > /var/www/html/.health.html

Copy the ldirectord.cf configuration file to node2:

    [root@node1 ha.d]# scp ldirectord.cf node2:/etc/ha.d/
    ldirectord.cf            100% 7553     7.4KB/s   00:00

Start the heartbeat service:

    [root@node1 ha.d]# service heartbeat start; ssh node2 'service heartbeat start'
    Starting High-Availability services:
    Done.
    Starting High-Availability services:
    Done.

Check the listening port:

    [root@node1 ha.d]# ss -tunl | grep 5560
    tcp    LISTEN     0      10                     *:5560                  *:*

VI. Configure resources

    [root@node1 ~]# echo oracle | passwd --stdin hacluster
    Changing password for user hacluster.
    passwd: all authentication tokens updated successfully.

Open the graphical configuration tool and configure the resources.

Access test:

    [root@nfs ~]# curl http://172.16.31.180
    rs2.stu31.com
    [root@nfs ~]# curl http://172.16.31.180
    rs1.stu31.com

Check the load-balancing status on node2:

    [root@node2 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 172.16.31.13:80              Route   1      0          1
      -> 172.16.31.14:80              Route   1      0          1

Now rename the health-check page on both back-end RS and observe the effect:

    [root@rs1 ~]# cd /var/www/html/
    [root@rs1 html]# mv .health.html a.html
    [root@rs2 ~]# cd /var/www/html/
    [root@rs2 html]# mv .health.html a.html

Access from the client:

    [root@nfs ~]# curl http://172.16.31.180
    sorry page from lvs2

The cluster concludes that every back-end RS is down, so it switches to the sorry-page web server and returns the error message to the user.

    [root@node2 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 127.0.0.1:80                 Local   1      0          1
      -> 172.16.31.13:80              Route   0      0          0
      -> 172.16.31.14:80              Route   0      0          0

The failed RS have their weight set to 0, and the local httpd service is given weight 1.
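The weight column is what tells you which servers are still receiving traffic. As a rough sketch, the two helper functions below (hypothetical names, not part of ipvsadm) filter `ipvsadm -L -n` output by that column. They assume the column layout shown in this article, where the weight is the fourth whitespace-separated field of each `->` row.

```shell
#!/bin/bash
# Illustrative helpers for reading `ipvsadm -L -n` output on stdin.
# Assumes rows of the form: -> ADDR:PORT FORWARD WEIGHT ACTIVE INACTIVE

# quiesced_servers: print address:port of real servers with weight 0.
quiesced_servers() {
    awk '$1 == "->" && $4 == "0" { print $2 }'
}

# active_servers: print address:port of real servers with weight > 0.
# The regex guard skips the header row, whose fourth field is "Weight".
active_servers() {
    awk '$1 == "->" && $4 ~ /^[0-9]+$/ && $4 > 0 { print $2 }'
}

# Example invocation on a director (not executed here):
#   ipvsadm -L -n | quiesced_servers
#   ipvsadm -L -n | active_servers
```

Against the fallback state shown above, `quiesced_servers` would list the two RS addresses and `active_servers` would list only 127.0.0.1:80.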

Rename the health-check pages back:

    [root@rs1 html]# mv a.html .health.html
    [root@rs2 html]# mv a.html .health.html

Access test:

    [root@nfs ~]# curl http://172.16.31.180
    rs2.stu31.com
    [root@nfs ~]# curl http://172.16.31.180
    rs1.stu31.com

Check the load-balancing status:

    [root@node2 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 172.16.31.13:80              Route   1      0          1
      -> 172.16.31.14:80              Route   1      0          1

The test succeeds.

    [root@nfs ~]# curl http://172.16.31.180
    rs2.stu31.com
    [root@nfs ~]# curl http://172.16.31.180
    rs1.stu31.com

Check the load-balancing status on node1:

    [root@node1 ~]# ipvsadm -L -n
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.16.31.180:80 rr
      -> 172.16.31.13:80              Route   1      0          1
      -> 172.16.31.14:80              Route   1      0          1

This completes the highly available ipvs load-balancing cluster built with heartbeat's ldirectord component, which also lets the cluster monitor the back-end RS.
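The access tests in this walkthrough use two curl calls at a time. For a slightly stronger check of the rr scheduler, the responses from a longer run can be counted per server. The sketch below is one possible way to do that; `tally_responses` is a made-up helper name, and the loop in the comment assumes the VIP used throughout this article.

```shell
#!/bin/bash
# Illustrative helper: count how often each distinct response body appears.

# tally_responses: read one response body per line on stdin and print
# "<count> <body>" for each distinct body, sorted by body.
tally_responses() {
    sort | uniq -c | awk '{ c = $1; $1 = ""; sub(/^ /, ""); print c, $0 }'
}

# Example invocation from a client (not executed here):
#   for i in $(seq 1 10); do
#       curl -s http://172.16.31.180
#   done | tally_responses
```

With both RS healthy and round-robin scheduling, the counts for rs1.stu31.com and rs2.stu31.com should come out roughly equal; if only the sorry page shows up, the directors have fallen back to their local httpd.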
