Submitting Spark Jobs from a Java Web Application


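Software versions:

Spark 1.4.1, Hadoop 2.6, Scala 2.10.5, MyEclipse 2014, IntelliJ IDEA 14, JDK 1.8, Tomcat 7

Machines:

Windows 7 (with JDK 1.8, MyEclipse 2014, IntelliJ IDEA 14, Tomcat 7);

CentOS 6.6 virtual machine (pseudo-distributed Hadoop cluster, Spark standalone cluster, JDK 1.8);

CentOS 7 virtual machine (Tomcat, JDK 1.8);

1. Scenarios:

1. A plain Java program on Windows calls Spark to run a Spark program written in Scala. Two modes are covered:

1> submit the job to the Spark cluster and run it in standalone mode;

2> submit the job to the YARN cluster in yarn-client mode;

2. A Java web application developed on Windows calls Spark to run the Scala Spark program, with the same two modes as in item 1.

3. A Java web application running on Linux calls Spark to run the Scala Spark program, with the same two modes as in item 1.

2. Implementation: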

1. 简单Scala程序,该程序的功能是读取HDFS中的log日志文件,过滤log文件中的WARN和ERROR的记录,最后把过滤后的记录写入到HDFS中,代码如下:

使用IntelliJ IDEA 并打成jar包备用(lz这里命名为spark_filter.jar);

2. java调用spark_filter.jar中的Scala_Test 文件,并采用Spark standAlone模式

java代码如下:

具体操作,使用MyEclipse新建java web工程,把spark_filter.jar 以及spark-assembly-1.4.1-hadoop2.6.0.jar(该文件在Spark压缩文件的lib目录中,同时该文件较大,拷贝需要一定时间) 拷贝到WebRoot/WEB-INF/lib目录。(注意:这里可以直接建立java web项目,在测试java调用时,直接运行java代码即可,在测试web项目时,开启tomcat即可)

java调用spark_filter.jar中的Scala_Test 文件,并采用Yarn模式。采用Yarn模式,不能使用简单的修改master为“yarn-client”或“yarn-cluster”,在使用Spark-shell或者spark-submit的时候,使用这个,同时配置HADOOP_CONF_DIR路径是可以的,但是在这里,读取不到HADOOP的配置,所以这里采用其他方式,使用yarn.Clent提交的方式,java代码如下:

SparkServlet如下:

这里只是调用了java编写的任务调用类而已。同时,SparServlet和YarnServlet也只是在调用的地方不同而已。

在web测试时,首先直接在MyEclipse上测试,然后拷贝工程WebRoot到centos7,再次运行tomcat,进行测试。

3. 总结及问题1. 测试结果:

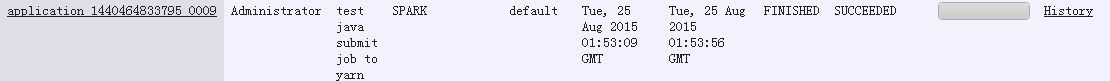

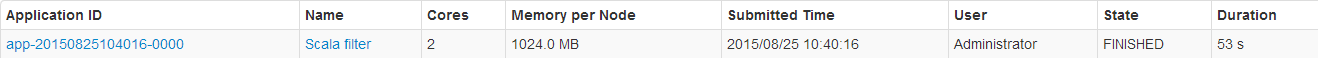

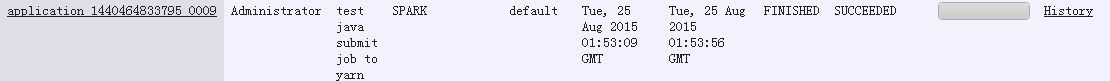

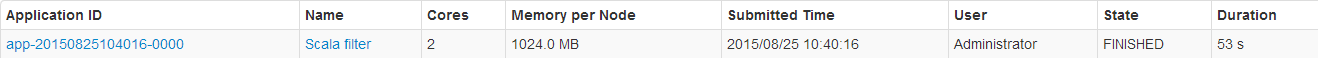

1> java代码直接提交任务到Spark和Yarn,进行日志文件的过滤,测试是成功运行的。可以在Yarn和Spark的监控中看到相关信息:

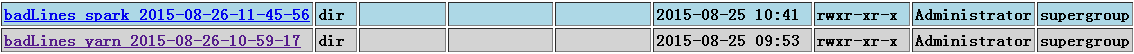

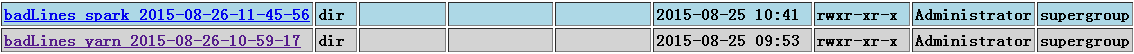

同时,在HDFS可以看到输出的文件:

2> java web 提交任务到Spark和Yarn,首先需要把spark-assembly-1.4.1-hadoop2.6.0.jar中的javax.servlet文件夹删掉,因为会和tomcat的servlet-api.jar冲突。

a. 在windows和linux上启动tomcat,提交任务到Spark standAlone,测试成功运行;

b. 在windows和linux上启动tomcat,提交任务到Yarn,测试失败;

2. 遇到的问题:

1> java web 提交任务到Yarn,会失败,失败的主要日志如下:

这个是因为javax.servlet的包被删掉了,和tomcat的冲突。

同时,在日志中还可以看到:

这里在环境初始化的时候,上传了两个jar,一个就是spark-assembly-1.4.1-hadoop2.6.0.jar 还有一个就是我们自定义的jar。上传的spark-assembly-1.4.1-hadoop2.6.0.jar 里面没有javax.servlet的文件夹,所以会报错。在java中直接调用(没有删除javax.servlet的时候)同样会看到这样的日志,同样的上传,那时是可以的,也就是说这里确实是删除了包文件夹的关系。那么如何修复呢?

上传servlet-api到hdfs,同时在使用yarn.Client提交任务的时候,添加相关的参数,这里查看参数,发现两个比较相关的参数,--addJars以及--archive 参数,把这两个参数都添加后,看到日志中确实把这个jar包作为了job的共享文件,但是java web提交任务到yarn 还是报这个类找不到的错误。所以这个办法也是行不通!

使用yarn.Client提交任务到Yarn参考http://blog.sequenceiq.com/blog/2014/08/22/spark-submit-in-java/ 。


1. A simple Scala program. It reads a log file from HDFS, keeps the records that contain WARN or ERROR, and writes the filtered records back to HDFS. The code is as follows:

```scala
import org.apache.spark.{SparkConf, SparkContext}

/**
 * Created by Administrator on 2015/8/23.
 */
object Scala_Test {
  def main(args: Array[String]): Unit = {
    if (args.length != 2) {
      System.err.println("Usage:Scala_Test <input> <output>")
      return // added: stop here instead of failing later on args(0)
    }
    // Initialize SparkConf and SparkContext
    val conf = new SparkConf().setAppName("Scala filter")
    val sc = new SparkContext(conf)

    // Read the input data
    val lines = sc.textFile(args(0))

    // Transformations
    val errorsRDD = lines.filter(line => line.contains("ERROR"))
    val warningsRDD = lines.filter(line => line.contains("WARN"))
    val badLinesRDD = errorsRDD.union(warningsRDD)

    // Write the result
    badLinesRDD.saveAsTextFile(args(1))

    // Stop the SparkContext
    sc.stop()
  }
}
```

Build it into a jar with IntelliJ IDEA for later use (I named mine spark_filter.jar);
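One detail of the filter above is worth illustrating: `union` on RDDs does not remove duplicates, so a line containing both "ERROR" and "WARN" ends up in the output twice. The following plain-Java sketch (no Spark involved; the class name `LogLineFilter` and the sample lines are made up for illustration) shows the same rule written as a single predicate, which keeps each matching line once. It is only a sketch of the idea, not a replacement for the Scala job.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

/** Plain-Java illustration of the filtering rule; hypothetical helper, no Spark involved. */
class LogLineFilter {

    /** True when the line contains ERROR or WARN (the same substrings Scala_Test looks for). */
    static boolean isBadLine(String line) {
        return line.contains("ERROR") || line.contains("WARN");
    }

    /** Keeps each matching line exactly once, preserving the input order. */
    static List<String> badLines(List<String> lines) {
        return lines.stream()
                .filter(LogLineFilter::isBadLine)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Made-up sample lines; the last one matches both substrings.
        List<String> sample = Arrays.asList(
                "15/08/25 INFO started",
                "15/08/25 WARN disk almost full",
                "15/08/25 ERROR failed after WARN threshold");
        badLines(sample).forEach(System.out::println);
    }
}
```

In the Scala job the equivalent change would be a single `filter` with both conditions joined by `||`.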

2. Java calls Scala_Test in spark_filter.jar, using Spark standalone mode.

The Java code is as follows:

```java
package test;

import java.text.SimpleDateFormat;
import java.util.Date;

import org.apache.spark.deploy.SparkSubmit;

/**
 * @author fansy
 */
public class SubmitScalaJobToSpark {

    public static void main(String[] args) {
        // HH (24-hour clock) instead of hh, so morning and afternoon runs get different names
        SimpleDateFormat dateFormat = new SimpleDateFormat("yyyy-MM-dd-HH-mm-ss");
        String filename = dateFormat.format(new Date());
        // Classpath root of the project, expected to end with WEB-INF/classes/
        String tmp = Thread.currentThread().getContextClassLoader().getResource("").getPath();
        // Drop the trailing "classes/" (8 characters) to get the WEB-INF/ directory
        tmp = tmp.substring(0, tmp.length() - 8);
        String[] arg0 = new String[]{
                "--master", "spark://node101:7077",
                "--deploy-mode", "client",
                "--name", "test java submit job to spark",
                "--class", "Scala_Test",
                "--executor-memory", "1G",
                // "spark_filter.jar",
                tmp + "lib/spark_filter.jar",
                "hdfs://node101:8020/user/root/log.txt",
                "hdfs://node101:8020/user/root/badLines_spark_" + filename
        };

        SparkSubmit.main(arg0);
    }
}
```

In practice: create a new Java web project in MyEclipse, then copy spark_filter.jar and spark-assembly-1.4.1-hadoop2.6.0.jar into WebRoot/WEB-INF/lib. The assembly jar is in the lib directory of the Spark distribution; it is large, so copying takes a while. (Note: you can create the Java web project right away. To test the plain Java call, run the Java class directly; to test the web project, start Tomcat.)
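The argument array handed to `SparkSubmit.main` follows the same layout as a `spark-submit` command line: options first, then the application jar, then the application's own arguments. As a small sketch of that layout, the hypothetical helper below (`SubmitArgs` is not part of the original project) builds the same array from parameters, so the master URL, jar path and HDFS paths are not hard-coded. It depends only on the JDK.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;

/** Hypothetical helper that assembles the arguments used in SubmitScalaJobToSpark. */
class SubmitArgs {

    /** Timestamp suffix for the output directory; HH is the 24-hour clock. */
    static String timestamp(Date when) {
        return new SimpleDateFormat("yyyy-MM-dd-HH-mm-ss", Locale.ROOT).format(when);
    }

    /**
     * Options first, then the application jar, then the two arguments
     * Scala_Test expects (input path, output path).
     */
    static String[] standalone(String master, String appJar,
                               String input, String outputPrefix, Date when) {
        return new String[]{
                "--master", master,
                "--deploy-mode", "client",
                "--name", "test java submit job to spark",
                "--class", "Scala_Test",
                "--executor-memory", "1G",
                appJar,
                input,
                outputPrefix + timestamp(when)
        };
    }
}
```

With the values from this article, the call would be `SubmitArgs.standalone("spark://node101:7077", tmp + "lib/spark_filter.jar", "hdfs://node101:8020/user/root/log.txt", "hdfs://node101:8020/user/root/badLines_spark_", new Date())`, and the result can be passed to `SparkSubmit.main`.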

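Java calls Scala_Test in spark_filter.jar, using YARN mode. For YARN mode, simply changing the master to "yarn-client" or "yarn-cluster" does not work. With spark-shell or spark-submit that setting is enough as long as HADOOP_CONF_DIR is configured, but here the Hadoop configuration cannot be read, so a different approach is used: submitting through yarn.Client. The Java code is as follows: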

```java
package test;

import java.text.SimpleDateFormat;
import java.util.Date;

import org.apache.hadoop.conf.Configuration;
import org.apache.spark.SparkConf;
import org.apache.spark.deploy.yarn.Client;
import org.apache.spark.deploy.yarn.ClientArguments;

public class SubmitScalaJobToYarn {

    public static void main(String[] args) {
        // HH (24-hour clock) instead of hh, so morning and afternoon runs get different names
        SimpleDateFormat dateFormat = new SimpleDateFormat("yyyy-MM-dd-HH-mm-ss");
        String filename = dateFormat.format(new Date());
        // Classpath root of the project, expected to end with WEB-INF/classes/
        String tmp = Thread.currentThread().getContextClassLoader().getResource("").getPath();
        // Drop the trailing "classes/" (8 characters) to get the WEB-INF/ directory
        tmp = tmp.substring(0, tmp.length() - 8);
        String[] arg0 = new String[]{
                "--name", "test java submit job to yarn",
                "--class", "Scala_Test",
                "--executor-memory", "1G",
                // "WebRoot/WEB-INF/lib/spark_filter.jar",
                "--jar", tmp + "lib/spark_filter.jar",

                "--arg", "hdfs://node101:8020/user/root/log.txt",
                "--arg", "hdfs://node101:8020/user/root/badLines_yarn_" + filename,
                "--addJars", "hdfs://node101:8020/user/root/servlet-api.jar",
                "--archives", "hdfs://node101:8020/user/root/servlet-api.jar"
        };

        // SparkSubmit.main(arg0);
        Configuration conf = new Configuration();
        String os = System.getProperty("os.name");
        boolean cross_platform = false;
        if (os.contains("Windows")) {
            cross_platform = true;
        }
        conf.setBoolean("mapreduce.app-submission.cross-platform", cross_platform); // enable cross-platform submission
        conf.set("fs.defaultFS", "hdfs://node101:8020"); // NameNode
        conf.set("mapreduce.framework.name", "yarn"); // use the YARN framework
        conf.set("yarn.resourcemanager.address", "node101:8032"); // ResourceManager
        conf.set("yarn.resourcemanager.scheduler.address", "node101:8030"); // scheduler
        conf.set("mapreduce.jobhistory.address", "node101:10020");

        System.setProperty("SPARK_YARN_MODE", "true");

        SparkConf sparkConf = new SparkConf();
        ClientArguments cArgs = new ClientArguments(arg0, sparkConf);

        new Client(cArgs, conf, sparkConf).run();
    }
}
```

The SparkServlet is as follows:

```java
package servlet;

import java.io.IOException;
import java.io.PrintWriter;

import javax.servlet.ServletException;
import javax.servlet.http.HttpServlet;
import javax.servlet.http.HttpServletRequest;
import javax.servlet.http.HttpServletResponse;

import test.SubmitScalaJobToSpark;

public class SparkServlet extends HttpServlet {

    /**
     * Constructor of the object.
     */
    public SparkServlet() {
        super();
    }

    /**
     * Destruction of the servlet. <br>
     */
    public void destroy() {
        super.destroy(); // Just puts "destroy" string in log
        // Put your code here
    }

    /**
     * The doGet method of the servlet. <br>
     *
     * This method is called when a form has its tag value method equals to get.
     *
     * @param request the request send by the client to the server
     * @param response the response send by the server to the client
     * @throws ServletException if an error occurred
     * @throws IOException if an error occurred
     */
    public void doGet(HttpServletRequest request, HttpServletResponse response)
            throws ServletException, IOException {

        this.doPost(request, response);
    }

    /**
     * The doPost method of the servlet. <br>
     *
     * This method is called when a form has its tag value method equals to post.
     *
     * @param request the request send by the client to the server
     * @param response the response send by the server to the client
     * @throws ServletException if an error occurred
     * @throws IOException if an error occurred
     */
    public void doPost(HttpServletRequest request, HttpServletResponse response)
            throws ServletException, IOException {
        System.out.println("Starting the SubmitScalaJobToSpark call......");
        SubmitScalaJobToSpark.main(null);
        // YarnServlet differs only in this call
        System.out.println("Finished the SubmitScalaJobToSpark call!");
        response.setContentType("text/html");
        PrintWriter out = response.getWriter();
        out.println("<!DOCTYPE HTML PUBLIC \"-//W3C//DTD HTML 4.01 Transitional//EN\">");
        out.println("<HTML>");
        out.println(" <HEAD><TITLE>A Servlet</TITLE></HEAD>");
        out.println(" <BODY>");
        out.print(" This is ");
        out.print(this.getClass());
        out.println(", using the POST method");
        out.println(" </BODY>");
        out.println("</HTML>");
        out.flush();
        out.close();
    }

    /**
     * Initialization of the servlet. <br>
     *
     * @throws ServletException if an error occurs
     */
    public void init() throws ServletException {
        // Put your code here
    }

}
```

The servlet does nothing more than call the Java job-submission class. SparkServlet and YarnServlet differ only in which class they call.
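Because `doPost` calls the submission class directly, the HTTP request stays open until the Spark job has finished. One possible variation, not used in the original project, is to hand the call to a background thread and answer the request immediately. The sketch below uses only `java.util.concurrent`; the class name `BackgroundSubmitter` is hypothetical.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Hypothetical helper: runs submission calls one at a time on a background thread. */
class BackgroundSubmitter {

    // A single worker thread, so queued jobs are submitted sequentially.
    private final ExecutorService pool = Executors.newSingleThreadExecutor();

    /** Queues the job and returns at once; the Future can be polled for completion. */
    Future<?> submit(Runnable job) {
        return pool.submit(job);
    }

    /** Lets queued jobs finish, then releases the worker thread. */
    void shutdown() {
        pool.shutdown();
    }
}
```

Inside a servlet this could be used as `submitter.submit(() -> SubmitScalaJobToSpark.main(null));`, with `shutdown()` called from the servlet's `destroy()` method. Reporting the job result back to the user would need extra work that this sketch does not cover.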

For the web tests, first test directly in MyEclipse, then copy the project's WebRoot to the CentOS 7 machine, start Tomcat there, and test again.

3. 总结及问题1. 测试结果:

1> java代码直接提交任务到Spark和Yarn,进行日志文件的过滤,测试是成功运行的。可以在Yarn和Spark的监控中看到相关信息:

同时,在HDFS可以看到输出的文件:

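2> To submit the job from the Java web application to Spark and YARN, the javax.servlet folder must first be deleted from spark-assembly-1.4.1-hadoop2.6.0.jar, because it conflicts with Tomcat's servlet-api.jar.

a. Starting Tomcat on Windows and on Linux and submitting the job to Spark standalone: the test ran successfully;

b. Starting Tomcat on Windows and on Linux and submitting the job to YARN: the test failed;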
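The article does not say which tool was used to delete the javax.servlet folder from the assembly jar. As one way to do it, the sketch below copies a jar entry by entry with `java.util.zip` and skips every entry under a given prefix. The class name `JarStripper` and its method are hypothetical, and the sketch has not been run against the real Spark assembly jar.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.zip.ZipEntry;
import java.util.zip.ZipInputStream;
import java.util.zip.ZipOutputStream;

/** Hypothetical helper: writes a copy of a jar without the entries under a prefix. */
class JarStripper {

    /**
     * Copies source to target, skipping every entry whose name starts with
     * prefix (for example "javax/servlet/"). Returns the number of skipped entries.
     */
    static int copyWithout(Path source, Path target, String prefix) throws IOException {
        int skipped = 0;
        byte[] buffer = new byte[8192];
        try (ZipInputStream in = new ZipInputStream(Files.newInputStream(source));
             ZipOutputStream out = new ZipOutputStream(Files.newOutputStream(target))) {
            ZipEntry entry;
            while ((entry = in.getNextEntry()) != null) {
                if (entry.getName().startsWith(prefix)) {
                    skipped++; // leave this entry out of the copy
                    continue;
                }
                // Fresh entry with the same name; contents are recompressed on write.
                out.putNextEntry(new ZipEntry(entry.getName()));
                int n;
                while ((n = in.read(buffer)) != -1) {
                    out.write(buffer, 0, n);
                }
                out.closeEntry();
            }
        }
        return skipped;
    }
}
```

A call such as `JarStripper.copyWithout(original, stripped, "javax/servlet/")` leaves the original jar untouched, so the unmodified assembly stays available for the plain Java tests.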

2. Problems encountered:

1> Submitting the job from the Java web application to YARN fails. The key lines of the failure log are:

```
15/08/25 11:35:48 ERROR yarn.ApplicationMaster: User class threw exception: java.lang.NoClassDefFoundError: javax/servlet/http/HttpServletResponse
java.lang.NoClassDefFoundError: javax/servlet/http/HttpServletResponse
```

This happens because the javax.servlet package was deleted from the assembly jar to avoid the conflict with Tomcat.

The log also shows:

```
15/08/26 12:39:27 INFO Client: Setting up container launch context for our AM
15/08/26 12:39:27 INFO Client: Preparing resources for our AM container
15/08/26 12:39:27 INFO Client: Uploading resource file:/D:/workspase_scala/SparkWebTest/WebRoot/WEB-INF/lib/spark-assembly-1.4.1-hadoop2.6.0.jar -> hdfs://node101:8020/user/Administrator/.sparkStaging/application_1440464833795_0012/spark-assembly-1.4.1-hadoop2.6.0.jar
15/08/26 12:39:32 INFO Client: Uploading resource file:/D:/workspase_scala/SparkWebTest/WebRoot/WEB-INF/lib/spark_filter.jar -> hdfs://node101:8020/user/Administrator/.sparkStaging/application_1440464833795_0012/spark_filter.jar
15/08/26 12:39:33 INFO Client: Uploading resource file:/C:/Users/Administrator/AppData/Local/Temp/spark-46820caf-06e0-4c51-a479-3bb35666573f/__hadoop_conf__5465819424276830228.zip -> hdfs://node101:8020/user/Administrator/.sparkStaging/application_1440464833795_0012/__hadoop_conf__5465819424276830228.zip
15/08/26 12:39:33 INFO Client: Source and destination file systems are the same. Not copying hdfs://node101:8020/user/root/servlet-api.jar
15/08/26 12:39:33 WARN Client: Resource hdfs://node101:8020/user/root/servlet-api.jar added multiple times to distributed cache.
```

During environment initialization two jars are uploaded: spark-assembly-1.4.1-hadoop2.6.0.jar and our own jar. The uploaded spark-assembly-1.4.1-hadoop2.6.0.jar no longer contains the javax.servlet folder, which is why the error is raised. When the job is submitted directly from Java (before javax.servlet was deleted), the same log lines and the same uploads appear and the job succeeds, which confirms that the failure comes from deleting that package folder. So how can it be fixed?

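I uploaded servlet-api.jar to HDFS and added the matching parameters when submitting through yarn.Client. Of the available parameters, two looked relevant: --addJars and --archives. With both added, the log shows that the jar really is registered as a shared file of the job, but submitting from the Java web application to YARN still fails with the same class-not-found error. So this approach does not work either!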
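When chasing a `NoClassDefFoundError` like this one, it helps to check which local jars actually contain the missing class before they are uploaded. The sketch below does that with `java.util.jar.JarFile`; the class name `JarClassFinder` is hypothetical, and it only works on jars on the local file system, not on `hdfs://` paths.

```java
import java.io.IOException;
import java.util.jar.JarFile;

/** Hypothetical helper: tells whether a local jar contains a given class. */
class JarClassFinder {

    /** javax.servlet.http.HttpServletResponse -> javax/servlet/http/HttpServletResponse.class */
    static String entryNameOf(String className) {
        return className.replace('.', '/') + ".class";
    }

    /** True when the jar at jarPath has an entry for the fully qualified class name. */
    static boolean contains(String jarPath, String className) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.getEntry(entryNameOf(className)) != null;
        }
    }
}
```

For example, `JarClassFinder.contains(path, "javax.servlet.http.HttpServletResponse")` can be run against both the stripped assembly jar and servlet-api.jar to see which of them provides the class.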

For submitting jobs to YARN through yarn.Client, see http://blog.sequenceiq.com/blog/2014/08/22/spark-submit-in-java/ .
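As noted earlier, spark-submit with HADOOP_CONF_DIR set does work from the command line. One alternative that the article did not try is therefore to start spark-submit as a child process from Java, so that the job never runs inside Tomcat's class loader. The sketch below only builds the `ProcessBuilder`. It assumes a Linux host with Spark installed next to Tomcat; the class name `SparkSubmitProcess` and the example paths are hypothetical, and whether this avoids the servlet conflict in this setup is untested.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper: prepares a spark-submit child process for yarn-client mode. */
class SparkSubmitProcess {

    /**
     * sparkHome and hadoopConfDir are assumed local directories, for example
     * /opt/spark and /etc/hadoop/conf; appArgs are passed to Scala_Test.
     */
    static ProcessBuilder build(String sparkHome, String hadoopConfDir,
                                String appJar, String... appArgs) {
        List<String> command = new ArrayList<>(Arrays.asList(
                sparkHome + "/bin/spark-submit",
                "--master", "yarn-client",
                "--class", "Scala_Test",
                "--executor-memory", "1G",
                appJar));
        command.addAll(Arrays.asList(appArgs));

        ProcessBuilder builder = new ProcessBuilder(command);
        // spark-submit reads the cluster settings from this directory.
        builder.environment().put("HADOOP_CONF_DIR", hadoopConfDir);
        // Send the child's output to the parent's console (Tomcat's log).
        builder.inheritIO();
        return builder;
    }
}
```

Calling `build(...).start()` launches the process and `waitFor()` on the returned `Process` blocks until spark-submit exits. The cost of this approach is that the machine running Tomcat needs its own Spark installation.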

Share, grow, enjoy

Stay grounded, stay focused

When reposting, please credit the blog: http://blog.csdn.net/fansy1990
