hadoop: HDFS API examples


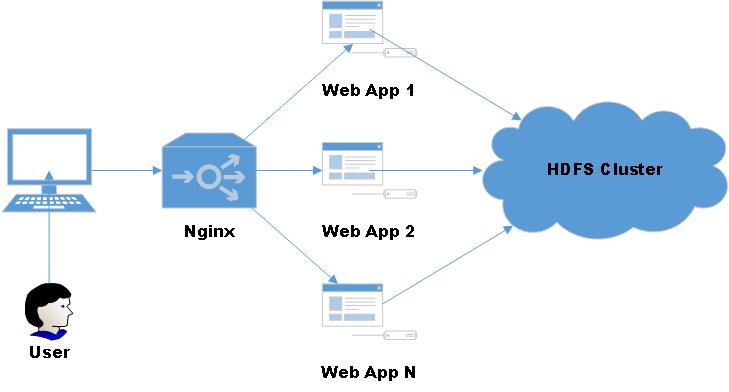

The HDFS API lets you read and write files and directories on HDFS. The same API is enough to build a simple knock-off cloud drive (see the screenshot):

For convenience, the common file read and write operations are wrapped in a utility class:

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import org.apache.hadoop.io.IOUtils;

import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;

/**
 * HDFS utility class
 * Author: 菩提树下的杨过(http://yjmyzz.cnblogs.com)
 * Since: 2015-05-21
 *
 * Note: FileSystem.get(conf) returns a cached instance that is shared by all
 * callers using the same URI and user, so the close() calls below also close
 * it for them. That is fine for a single-threaded demo like this one.
 */
public class HDFSUtil {

    private HDFSUtil() {
    }

    /**
     * Checks whether a path exists.
     */
    public static boolean exits(Configuration conf, String path) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        return fs.exists(new Path(path));
    }

    /**
     * Creates a file from raw bytes.
     */
    public static void createFile(Configuration conf, String filePath, byte[] contents) throws IOException {
        try (FileSystem fs = FileSystem.get(conf);
             FSDataOutputStream outputStream = fs.create(new Path(filePath))) {
            outputStream.write(contents);
        }
    }

    /**
     * Creates a file from a string (encoded as UTF-8).
     */
    public static void createFile(Configuration conf, String filePath, String fileContent) throws IOException {
        createFile(conf, filePath, fileContent.getBytes(StandardCharsets.UTF_8));
    }

    /**
     * Uploads a local file to HDFS, overwriting the target if it exists.
     * The local source file is deleted after the copy.
     */
    public static void copyFromLocalFile(Configuration conf, String localFilePath, String remoteFilePath) throws IOException {
        try (FileSystem fs = FileSystem.get(conf)) {
            // arguments: delSrc = true, overwrite = true
            fs.copyFromLocalFile(true, true, new Path(localFilePath), new Path(remoteFilePath));
        }
    }

    /**
     * Deletes a directory or file.
     *
     * @param recursive whether to delete the contents of a directory as well
     * @return true if the delete succeeded
     */
    public static boolean deleteFile(Configuration conf, String remoteFilePath, boolean recursive) throws IOException {
        try (FileSystem fs = FileSystem.get(conf)) {
            return fs.delete(new Path(remoteFilePath), recursive);
        }
    }

    /**
     * Deletes a directory or file (subdirectories are deleted recursively).
     */
    public static boolean deleteFile(Configuration conf, String remoteFilePath) throws IOException {
        return deleteFile(conf, remoteFilePath, true);
    }

    /**
     * Renames a file.
     */
    public static boolean renameFile(Configuration conf, String oldFileName, String newFileName) throws IOException {
        try (FileSystem fs = FileSystem.get(conf)) {
            return fs.rename(new Path(oldFileName), new Path(newFileName));
        }
    }

    /**
     * Creates a directory (including any missing parents).
     */
    public static boolean createDirectory(Configuration conf, String dirName) throws IOException {
        try (FileSystem fs = FileSystem.get(conf)) {
            return fs.mkdirs(new Path(dirName));
        }
    }

    /**
     * Lists all files (directories excluded) under the given path.
     * The caller owns fs and must keep it open while iterating.
     *
     * @param fs        an open FileSystem
     * @param basePath  the path to list
     * @param recursive whether to descend into subdirectories
     */
    public static RemoteIterator<LocatedFileStatus> listFiles(FileSystem fs, String basePath, boolean recursive) throws IOException {
        return fs.listFiles(new Path(basePath), recursive);
    }

    /**
     * Lists the files under the given path (non-recursive).
     * The FileSystem is deliberately left open: the returned iterator fetches
     * entries lazily and fails once the FileSystem has been closed.
     */
    public static RemoteIterator<LocatedFileStatus> listFiles(Configuration conf, String basePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        return fs.listFiles(new Path(basePath), false);
    }

    /**
     * Lists the files and subdirectories of the given directory (non-recursive).
     */
    public static FileStatus[] listStatus(Configuration conf, String dirPath) throws IOException {
        try (FileSystem fs = FileSystem.get(conf)) {
            return fs.listStatus(new Path(dirPath));
        }
    }

    /**
     * Reads a whole file and returns its content decoded as UTF-8.
     */
    public static String readFile(Configuration conf, String filePath) throws IOException {
        try (FileSystem fs = FileSystem.get(conf);
             InputStream inputStream = fs.open(new Path(filePath));
             ByteArrayOutputStream outputStream = new ByteArrayOutputStream()) {
            // 'false': leave closing the streams to try-with-resources
            IOUtils.copyBytes(inputStream, outputStream, conf, false);
            return new String(outputStream.toByteArray(), StandardCharsets.UTF_8);
        }
    }
}
```
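`createFile` turns a string into bytes and `readFile` turns bytes back into a string, so the two sides have to agree on a charset. The sketch below uses only the JDK (no HDFS involved) to show that round trip with an explicit UTF-8 charset. The class `CharsetRoundTrip` and its methods are made up for illustration and are not part of `HDFSUtil`.

```java
import java.nio.charset.StandardCharsets;

public class CharsetRoundTrip {

    // Encode text the way a writer would before handing bytes to an output stream.
    public static byte[] encode(String text) {
        return text.getBytes(StandardCharsets.UTF_8);
    }

    // Decode bytes the way a reader would after draining an input stream.
    public static String decode(byte[] bytes) {
        return new String(bytes, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String content = "Hi,hadoop. I love you";
        byte[] bytes = encode(content);
        System.out.println(bytes.length);                  // 21: one byte per ASCII character
        System.out.println(decode(bytes).equals(content)); // true

        // Two CJK characters, written as escapes so the source file encoding does not matter.
        // Each takes three bytes in UTF-8.
        System.out.println(encode("\u4e91\u76d8").length); // 6
    }
}
```

The 21 bytes match the `length=21` reported for `/test/myfile.txt` in the output further down. Calling `String.getBytes()` with no argument uses the platform default charset, which may differ between the machine that writes a file and the machine that reads it.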

A quick test:

```java
@Test
public void test() throws IOException {
    Configuration conf = new Configuration();
    String newDir = "/test";
    // 01. Check whether the path exists
    if (HDFSUtil.exits(conf, newDir)) {
        System.out.println(newDir + " already exists!");
    } else {
        // 02. Create the directory
        boolean result = HDFSUtil.createDirectory(conf, newDir);
        if (result) {
            System.out.println(newDir + " created successfully!");
        } else {
            System.out.println(newDir + " could not be created!");
        }
    }
    String fileContent = "Hi,hadoop. I love you";
    String newFileName = newDir + "/myfile.txt";

    // 03. Create a file
    HDFSUtil.createFile(conf, newFileName, fileContent);
    System.out.println(newFileName + " created successfully");

    // 04. Read the file content
    System.out.println(newFileName + " contains:\n" + HDFSUtil.readFile(conf, newFileName));

    // 05. List everything directly under the root
    FileStatus[] dirs = HDFSUtil.listStatus(conf, "/");
    System.out.println("--All subdirectories under the root---");
    for (FileStatus s : dirs) {
        System.out.println(s);
    }

    // 06. List all files, recursively
    FileSystem fs = FileSystem.get(conf);
    RemoteIterator<LocatedFileStatus> files = HDFSUtil.listFiles(fs, "/", true);
    System.out.println("--All files under the root---");
    while (files.hasNext()) {
        System.out.println(files.next());
    }
    fs.close();

    // Delete test: removes the directory and everything in it
    boolean isDeleted = HDFSUtil.deleteFile(conf, newDir);
    System.out.println(newDir + (isDeleted ? " has been deleted" : " could not be deleted"));
}
```

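Note: before running the test, put a core-site.xml file in the resources directory. Without it, a bare `new Configuration()` falls back to the local file system, so code run from the IDE does not know which HDFS to connect to.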
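For a quick experiment, one alternative to a classpath core-site.xml is to set the file system address in code. The following is a minimal sketch, assuming the Hadoop client libraries are on the classpath. The class name `ExplicitHdfsConfig` is made up, and the NameNode address is simply the one that appears in the sample output of this post, so replace it with your own.

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;

import java.io.IOException;

public class ExplicitHdfsConfig {
    public static void main(String[] args) throws IOException {
        Configuration conf = new Configuration();
        // Assumption: this address is copied from the sample output in this post;
        // use your own cluster's NameNode host and port.
        conf.set("fs.defaultFS", "hdfs://172.28.20.102:9000");

        FileSystem fs = FileSystem.get(conf);
        // Prints the URI of the file system the client resolved from the configuration.
        System.out.println(fs.getUri());
        fs.close();
    }
}
```

Keeping the address in core-site.xml is still the more convenient choice once several classes share the same configuration.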

Output:

/test already exists!

/test/myfile.txt created successfully

/test/myfile.txt contains:

Hi,hadoop. I love you

--All subdirectories under the root---

FileStatus{path=hdfs://172.28.20.102:9000/jimmy; isDirectory=true; modification_time=1432176691550; access_time=0; owner=hadoop; group=supergroup; permission=rwxrwxrwx; isSymlink=false}

FileStatus{path=hdfs://172.28.20.102:9000/test; isDirectory=true; modification_time=1432181331362; access_time=0; owner=jimmy; group=supergroup; permission=rwxr-xr-x; isSymlink=false}

FileStatus{path=hdfs://172.28.20.102:9000/user; isDirectory=true; modification_time=1431931797244; access_time=0; owner=hadoop; group=supergroup; permission=rwxr-xr-x; isSymlink=false}

--All files under the root---

LocatedFileStatus{path=hdfs://172.28.20.102:9000/jimmy/input/README.txt; isDirectory=false; length=1366; replication=1; blocksize=134217728; modification_time=1431922483851; access_time=1432174134018; owner=hadoop; group=supergroup; permission=rw-r--r--; isSymlink=false}

LocatedFileStatus{path=hdfs://172.28.20.102:9000/jimmy/output/_SUCCESS; isDirectory=false; length=0; replication=3; blocksize=134217728; modification_time=1432176692454; access_time=1432176692448; owner=jimmy; group=supergroup; permission=rw-r--r--; isSymlink=false}

LocatedFileStatus{path=hdfs://172.28.20.102:9000/jimmy/output/part-r-00000; isDirectory=false; length=1306; replication=3; blocksize=134217728; modification_time=1432176692338; access_time=1432176692182; owner=jimmy; group=supergroup; permission=rw-r--r--; isSymlink=false}

LocatedFileStatus{path=hdfs://172.28.20.102:9000/test/myfile.txt; isDirectory=false; length=21; replication=3; blocksize=134217728; modification_time=1432181331601; access_time=1432181331362; owner=jimmy; group=supergroup; permission=rw-r--r--; isSymlink=false}

/test has been deleted
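Several fields in the `FileStatus` lines above are easier to read once converted: `modification_time` and `access_time` are milliseconds since the Unix epoch, `blocksize` is in bytes, and `permission` is a symbolic `rwx` string. The sketch below decodes them with the JDK only. The class `FileStatusFields` and its helper methods are hypothetical and not part of the Hadoop API, and `toOctal` assumes a plain nine-character string with no sticky bit.

```java
import java.time.Instant;

public class FileStatusFields {

    // modification_time / access_time: milliseconds since 1970-01-01T00:00:00Z.
    public static Instant toInstant(long epochMillis) {
        return Instant.ofEpochMilli(epochMillis);
    }

    // blocksize is reported in bytes; convert to MiB.
    public static long toMiB(long bytes) {
        return bytes / (1024L * 1024L);
    }

    // Convert a symbolic permission such as "rwxr-xr-x" to octal digits such as "755".
    public static String toOctal(String symbolic) {
        if (symbolic.length() != 9) {
            throw new IllegalArgumentException("expected 9 characters: " + symbolic);
        }
        StringBuilder octal = new StringBuilder();
        for (int group = 0; group < 3; group++) {      // owner, group, other
            int value = 0;
            for (int bit = 0; bit < 3; bit++) {        // read = 4, write = 2, execute = 1
                if (symbolic.charAt(group * 3 + bit) != '-') {
                    value |= 4 >> bit;
                }
            }
            octal.append(value);
        }
        return octal.toString();
    }

    public static void main(String[] args) {
        // Values copied from the /test entries in the output above.
        System.out.println(toInstant(1432181331362L)); // 2015-05-21T04:08:51.362Z
        System.out.println(toMiB(134217728L));         // 128
        System.out.println(toOctal("rwxr-xr-x"));      // 755
        System.out.println(toOctal("rw-r--r--"));      // 644
    }
}
```

The timestamp is printed in UTC; the local time on the cluster may differ by its time zone offset.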

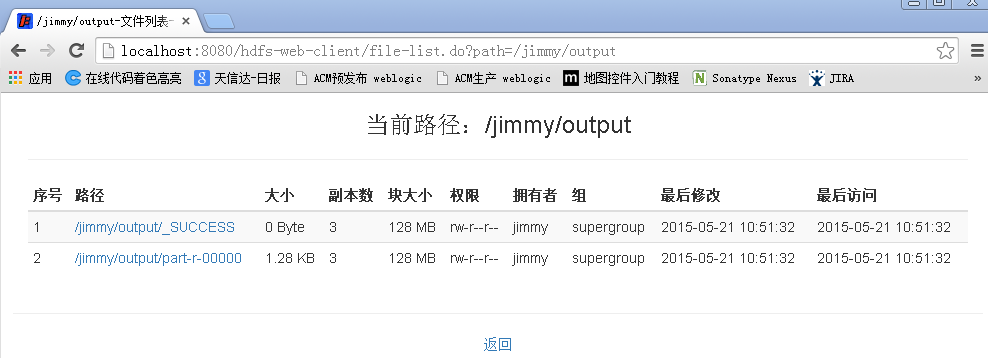

Using spring-mvc together with the HDFS API, I built a knock-off of Hadoop's file browser interface (only the file list feature is finished so far).

The source code is hosted on taobao's open-source platform for anyone who wants to refer to it.
