Storm 1.0.2 - 单词计数案例学习

来源:互联网 发布:男士 护肤品牌 知乎 编辑:程序博客网 时间:2024/06/08 03:36

转自: http://www.cnblogs.com/jonyo/p/5861171.html

单词计数拓扑WordCountTopology实现的基本功能就是不停地读入一个个句子,最后输出每个单词和数目并在终端不断的更新结果,拓扑的数据流如下:

- 语句输入Spout: 从数据源不停地读入数据,并生成一个个句子,输出的tuple格式:{"sentence":"hello world"}

- 语句分割Bolt: 将一个句子分割成一个个单词,输出的tuple格式:{"word":"hello"} {"word":"world"}

- 单词计数Bolt: 保存每个单词出现的次数,每接到上游一个tuple后,将对应的单词加1,并将该单词和次数发送到下游去,输出的tuple格式:{"hello":"1"} {"world":"3"}

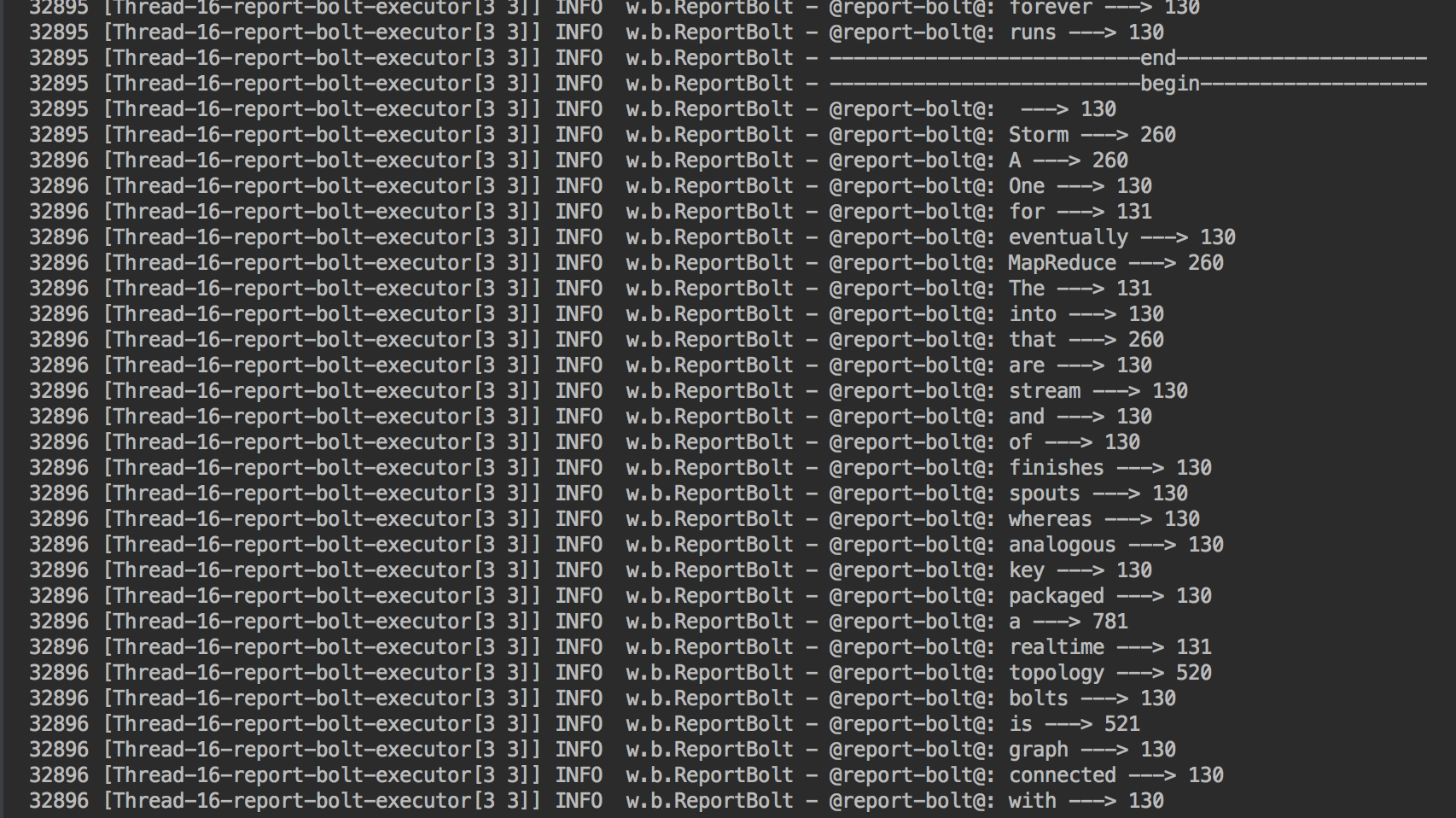

- 结果上报Bolt: 维护一份所有单词计数表,每接到上游一个tuple后,更新表中的计数数据,并在终端将结果打印出来。

开发步骤:

1.环境

- 操作系统:mac os 10.10.3

- JDK: jdk1.8.0_40

- IDE: intellij idea 15.0.3

- Maven: apache-maven-3.0.3

2.项目搭建

- 在idea新建一个maven项目工程:storm-learning

- 修改pom.xml文件,加入strom核心的依赖,配置slf4j依赖,方便Log输出

<dependencies> <dependency> <groupId>org.slf4j</groupId> <artifactId>slf4j-api</artifactId> <version>1.6.1</version> </dependency> <dependency> <groupId>org.apache.storm</groupId> <artifactId>storm-core</artifactId> <version>1.0.2</version> </dependency></dependencies>

3. Spout和Bolt组件的开发

- SentenceSpout

- SplitSentenceBolt

- WordCountBolt

- ReportBolt

SentenceSpout.java

1 public class SentenceSpout extends BaseRichSpout{ 2 3 private SpoutOutputCollector spoutOutputCollector; 4 5 //为了简单,定义一个静态数据模拟不断的数据流产生 6 private static final String[] sentences={ 7 "The logic for a realtime application is packaged into a Storm topology", 8 "A Storm topology is analogous to a MapReduce job", 9 "One key difference is that a MapReduce job eventually finishes whereas a topology runs forever",10 " A topology is a graph of spouts and bolts that are connected with stream groupings"11 };12 13 private int index=0;14 15 //初始化操作16 public void open(Map map, TopologyContext topologyContext, SpoutOutputCollector spoutOutputCollector) {17 this.spoutOutputCollector = spoutOutputCollector;18 }19 20 //核心逻辑21 public void nextTuple() {22 spoutOutputCollector.emit(new Values(sentences[index]));23 ++index;24 if(index>=sentences.length){25 index=0;26 }27 }28 29 //向下游输出30 public void declareOutputFields(OutputFieldsDeclarer outputFieldsDeclarer) {31 outputFieldsDeclarer.declare(new Fields("sentences"));32 }33 }

SplitSentenceBolt.java

1 public class SplitSentenceBolt extends BaseRichBolt{ 2 3 private OutputCollector outputCollector; 4 5 public void prepare(Map map, TopologyContext topologyContext, OutputCollector outputCollector) { 6 this.outputCollector = outputCollector; 7 } 8 9 public void execute(Tuple tuple) {10 String sentence = tuple.getStringByField("sentences");11 String[] words = sentence.split(" ");12 for(String word : words){13 outputCollector.emit(new Values(word));14 }15 }16 17 public void declareOutputFields(OutputFieldsDeclarer outputFieldsDeclarer) {18 outputFieldsDeclarer.declare(new Fields("word"));19 }20 }

WordCountBolt.java

1 public class WordCountBolt extends BaseRichBolt{ 2 3 //保存单词计数 4 private Map<String,Long> wordCount = null; 5 6 private OutputCollector outputCollector; 7 8 public void prepare(Map map, TopologyContext topologyContext, OutputCollector outputCollector) { 9 this.outputCollector = outputCollector;10 wordCount = new HashMap<String, Long>();11 }12 13 public void execute(Tuple tuple) {14 String word = tuple.getStringByField("word");15 Long count = wordCount.get(word);16 if(count == null){17 count = 0L;18 }19 ++count;20 wordCount.put(word,count);21 outputCollector.emit(new Values(word,count));22 }23 24 25 public void declareOutputFields(OutputFieldsDeclarer outputFieldsDeclarer) {26 outputFieldsDeclarer.declare(new Fields("word","count"));27 }28 }

ReportBolt.java

1 public class ReportBolt extends BaseRichBolt { 2 3 private static final Logger log = LoggerFactory.getLogger(ReportBolt.class); 4 5 private Map<String, Long> counts = null; 6 7 public void prepare(Map map, TopologyContext topologyContext, OutputCollector outputCollector) { 8 counts = new HashMap<String, Long>(); 9 }10 11 public void execute(Tuple tuple) {12 String word = tuple.getStringByField("word");13 Long count = tuple.getLongByField("count");14 counts.put(word, count);15 //打印更新后的结果16 printReport();17 }18 19 public void declareOutputFields(OutputFieldsDeclarer outputFieldsDeclarer) {20 //无下游输出,不需要代码21 }22 23 //主要用于将结果打印出来,便于观察24 private void printReport(){25 log.info("--------------------------begin-------------------");26 Set<String> words = counts.keySet();27 for(String word : words){28 log.info("@report-bolt@: " + word + " ---> " + counts.get(word));29 }30 log.info("--------------------------end---------------------");31 }32 }

4.拓扑配置

- WordCountTopology

1 public class WordCountTopology { 2 3 private static final Logger log = LoggerFactory.getLogger(WordCountTopology.class); 4 5 //各个组件名字的唯一标识 6 private final static String SENTENCE_SPOUT_ID = "sentence-spout"; 7 private final static String SPLIT_SENTENCE_BOLT_ID = "split-bolt"; 8 private final static String WORD_COUNT_BOLT_ID = "count-bolt"; 9 private final static String REPORT_BOLT_ID = "report-bolt";10 11 //拓扑名称12 private final static String TOPOLOGY_NAME = "word-count-topology";13 14 public static void main(String[] args) {15 16 log.info(".........begining.......");17 //各个组件的实例18 SentenceSpout sentenceSpout = new SentenceSpout();19 SplitSentenceBolt splitSentenceBolt = new SplitSentenceBolt();20 WordCountBolt wordCountBolt = new WordCountBolt();21 ReportBolt reportBolt = new ReportBolt();22 23 //构建一个拓扑Builder24 TopologyBuilder topologyBuilder = new TopologyBuilder();25 26 //配置第一个组件sentenceSpout27 topologyBuilder.setSpout(SENTENCE_SPOUT_ID, sentenceSpout, 2);28 29 //配置第二个组件splitSentenceBolt,上游为sentenceSpout,tuple分组方式为随机分组shuffleGrouping30 topologyBuilder.setBolt(SPLIT_SENTENCE_BOLT_ID, splitSentenceBolt).shuffleGrouping(SENTENCE_SPOUT_ID);31 32 //配置第三个组件wordCountBolt,上游为splitSentenceBolt,tuple分组方式为fieldsGrouping,同一个单词将进入同一个task中(bolt实例)33 topologyBuilder.setBolt(WORD_COUNT_BOLT_ID, wordCountBolt).fieldsGrouping(SPLIT_SENTENCE_BOLT_ID, new Fields("word"));34 35 //配置最后一个组件reportBolt,上游为wordCountBolt,tuple分组方式为globalGrouping,即所有的tuple都进入这一个task中36 topologyBuilder.setBolt(REPORT_BOLT_ID, reportBolt).globalGrouping(WORD_COUNT_BOLT_ID);37 38 Config config = new Config();39 40 //建立本地集群,利用LocalCluster,storm在程序启动时会在本地自动建立一个集群,不需要用户自己再搭建,方便本地开发和debug41 LocalCluster cluster = new LocalCluster();42 43 //创建拓扑实例,并提交到本地集群进行运行44 cluster.submitTopology(TOPOLOGY_NAME, config, topologyBuilder.createTopology());45 }46 }

5.拓扑执行

- 方法一:通过IDEA执行

在idea中对代码进行编译compile,然后run;

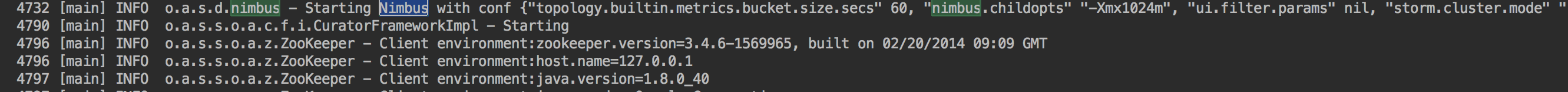

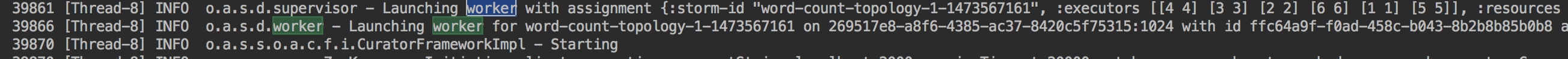

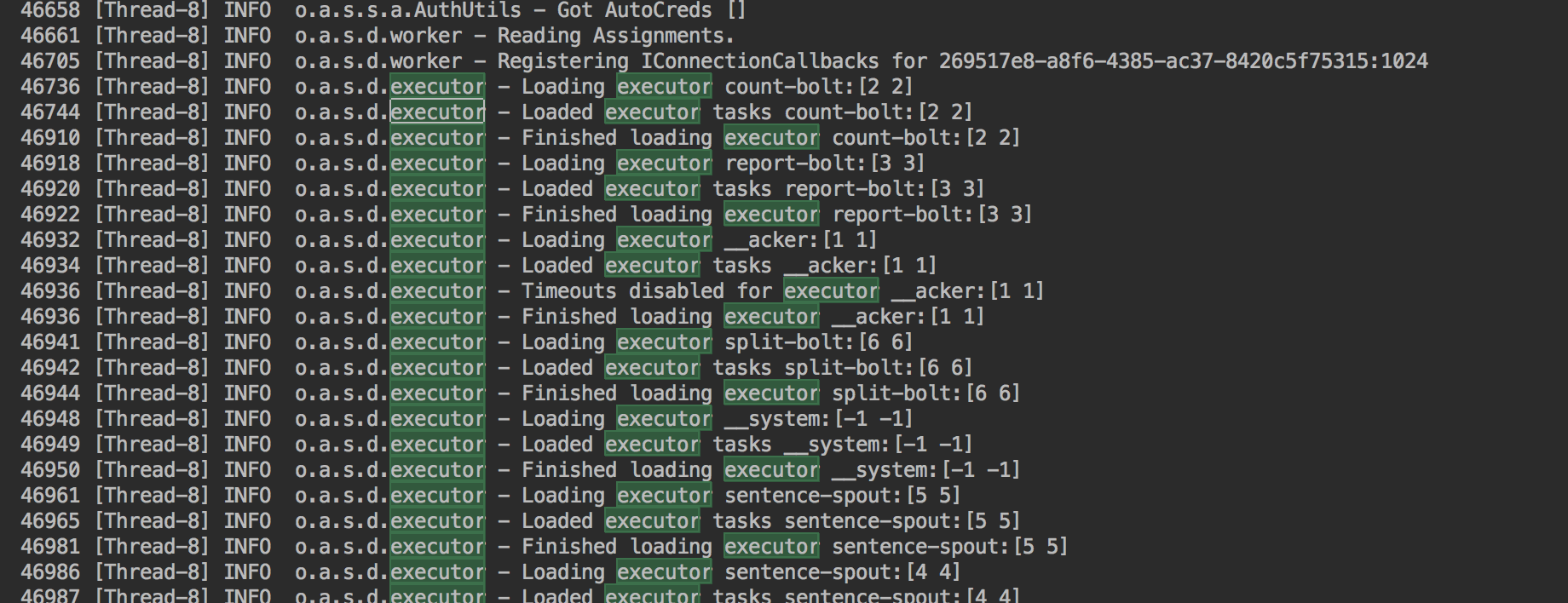

观察控制台输出会发现,storm首先在本地自动建立了运行环境,即启动了zookepeer,接着启动nimbus,supervisor;然后nimbus将提交的topology进行分发到supervisor,supervisor启动woker进程,woker进程里利用Executor来运行topology的组件(spout和bolt);最后在控制台发现不断的输出单词计数的结果。

zookepeer的连接建立

nimbus启动

supervisor启动

worker启动

Executor启动执行

结果输出

- 方法二:通过maven来执行

- 进入到该项目的主目录下:storm-learning

- mvn compile 进行代码编译,保证代码编译通过

- 通过mvn执行程序:

mvn exec:java -Dexec.mainClass="wordCount.WordCountTopology" - 控制台输出的结果跟方法一一致

其他资料:

http://www.cnblogs.com/jonyo/p/5861125.html

http://www.cnblogs.com/jonyo/p/5861835.html

0 0

- Storm 1.0.2 - 单词计数案例学习

- storm两个案例(1单词计数本地执行 2累加集群执行 3集群关闭storm任务写法)

- storm单词计数实例

- Storm分布式单词计数

- Storm实例:实时单词计数

- storm单词计数 本地执行

- storm trident实现单词计数

- storm详解:第一章 storm分布式单词计数

- storm详解: storm分布式单词计数

- Storm入门实例之单词计数

- Storm集群部署与单词计数程序

- Linux 单词计数 WordCount 以及代码案例

- 【Storm】storm安装、配置、使用以及Storm单词计数程序的实例分析

- 【Storm】storm安装、配置、使用以及Storm单词计数程序的实例分析

- 磁盘存储分布不均与单词计数案例

- Spark学习总结一 单词计数

- 单词计数

- 单词计数

- Android MD5

- Spring Boot / Spring MVC 入门实践 (四) :需求记录网站的实现

- 优秀的自学机器学习课程

- 遍历HashMap和ArrayList

- 输入一个32位的整数a,使用按位异或^运算,生成一个新的32位整数b,使得该整数b的每一位等于原整数a中该位左右两边两个bit位的异或结果

- Storm 1.0.2 - 单词计数案例学习

- linux通信 --- 消息队列

- 变换编码增益(Transform Coding Gain)

- CF 4D Mysterious Present

- Windows环境下的NodeJS+NPM+Bower安装配

- 三道找规律的题

- 几种排序总结(一)

- stop(true,true) jQuery多格焦点图效果

- git学习笔记 day1