Computer Vision Group, Technical University of Munich (TUM), Germany


LSD-SLAM: Large-Scale Direct Monocular SLAM

Contact: Jakob Engel, Dr. Jörg Stückler, Prof. Dr. Daniel Cremers

Check out DSO, our new Direct & Sparse Visual Odometry Method published in July 2016 here: DSO: Direct Sparse Odometry

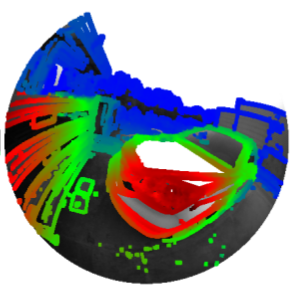

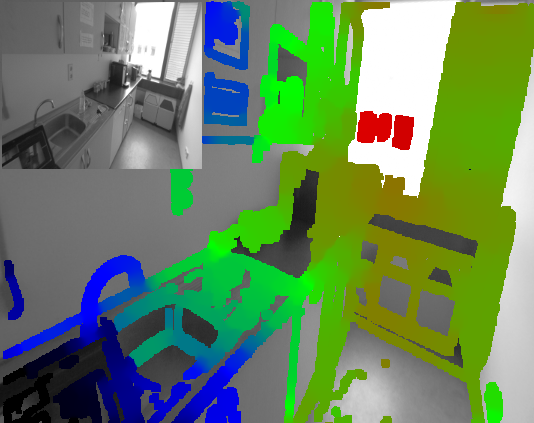

LSD-SLAM is a novel, direct monocular SLAM technique: instead of using keypoints, it operates directly on image intensities for both tracking and mapping. The camera is tracked using direct image alignment, while geometry is estimated in the form of semi-dense depth maps, obtained by filtering over many pixelwise stereo comparisons. We then build a Sim(3) pose-graph of keyframes, which allows us to build scale-drift corrected, large-scale maps including loop-closures. LSD-SLAM runs in real-time on a CPU, and even on a modern smartphone.
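To make "direct image alignment" more concrete, the following is a minimal sketch of the photometric residual that a direct method compares across frames. It is not taken from the LSD-SLAM code base: the function name, the pinhole intrinsics `K`, the pose `(R, t)` and the nearest-neighbour lookup are all assumptions chosen for illustration, and the pose optimization that would minimize these residuals is omitted.

```python
import numpy as np

def photometric_residuals(ref_img, cur_img, ref_inv_depth, K, R, t):
    """Return I_ref(p) - I_cur(warp(p)) for every reference pixel p with a depth estimate.

    Assumptions for this sketch: pinhole intrinsics K, a pose (R, t) that maps
    reference-frame points into the current frame, nearest-neighbour lookup.
    """
    h, w = cur_img.shape
    vs, us = np.nonzero(ref_inv_depth > 0)        # semi-dense: skip pixels without depth
    pix = np.stack([us, vs, np.ones_like(us)]).astype(float)    # 3xN homogeneous pixels
    pts_ref = (np.linalg.inv(K) @ pix) / ref_inv_depth[vs, us]  # back-project to 3D
    pts_cur = R @ pts_ref + t[:, None]            # rigid-body motion into the current frame
    front = pts_cur[2] > 0                        # keep points in front of the camera
    proj = K @ pts_cur[:, front]
    u2 = np.rint(proj[0] / proj[2]).astype(int)
    v2 = np.rint(proj[1] / proj[2]).astype(int)
    inside = (u2 >= 0) & (u2 < w) & (v2 >= 0) & (v2 < h)
    ref_vals = ref_img[vs[front][inside], us[front][inside]]
    cur_vals = cur_img[v2[inside], u2[inside]]
    return ref_vals - cur_vals

# Toy data (hypothetical): an 8x8 image with depth 2.0 on a 4x4 patch.
rng = np.random.default_rng(0)
ref = rng.random((8, 8))
inv_depth = np.zeros((8, 8))
inv_depth[2:6, 2:6] = 0.5
K = np.array([[10.0, 0.0, 4.0], [0.0, 10.0, 4.0], [0.0, 0.0, 1.0]])

# With the identity pose and identical images, every residual vanishes.
residuals = photometric_residuals(ref, ref, inv_depth, K, np.eye(3), np.zeros(3))
```

A tracker would search for the pose that makes these residuals as small as possible; only pixels with a depth estimate contribute, which is what makes the representation semi-dense.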

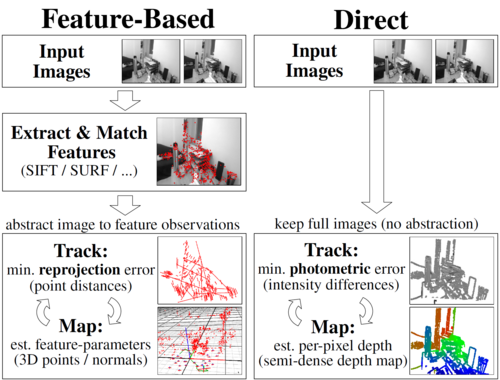

Difference to keypoint-based methods

As a direct method, LSD-SLAM uses all information in the image, including e.g. edges, while keypoint-based approaches can only use small patches around corners. This leads to higher accuracy and more robustness in sparsely textured environments (e.g. indoors), and a much denser 3D reconstruction. Further, as the proposed pixelwise depth-filters incorporate many small-baseline stereo comparisons instead of only a few large-baseline frames, there are far fewer outliers.
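The idea of filtering over many small-baseline comparisons can be illustrated with a generic Gaussian fusion step. This is a sketch under the assumption that each stereo comparison yields an inverse-depth estimate with a variance; the function name and the numbers are hypothetical, and the filter actually used by LSD-SLAM (including its outlier handling) is described in the publications rather than reproduced here.

```python
def fuse_inverse_depth(mean, var, obs, obs_var):
    """Fuse a Gaussian inverse-depth estimate with one new Gaussian observation."""
    fused_var = var * obs_var / (var + obs_var)
    fused_mean = (obs_var * mean + var * obs) / (var + obs_var)
    return fused_mean, fused_var

# Hypothetical (inverse depth, variance) pairs from successive small-baseline comparisons.
observations = [(0.52, 0.04), (0.47, 0.04), (0.50, 0.04), (0.51, 0.04)]

mean, var = observations[0]
for obs, obs_var in observations[1:]:
    mean, var = fuse_inverse_depth(mean, var, obs, obs_var)
```

Each individual comparison is noisy, but the fused variance shrinks with every additional observation, so many weak measurements can add up to a confident per-pixel estimate.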

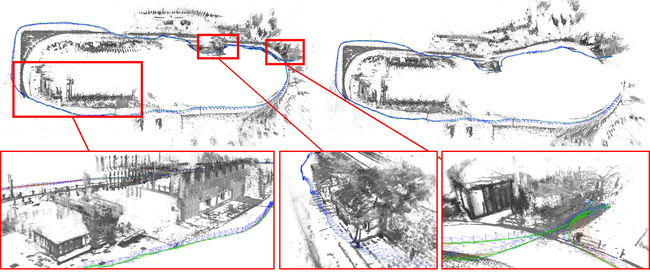

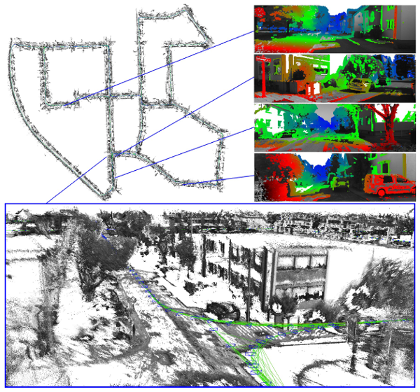

Building a global map

LSD-SLAM builds a pose-graph of keyframes, each containing an estimated semi-dense depth map. Using a novel direct image alignment formulation, we directly track Sim(3)-constraints between keyframes (i.e., rigid body motion + scale), which are used to build a pose-graph that is then optimized. This formulation allows us to detect and correct substantial scale-drift after large loop-closures, and to deal with large scale-variation within the same map.
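A Sim(3) constraint maps a point x to s·R·x + t, i.e. a rigid body motion with an additional scale factor s. The sketch below, with hypothetical helper names and made-up constraint values, composes a few such constraints around a loop to show how accumulated scale becomes visible; the pose-graph optimization that would distribute this error over the keyframes is not shown.

```python
import numpy as np

def rot_z(angle):
    """Rotation about the z-axis, used only to build example constraints."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def sim3(scale, R, t):
    """4x4 matrix of the similarity transform x -> scale * R @ x + t."""
    T = np.eye(4)
    T[:3, :3] = scale * R
    T[:3, 3] = t
    return T

def sim3_scale(T):
    """Recover the scale: det(scale * R) = scale**3 for a rotation R."""
    return float(np.cbrt(np.linalg.det(T[:3, :3])))

def sim3_inverse(T):
    """Inverse transform x -> (1/scale) * R.T @ (x - t)."""
    s = sim3_scale(T)
    R = T[:3, :3] / s
    inv = np.eye(4)
    inv[:3, :3] = R.T / s
    inv[:3, 3] = -(R.T @ T[:3, 3]) / s
    return inv

# Hypothetical keyframe-to-keyframe constraints around a loop.
edges = [
    sim3(1.00, rot_z(0.5), [1.0, 0.0, 0.0]),
    sim3(1.10, rot_z(1.0), [0.0, 2.0, 0.0]),
    sim3(1.05, rot_z(-1.5), [0.5, 0.5, 0.0]),
]

loop = np.eye(4)
for edge in edges:
    loop = loop @ edge

# In a drift-free loop this would be 1; here it is the product of the edge scales.
accumulated_scale = sim3_scale(loop)
```

Because scale is an explicit part of each constraint, a mismatch like this can be represented in the graph and corrected, which a purely rigid-body (SE(3)) formulation cannot express.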

Mobile Implementation

The approach even runs on a smartphone, where it can be used for AR. The estimated semi-dense depth maps are in-painted and completed with an estimated ground-plane, which then allows us to implement basic physical interaction with the environment.

Stereo LSD-SLAM

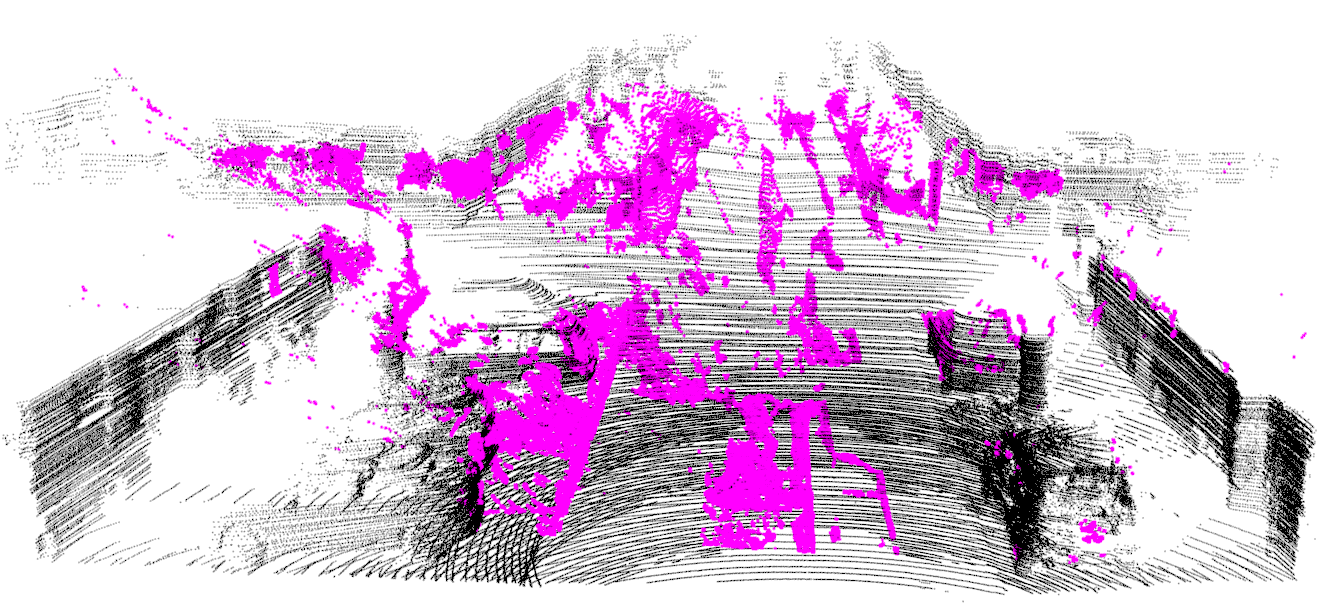

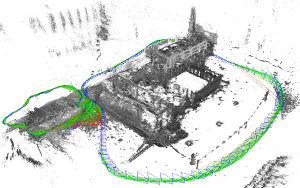

We propose a novel Large-Scale Direct SLAM algorithm for stereo cameras (Stereo LSD-SLAM) that runs in real-time at high frame rate on standard CPUs. See below for the full publication.

Omnidirectional LSD-SLAM

We propose a real-time, direct monocular SLAM method for omnidirectional or wide field-of-view fisheye cameras. Both tracking (direct image alignment) and mapping (pixel-wise distance filtering) are directly formulated for the unified omnidirectional model, which can model central imaging devices with a field of view well above 150°. The dataset used for the evaluation can be found here. See below for the full publication.

Software

LSD-SLAM is on github: http://github.com/tum-vision/lsd_slam

We support only a ROS-based build system, tested on Ubuntu 12.04 or 14.04 and ROS Indigo or Fuerte. However, ROS is only used for input (video), output (pointcloud & poses) and parameter handling; ROS-dependent code is tightly wrapped and can easily be replaced. To avoid the overhead of maintaining different build systems, however, we do not offer an out-of-the-box ROS-free version. Android-specific optimizations and AR integration are not part of the open-source release.

Detailed installation and usage instructions can be found in the README.md, including descriptions of the most important parameters. For best results, we recommend using a monochrome global-shutter camera with a fisheye lens.

If you use our code, please cite our respective publications (see below). We are excited to see what you do with LSD-SLAM; if you have nice videos, pictures, models or applications, feel free to drop us a quick note.

Datasets

To get you started, we provide some example sequences including the input video and camera calibration, the complete generated pointcloud to be displayed with the lsd_slam_viewer, as well as a (sparsified) pointcloud as .ply, which can be displayed e.g. using meshlab.

Hint: Use rosbag play -r 25 X_pc.bag while the lsd_slam_viewer is running to replay the result of real-time SLAM at 25x speed, building up the full reconstruction within seconds.

- Desk Sequence (0:55min, 640×480 @ 50fps)
  - Video: [.bag] [.png]
  - Pointcloud: [.bag] [.ply]

- Machine Sequence (2:20min, 640×480 @ 50fps)
  - Video: [.bag] [.png]
  - Pointcloud: [.bag] [.ply]

- Foodcourt Sequence (12min, 640×480 @ 50fps)
  - Video: [.bag] [.png]
  - Pointcloud: [.bag] [.ply]

- ECCV Sequence (7:00min, 640×480 @ 50fps)
  - Enable FabMap for large loop-closures for this sequence!
  - Video: [.bag] [.png]
  - Pointcloud: [.bag] [.ply]

License

LSD-SLAM is released under the GPLv3 license. A professional version under a different licensing agreement intended for commercial use is available here. Please contact us if you are interested.

Related publications

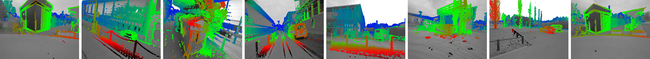

Reconstructing Street-Scenes in Real-Time From a Driving Car, In Proc. of the Int. Conference on 3D Vision (3DV), 2015. [bib] [pdf]

Large-Scale Direct SLAM for Omnidirectional Cameras, In International Conference on Intelligent Robots and Systems (IROS), 2015. [bib] [pdf] [video]

Large-Scale Direct SLAM with Stereo Cameras, In International Conference on Intelligent Robots and Systems (IROS), 2015. [bib] [pdf] [video]

Semi-Dense Visual Odometry for AR on a Smartphone, In International Symposium on Mixed and Augmented Reality, 2014. [bib] [pdf] [video] (Best Short Paper Award)

LSD-SLAM: Large-Scale Direct Monocular SLAM, In European Conference on Computer Vision (ECCV), 2014. [bib] [pdf] [video] (Oral Presentation)

Semi-Dense Visual Odometry for a Monocular Camera, In IEEE International Conference on Computer Vision (ICCV), 2013. [bib] [pdf] [video]
