Linux Memory Management Data Structures

Source: Internet  Published: web page auto-generation software  Editor: 程序博客网  Time: 2024/05/19 20:41

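1. Related concepts

UMA (Uniform Memory Access): every CPU core accesses memory at the same speed.

NUMA (Non-Uniform Memory Access): different CPUs access different memory regions at different speeds. Each CPU has its own local memory attached, so access to local memory is fastest and access to remote memory is comparatively slow.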

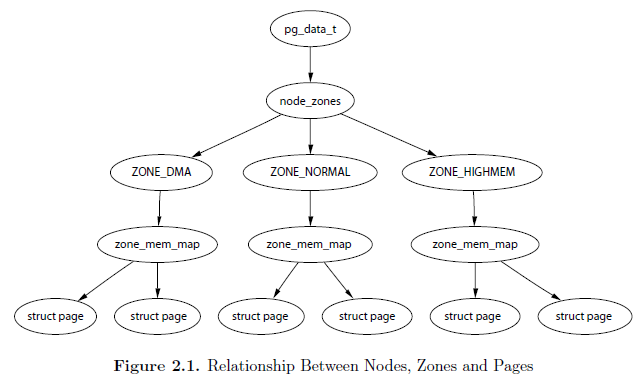

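Linux calls the part of memory in a NUMA system that has a uniform access speed a node, represented by struct pglist_data.

A node is divided into a number of zones, each represented by struct zone.

A zone contains many pages, each represented by struct page.

The relationship between these structures is shown in the figure: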
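As a rough illustration of this containment (node, then zone, then page), the sketch below models a node that holds a few zones, each covering a range of page frames. The hn_ types, HN_MAX_ZONES, and hn_node_total_pages are hypothetical, heavily simplified stand-ins and not the kernel's definitions.

```c
#define HN_MAX_ZONES 3   /* hypothetical; the kernel uses MAX_NR_ZONES */

/* Simplified stand-in for struct page: one descriptor per page frame. */
struct hn_page {
    unsigned long flags;
};

/* Simplified stand-in for struct zone: a contiguous range of page frames. */
struct hn_zone {
    const char *name;
    unsigned long start_pfn;    /* first page frame number in the zone */
    unsigned long nr_pages;     /* number of page frames in the zone */
};

/* Simplified stand-in for struct pglist_data: a node made of zones. */
struct hn_node {
    int node_id;
    int nr_zones;
    struct hn_zone zones[HN_MAX_ZONES];
};

/* Sum the page frames of every populated zone in a node. */
unsigned long hn_node_total_pages(const struct hn_node *node)
{
    unsigned long total = 0;

    for (int i = 0; i < node->nr_zones; i++)
        total += node->zones[i].nr_pages;
    return total;
}
```

A UMA machine would have a single such node; a NUMA machine would have one per group of memory with uniform access speed.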

2. Data structures in detail:

    typedef struct pglist_data {
        /* Zones of this node, typically ZONE_DMA, ZONE_NORMAL, ... */
        struct zone node_zones[MAX_NR_ZONES];
        /* Order in which zones are tried when allocating memory */
        struct zonelist node_zonelists[MAX_ZONELISTS];
        int nr_zones;                       /* number of zones in this node */
    #ifdef CONFIG_FLAT_NODE_MEM_MAP         /* means !SPARSEMEM */
        /*
         * Only present for non-sparse memory models: address of this
         * node's first page within the page array.
         */
        struct page *node_mem_map;
    #ifdef CONFIG_MEMCG
        struct page_cgroup *node_page_cgroup;
    #endif
    #endif
    #ifndef CONFIG_NO_BOOTMEM
        struct bootmem_data *bdata;
    #endif
    #ifdef CONFIG_MEMORY_HOTPLUG
        /*
         * Must be held any time you expect node_start_pfn, node_present_pages
         * or node_spanned_pages stay constant.  Holding this will also
         * guarantee that any pfn_valid() stays that way.
         *
         * pgdat_resize_lock() and pgdat_resize_unlock() are provided to
         * manipulate node_size_lock without checking for CONFIG_MEMORY_HOTPLUG.
         *
         * Nests above zone->lock and zone->span_seqlock
         */
        spinlock_t node_size_lock;
    #endif
        unsigned long node_start_pfn;       /* first page frame number of the node */
        unsigned long node_present_pages;   /* total number of physical pages actually present */
        unsigned long node_spanned_pages;   /* size of the physical page range, including holes */

        int node_id;                        /* node id */
        wait_queue_head_t kswapd_wait;
        wait_queue_head_t pfmemalloc_wait;
        struct task_struct *kswapd;         /* task descriptor of the page-out daemon (kswapd) */
        int kswapd_max_order;
        enum zone_type classzone_idx;
    #ifdef CONFIG_NUMA_BALANCING
        /* Lock serializing the migrate rate limiting window */
        spinlock_t numabalancing_migrate_lock;

        /* Rate limiting time interval */
        unsigned long numabalancing_migrate_next_window;

        /* Number of pages migrated during the rate limiting time interval */
        unsigned long numabalancing_migrate_nr_pages;
    #endif
    } pg_data_t;
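The three pfn fields above relate to each other by simple arithmetic. The following is a minimal sketch, assuming a reduced structure that carries only those three fields; pd_pgdat and the pd_ helpers are hypothetical names used for illustration.

```c
#include <stdbool.h>

/* Hypothetical, reduced view of the pfn bookkeeping in pg_data_t. */
struct pd_pgdat {
    unsigned long node_start_pfn;      /* first page frame number */
    unsigned long node_present_pages;  /* pages that really exist */
    unsigned long node_spanned_pages;  /* range size, holes included */
};

/* One past the last page frame number spanned by the node. */
unsigned long pd_end_pfn(const struct pd_pgdat *p)
{
    return p->node_start_pfn + p->node_spanned_pages;
}

/* Page frames inside the span that are memory holes. */
unsigned long pd_hole_pages(const struct pd_pgdat *p)
{
    return p->node_spanned_pages - p->node_present_pages;
}

/* Whether pfn lies inside the node's span (it may still fall in a hole). */
bool pd_spans_pfn(const struct pd_pgdat *p, unsigned long pfn)
{
    return pfn >= p->node_start_pfn && pfn < pd_end_pfn(p);
}
```

Being inside the span does not guarantee that a page frame is backed by real memory, which is why the structure tracks present and spanned pages separately.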

    struct zone {
        /* Read-mostly fields */

        /* Per-zone watermarks; memory reclaim compares against the different watermark levels */
        unsigned long watermark[NR_WMARK];

        /*
         * We don't know if the memory that we're going to allocate will be freeable
         * or/and it will be released eventually, so to avoid totally wasting several
         * GB of ram we must reserve some of the lower zone memory (otherwise we risk
         * to run OOM on the lower zones despite there's tons of freeable ram
         * on the higher zones).  This array is recalculated at runtime if the
         * sysctl_lowmem_reserve_ratio sysctl changes.
         */
        long lowmem_reserve[MAX_NR_ZONES];

    #ifdef CONFIG_NUMA
        int node;
    #endif

        /*
         * The target ratio of ACTIVE_ANON to INACTIVE_ANON pages on
         * this zone's LRU.  Maintained by the pageout code.
         */
        unsigned int inactive_ratio;

        struct pglist_data *zone_pgdat;     /* node this zone belongs to */
        struct per_cpu_pageset __percpu *pageset;

        /*
         * This is a per-zone reserve of pages that should not be
         * considered dirtyable memory.
         */
        unsigned long dirty_balance_reserve;

    #ifdef CONFIG_CMA
        bool cma_alloc;
    #endif

    #ifndef CONFIG_SPARSEMEM
        /*
         * Flags for a pageblock_nr_pages block. See pageblock-flags.h.
         * In SPARSEMEM, this map is stored in struct mem_section
         */
        unsigned long *pageblock_flags;
    #endif /* CONFIG_SPARSEMEM */

    #ifdef CONFIG_NUMA
        /*
         * zone reclaim becomes active if more unmapped pages exist.
         */
        unsigned long min_unmapped_pages;
        unsigned long min_slab_pages;
    #endif /* CONFIG_NUMA */

        /* zone_start_pfn == zone_start_paddr >> PAGE_SHIFT */
        unsigned long zone_start_pfn;

        /*
         * spanned_pages is the total pages spanned by the zone, including
         * holes, which is calculated as:
         *     spanned_pages = zone_end_pfn - zone_start_pfn;
         *
         * present_pages is physical pages existing within the zone, which
         * is calculated as:
         *     present_pages = spanned_pages - absent_pages(pages in holes);
         *
         * managed_pages is present pages managed by the buddy system, which
         * is calculated as (reserved_pages includes pages allocated by the
         * bootmem allocator):
         *     managed_pages = present_pages - reserved_pages;
         *
         * So present_pages may be used by memory hotplug or memory power
         * management logic to figure out unmanaged pages by checking
         * (present_pages - managed_pages). And managed_pages should be used
         * by page allocator and vm scanner to calculate all kinds of watermarks
         * and thresholds.
         *
         * Locking rules:
         *
         * zone_start_pfn and spanned_pages are protected by span_seqlock.
         * It is a seqlock because it has to be read outside of zone->lock,
         * and it is done in the main allocator path.  But, it is written
         * quite infrequently.
         *
         * The span_seq lock is declared along with zone->lock because it is
         * frequently read in proximity to zone->lock.  It's good to
         * give them a chance of being in the same cacheline.
         *
         * Write access to present_pages at runtime should be protected by
         * mem_hotplug_begin/end(). Any reader who can't tolerate drift of
         * present_pages should get_online_mems() to get a stable value.
         *
         * Read access to managed_pages should be safe because it's unsigned
         * long. Write access to zone->managed_pages and totalram_pages are
         * protected by managed_page_count_lock at runtime. Ideally only
         * adjust_managed_page_count() should be used instead of directly
         * touching zone->managed_pages and totalram_pages.
         */
        unsigned long managed_pages;
        unsigned long spanned_pages;        /* total pages spanned by the zone, holes included */
        unsigned long present_pages;        /* pages in the zone excluding holes */

        const char *name;                   /* zone name */

        /*
         * Number of MIGRATE_RESERVE page block. To maintain for just
         * optimization. Protected by zone->lock.
         */
        int nr_migrate_reserve_block;

    #ifdef CONFIG_MEMORY_ISOLATION
        /*
         * Number of isolated pageblock. It is used to solve incorrect
         * freepage counting problem due to racy retrieving migratetype
         * of pageblock. Protected by zone->lock.
         */
        unsigned long nr_isolate_pageblock;
    #endif

    #ifdef CONFIG_MEMORY_HOTPLUG
        /* see spanned/present_pages for more description */
        seqlock_t span_seqlock;
    #endif

        /*
         * wait_table                  -- the array holding the hash table
         * wait_table_hash_nr_entries  -- the size of the hash table array
         * wait_table_bits             -- wait_table_size == (1 << wait_table_bits)
         *
         * The purpose of all these is to keep track of the people
         * waiting for a page to become available and make them
         * runnable again when possible. The trouble is that this
         * consumes a lot of space, especially when so few things
         * wait on pages at a given time. So instead of using
         * per-page waitqueues, we use a waitqueue hash table.
         *
         * The bucket discipline is to sleep on the same queue when
         * colliding and wake all in that wait queue when removing.
         * When something wakes, it must check to be sure its page is
         * truly available, a la thundering herd. The cost of a
         * collision is great, but given the expected load of the
         * table, they should be so rare as to be outweighed by the
         * benefits from the saved space.
         *
         * __wait_on_page_locked() and unlock_page() in mm/filemap.c, are the
         * primary users of these fields, and in mm/page_alloc.c
         * free_area_init_core() performs the initialization of them.
         */
        wait_queue_head_t *wait_table;      /* wait queues of processes waiting on a page in this zone */
        unsigned long wait_table_hash_nr_entries;
        unsigned long wait_table_bits;

        ZONE_PADDING(_pad1_)

        /* Write-intensive fields used from the page allocator */
        spinlock_t lock;

        /* free areas of different sizes */
        struct free_area free_area[MAX_ORDER];  /* free page lists of the zone, managed as a two-dimensional array */

        /* zone flags, see below */
        unsigned long flags;

        ZONE_PADDING(_pad2_)

        /* Write-intensive fields used by page reclaim */

        /* Fields commonly accessed by the page reclaim scanner */
        spinlock_t lru_lock;
        struct lruvec lruvec;

        /* Evictions & activations on the inactive file list */
        atomic_long_t inactive_age;

        /*
         * When free pages are below this point, additional steps are taken
         * when reading the number of free pages to avoid per-cpu counter
         * drift allowing watermarks to be breached
         */
        unsigned long percpu_drift_mark;

    #if defined CONFIG_COMPACTION || defined CONFIG_CMA
        /* pfn where compaction free scanner should start */
        unsigned long compact_cached_free_pfn;
        /* pfn where async and sync compaction migration scanner should start */
        unsigned long compact_cached_migrate_pfn[2];
    #endif

    #ifdef CONFIG_COMPACTION
        /*
         * On compaction failure, 1<<compact_defer_shift compactions
         * are skipped before trying again. The number attempted since
         * last failure is tracked with compact_considered.
         */
        unsigned int compact_considered;
        unsigned int compact_defer_shift;
        int compact_order_failed;
    #endif

    #if defined CONFIG_COMPACTION || defined CONFIG_CMA
        /* Set to true when the PG_migrate_skip bits should be cleared */
        bool compact_blockskip_flush;
    #endif

        ZONE_PADDING(_pad3_)
        /* Zone statistics */
        atomic_long_t vm_stat[NR_VM_ZONE_STAT_ITEMS];
    } ____cacheline_internodealigned_in_smp;
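To make the roles of watermark[] and lowmem_reserve[] more concrete, here is a minimal sketch of a watermark test. It is a deliberate simplification of what the page allocator does (it ignores allocation order, CMA pages, and per-cpu counter drift), and wm_zone, the WM_ constants, and wm_zone_watermark_ok are hypothetical names.

```c
#include <stdbool.h>

enum wm_mark { WM_MIN, WM_LOW, WM_HIGH, WM_NR_MARKS };
#define WM_MAX_ZONES 3   /* hypothetical; the kernel uses MAX_NR_ZONES */

/* Hypothetical, reduced view of the fields involved in the check. */
struct wm_zone {
    unsigned long watermark[WM_NR_MARKS];
    long lowmem_reserve[WM_MAX_ZONES];
    unsigned long free_pages;
};

/*
 * Simplified check: taking nr_requested pages is acceptable only if the
 * remaining free pages stay above the chosen watermark plus the reserve
 * this zone keeps against requests whose preferred zone is classzone_idx.
 */
bool wm_zone_watermark_ok(const struct wm_zone *z, enum wm_mark mark,
                          int classzone_idx, unsigned long nr_requested)
{
    long free_after = (long)z->free_pages - (long)nr_requested;
    long floor = (long)z->watermark[mark] + z->lowmem_reserve[classzone_idx];

    return free_after > floor;
}
```

The reserve term is what keeps allocations that could have been served from a higher zone from draining a lower zone completely.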

    /*
     * Each physical page in the system has a struct page associated with
     * it to keep track of whatever it is we are using the page for at the
     * moment. Note that we have no way to track which tasks are using
     * a page, though if it is a pagecache page, rmap structures can tell us
     * who is mapping it.
     *
     * The objects in struct page are organized in double word blocks in
     * order to allows us to use atomic double word operations on portions
     * of struct page. That is currently only used by slub but the arrangement
     * allows the use of atomic double word operations on the flags/mapping
     * and lru list pointers also.
     */
    struct page {
        /* First double word block */
        unsigned long flags;                /* page flags; see enum pageflags */
        union {
            struct address_space *mapping;  /* If low bit clear, points to
                                             * inode address_space, or NULL.
                                             * If page mapped as anonymous
                                             * memory, low bit is set, and
                                             * it points to anon_vma object:
                                             * see PAGE_MAPPING_ANON below.
                                             */
            void *s_mem;                    /* slab first object */
        };

        /* Second double word */
        struct {
            union {
                pgoff_t index;              /* Our offset within mapping. */
                void *freelist;             /* sl[aou]b first free object */
                bool pfmemalloc;            /* If set by the page allocator,
                                             * ALLOC_NO_WATERMARKS was set
                                             * and the low watermark was not
                                             * met implying that the system
                                             * is under some pressure. The
                                             * caller should try ensure
                                             * this page is only used to
                                             * free other pages.
                                             */
            };

            union {
    #if defined(CONFIG_HAVE_CMPXCHG_DOUBLE) && \
        defined(CONFIG_HAVE_ALIGNED_STRUCT_PAGE)
                /* Used for cmpxchg_double in slub */
                unsigned long counters;
    #else
                /*
                 * Keep _count separate from slub cmpxchg_double data.
                 * As the rest of the double word is protected by
                 * slab_lock but _count is not.
                 */
                unsigned counters;
    #endif

                struct {
                    union {
                        /*
                         * Count of ptes mapped in
                         * mms, to show when page is
                         * mapped & limit reverse map
                         * searches.
                         *
                         * Used also for tail pages
                         * refcounting instead of
                         * _count. Tail pages cannot
                         * be mapped and keeping the
                         * tail page _count zero at
                         * all times guarantees
                         * get_page_unless_zero() will
                         * never succeed on tail
                         * pages.
                         */
                        atomic_t _mapcount;

                        struct { /* SLUB */
                            unsigned inuse:16;
                            unsigned objects:15;
                            unsigned frozen:1;
                        };
                        int units;          /* SLOB */
                    };
                    atomic_t _count;        /* Usage count, see below. */
                };
                unsigned int active;        /* SLAB */
            };
        };

        /* Third double word block */
        union {
            struct list_head lru;           /* Pageout list, eg. active_list
                                             * protected by zone->lru_lock !
                                             * Can be used as a generic list
                                             * by the page owner.
                                             */
            struct {                        /* slub per cpu partial pages */
                struct page *next;          /* Next partial slab */
    #ifdef CONFIG_64BIT
                int pages;                  /* Nr of partial slabs left */
                int pobjects;               /* Approximate # of objects */
    #else
                short int pages;
                short int pobjects;
    #endif
            };

            struct slab *slab_page;         /* slab fields */
            struct rcu_head rcu_head;       /* Used by SLAB
                                             * when destroying via RCU
                                             */
    #if defined(CONFIG_TRANSPARENT_HUGEPAGE) && USE_SPLIT_PMD_PTLOCKS
            pgtable_t pmd_huge_pte;         /* protected by page->ptl */
    #endif
        };

        /* Remainder is not double word aligned */
        union {
            unsigned long private;          /* Mapping-private opaque data:
                                             * usually used for buffer_heads
                                             * if PagePrivate set; used for
                                             * swp_entry_t if PageSwapCache;
                                             * indicates order in the buddy
                                             * system if PG_buddy is set.
                                             */
    #if USE_SPLIT_PTE_PTLOCKS
    #if ALLOC_SPLIT_PTLOCKS
            spinlock_t *ptl;
    #else
            spinlock_t ptl;
    #endif
    #endif
            struct kmem_cache *slab_cache;  /* SL[AU]B: Pointer to slab */
            struct page *first_page;        /* Compound tail pages */
        };

        /*
         * On machines where all RAM is mapped into kernel address space,
         * we can simply calculate the virtual address. On machines with
         * highmem some memory is mapped into kernel virtual memory
         * dynamically, so we need a place to store that address.
         * Note that this field could be 16 bits on x86 ... ;)
         *
         * Architectures with slow multiplication can define
         * WANT_PAGE_VIRTUAL in asm/page.h
         */
    #if defined(WANT_PAGE_VIRTUAL)
        void *virtual;                      /* Kernel virtual address (NULL if
                                             * not kmapped, ie. highmem)
                                             */
    #endif /* WANT_PAGE_VIRTUAL */
    #ifdef CONFIG_WANT_PAGE_DEBUG_FLAGS
        unsigned long debug_flags;          /* Use atomic bitops on this */
    #endif

    #ifdef CONFIG_BLK_DEV_IO_TRACE
        struct task_struct *tsk_dirty;      /* task that sets this page dirty */
    #endif

    #ifdef CONFIG_KMEMCHECK
        /*
         * kmemcheck wants to track the status of each byte in a page; this
         * is a pointer to such a status block. NULL if not tracked.
         */
        void *shadow;
    #endif

    #ifdef LAST_CPUPID_NOT_IN_PAGE_FLAGS
        int _last_cpupid;
    #endif

    #ifdef CONFIG_PAGE_OWNER
        int order;
        gfp_t gfp_mask;
        struct stack_trace trace;
        unsigned long trace_entries[8];
    #endif
    };

Because the kernel creates one struct page for every physical page frame, struct page must be kept as small as possible; otherwise the struct page array alone would consume a large amount of memory.

To save memory, struct page makes heavy use of unions. Only the most commonly used fields are described below:

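1) flags: describes the state of the page and other information, as shown in the figure:

The word is divided into four parts. The flag bits grow from the low end toward the high bits, the other fields are packed from the high end toward the low bits, and unused bits may remain in between.

section: mainly used by the sparse memory model (SPARSEMEM); can be ignored here.

node: the NUMA node number, identifying which node the page belongs to.

zone: the zone identifier, indicating which zone the page belongs to.

flag: the page state flags. Commonly used ones are:

PG_locked: the page is locked, meaning some user is currently operating on it.

PG_error: an I/O operation involving this page failed.

PG_referenced: the page has been accessed recently.

PG_active: the page is on the active LRU list. PG_active and PG_referenced together track how active the page is, which is very useful during memory reclaim.

PG_uptodate: the page's data is in sync with the backing store and is up to date.

PG_dirty: the page's contents have been modified relative to the data in the backing store.

PG_lru: the page is on an LRU list.

PG_slab: the page belongs to the slab allocator.

PG_reserved: when set, the page is kept from being swapped out.

PG_private: must be set if the private member of the page is non-empty. See the explanation of private in 6).

PG_writeback: the page's data is being written back to the backing store.

PG_swapcache: the page is in the swap cache.

PG_mappedtodisk: the page's data has a corresponding location in the backing store.

PG_reclaim: the page is about to be reclaimed. The page frame reclaiming algorithm (PFRA) sets this flag once it decides to reclaim a page.

PG_swapbacked: the page's backing store is swap.

PG_unevictable: the page is pinned and cannot be swapped out; it appears on the LRU_UNEVICTABLE list. This covers pages used by ramdisk or ramfs, shm_locked pages, and pages locked with mlock.

PG_mlocked: the page is locked in a vma, usually because a range of memory was locked with the mlock() system call.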
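The layout described above (node and zone fields packed at the top of the word, PG_* state bits at the bottom) can be sketched as follows. The field widths and every fl_ name are assumptions chosen for illustration; the real widths depend on the kernel configuration, and the section field is left out.

```c
#include <limits.h>

#define FL_BITS         (sizeof(unsigned long) * CHAR_BIT)
#define FL_NODES_WIDTH  6   /* hypothetical width of the node field */
#define FL_ZONES_WIDTH  2   /* hypothetical width of the zone field */

/* Fields are packed downward from the most significant bit. */
#define FL_NODES_SHIFT  (FL_BITS - FL_NODES_WIDTH)
#define FL_ZONES_SHIFT  (FL_NODES_SHIFT - FL_ZONES_WIDTH)
#define FL_NODES_MASK   ((1UL << FL_NODES_WIDTH) - 1)
#define FL_ZONES_MASK   ((1UL << FL_ZONES_WIDTH) - 1)

/* Combine low-order state bits with the node and zone ids. */
unsigned long fl_encode(unsigned long state_bits, unsigned node, unsigned zone)
{
    return state_bits
         | (((unsigned long)node & FL_NODES_MASK) << FL_NODES_SHIFT)
         | (((unsigned long)zone & FL_ZONES_MASK) << FL_ZONES_SHIFT);
}

/* Recover the node id from a flags word. */
unsigned fl_node(unsigned long flags)
{
    return (unsigned)((flags >> FL_NODES_SHIFT) & FL_NODES_MASK);
}

/* Recover the zone id from a flags word. */
unsigned fl_zone(unsigned long flags)
{
    return (unsigned)((flags >> FL_ZONES_SHIFT) & FL_ZONES_MASK);
}
```

Storing the node and zone in the flags word lets the kernel find a page's node and zone without adding extra pointers to struct page.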

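The kernel provides a set of standard macros for testing and manipulating specific bits, for example:

-> PageXXX(page): tests whether the PG_XXX bit is set on the page

-> SetPageXXX(page): sets the PG_XXX bit on the page

-> ClearPageXXX(page): clears the PG_XXX bit on the page

-> TestSetPageXXX(page): sets the PG_XXX bit on the page and returns its previous value

-> TestClearPageXXX(page): clears the PG_XXX bit on the page and returns its previous value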
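The five accessor families can be approximated in user space with C11 atomics, where atomic_fetch_or and atomic_fetch_and return the old value and so give the test-and-set behaviour directly. This is only a sketch of the pattern: the Toy prefix, the TOY_PAGEFLAG generator macro, and the bit numbering are hypothetical, and the kernel builds its macros on its own atomic bit operations.

```c
#include <stdatomic.h>
#include <stdbool.h>

enum toy_pageflags { TOY_PG_locked, TOY_PG_dirty };  /* hypothetical numbering */

struct toy_page {
    atomic_ulong flags;
};

/* Generate the five accessors for one flag bit. */
#define TOY_PAGEFLAG(uname, lname)                                        \
static inline bool ToyPage##uname(struct toy_page *p)                     \
{ return atomic_load(&p->flags) & (1UL << TOY_PG_##lname); }              \
static inline void ToySetPage##uname(struct toy_page *p)                  \
{ atomic_fetch_or(&p->flags, 1UL << TOY_PG_##lname); }                    \
static inline void ToyClearPage##uname(struct toy_page *p)                \
{ atomic_fetch_and(&p->flags, ~(1UL << TOY_PG_##lname)); }                \
static inline bool ToyTestSetPage##uname(struct toy_page *p)              \
{ return atomic_fetch_or(&p->flags, 1UL << TOY_PG_##lname)                \
         & (1UL << TOY_PG_##lname); }                                     \
static inline bool ToyTestClearPage##uname(struct toy_page *p)            \
{ return atomic_fetch_and(&p->flags, ~(1UL << TOY_PG_##lname))            \
         & (1UL << TOY_PG_##lname); }

TOY_PAGEFLAG(Locked, locked)
TOY_PAGEFLAG(Dirty, dirty)
```

With these definitions, ToyTestSetPageDirty(page) reports whether the page was already dirty while marking it dirty in the same atomic step.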

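2) _count: the reference count, i.e. the number of references the kernel holds on the page. Taking a reference in order to operate on the page increments it, and dropping the reference afterwards decrements it. When the value reaches 0, nothing references the page any more, so the page can be freed; memory reclaim relies on this.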
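The get/put discipline behind _count can be sketched in user space as below. The rc_ names are hypothetical, and the freed field exists only so the example can show the moment of release; the kernel would return the page to the allocator at that point.

```c
#include <stdatomic.h>
#include <stdbool.h>

struct rc_page {
    atomic_int count;   /* stand-in for _count */
    bool freed;         /* illustration only: set when the last reference goes */
};

/* Take a reference before operating on the page. */
void rc_get_page(struct rc_page *p)
{
    atomic_fetch_add(&p->count, 1);
}

/*
 * Drop a reference. Returns true if this was the last one, in which
 * case the page is released.
 */
bool rc_put_page(struct rc_page *p)
{
    /* atomic_fetch_sub returns the value held before the decrement. */
    if (atomic_fetch_sub(&p->count, 1) == 1) {
        p->freed = true;
        return true;
    }
    return false;
}
```

Only the caller that observes the transition to zero releases the page, so concurrent users cannot free it twice.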

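3) _mapcount: the number of page-table entries that map the page, i.e. how many mappings currently share the page. The initial value is -1; if the page is mapped by the page table of exactly one process, the value is 0. If the page is in the buddy system, the value is PAGE_BUDDY_MAPCOUNT_VALUE (-128), and the kernel tests for that value to decide whether a page belongs to the buddy system.

Note the difference between _count and _mapcount: _mapcount counts page-table mappings, while _count counts users of the page. Every mapping also holds a reference, so a mapped page always has a non-zero _count, whereas a page can be in use without being mapped by any page table (for example, a page-cache page that no process has mapped).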
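The -1 bias of _mapcount and the special buddy value can be illustrated with a small sketch. All mc_ names are hypothetical; only the value -128 and the -1 starting point come from the description above.

```c
#include <stdatomic.h>
#include <stdbool.h>

#define MC_BUDDY_MAPCOUNT_VALUE (-128)   /* value quoted in the text */

struct mc_page {
    atomic_int mapcount;    /* stand-in for _mapcount */
};

/* A fresh page starts at -1, meaning "mapped by nobody". */
void mc_page_init(struct mc_page *p)
{
    atomic_init(&p->mapcount, -1);
}

/* Number of page-table mappings: the stored value plus one. */
int mc_page_mapcount(struct mc_page *p)
{
    return atomic_load(&p->mapcount) + 1;
}

/* True if at least one page table maps the page. */
bool mc_page_mapped(struct mc_page *p)
{
    return atomic_load(&p->mapcount) >= 0;
}

/* True if the page is marked as sitting in the buddy system. */
bool mc_page_buddy(struct mc_page *p)
{
    return atomic_load(&p->mapcount) == MC_BUDDY_MAPCOUNT_VALUE;
}

/* Record one more page-table mapping. */
void mc_add_mapping(struct mc_page *p)
{
    atomic_fetch_add(&p->mapcount, 1);
}

/* Record the removal of one page-table mapping. */
void mc_remove_mapping(struct mc_page *p)
{
    atomic_fetch_sub(&p->mapcount, 1);
}
```

Starting at -1 means the first mapping brings the stored value to 0, which matches the single-process case described above.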

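4) mapping: has three possible meanings

a: If mapping == 0, the page belongs to the swap cache; when an address space is needed, the swap address space swapper_space is used.

b: If mapping != 0 and bit[0] == 0, the page belongs to the page cache or a file mapping, and mapping points to the file's address_space.

c: If mapping != 0 and bit[0] != 0, the page is an anonymous mapping, and mapping points to a struct anon_vma object.

The anon_vma is recovered from mapping as follows: anon_vma = (struct anon_vma *)(mapping - PAGE_MAPPING_ANON).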
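The low-bit tagging used by mapping can be sketched as a tagged pointer. The mp_ types and helpers are hypothetical stand-ins; the sketch relies on the pointed-to objects being at least 2-byte aligned so that bit 0 of a real pointer is always clear.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MP_MAPPING_ANON 1UL    /* plays the role of PAGE_MAPPING_ANON */

struct mp_anon_vma { int dummy; };        /* stand-in for struct anon_vma */
struct mp_address_space { int dummy; };   /* stand-in for struct address_space */

struct mp_page {
    void *mapping;   /* file address space, or anon_vma with bit 0 set */
};

/* Store an anon_vma pointer with the tag bit set. */
void mp_set_anon(struct mp_page *p, struct mp_anon_vma *av)
{
    p->mapping = (void *)((uintptr_t)av + MP_MAPPING_ANON);
}

/* True if the page is tagged as an anonymous mapping. */
bool mp_page_anon(const struct mp_page *p)
{
    return ((uintptr_t)p->mapping & MP_MAPPING_ANON) != 0;
}

/* Strip the tag to recover the anon_vma, or NULL for non-anonymous pages. */
struct mp_anon_vma *mp_page_anon_vma(const struct mp_page *p)
{
    if (!mp_page_anon(p))
        return NULL;
    return (struct mp_anon_vma *)((uintptr_t)p->mapping - MP_MAPPING_ANON);
}
```

Reusing the spare low bit lets one pointer-sized field serve both file-backed and anonymous pages.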

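5) index: the page's offset within its mapping, counted in pages. For a page-cache page this is the offset within the file's address space, so it is relative to the whole file even when a process maps only part of it: if 1M of a file is mapped starting at some file offset, the index of a page in that range is its page offset from the start of the file, not from the start of the mapped region. For a page inside a vma, the index is derived from the page's position in the vma plus the vma's own starting page offset.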
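The two ways of arriving at an index can be sketched as below. The calculation mirrors the usual form (address offset inside the vma, shifted to pages, plus the vma's starting page offset), but ix_vma, the ix_ helpers, and the 4 KiB page size are assumptions made for the example.

```c
#define IX_PAGE_SHIFT 12   /* assumption: 4 KiB pages */

/* Hypothetical, reduced view of a vma: where it starts and which
 * page of the file its first page corresponds to. */
struct ix_vma {
    unsigned long vm_start;   /* first virtual address of the area */
    unsigned long vm_pgoff;   /* file offset of vm_start, in pages */
};

/* Index of the page that holds a given byte offset of the file. */
unsigned long ix_offset_to_index(unsigned long long file_offset)
{
    return (unsigned long)(file_offset >> IX_PAGE_SHIFT);
}

/* Index of the page backing a virtual address inside the vma. */
unsigned long ix_linear_page_index(const struct ix_vma *vma,
                                   unsigned long address)
{
    return ((address - vma->vm_start) >> IX_PAGE_SHIFT) + vma->vm_pgoff;
}
```

For a mapping that starts 1M into a file (vm_pgoff of 256 with 4 KiB pages), the fourth page of the mapped area gets index 259, the same value obtained from its byte offset in the file.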

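6) private: a private data field whose meaning depends on how the page is being used:

a: If the PG_private flag is set, private refers to buffer_heads.

b: If the PG_swapcache flag is set, private stores the swp_entry_t that locates the page in the swap area.

c: If _mapcount == PAGE_BUDDY_MAPCOUNT_VALUE, the page is in the buddy system and private stores the order of the buddy block.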

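7) lru: a list head with three main uses:

a: When the page is in the buddy system, it links free blocks of the same order (using the lru of only the first page of each block is enough).

b: When the page belongs to slab, page->lru.next points to the management structure of the cache the page resides in, and page->lru.prev points to the management structure of the slab that holds the page.

c: When the page is used by user space or as page cache, it links the page into the corresponding LRU list of the zone, for use by memory reclaim.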
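All three uses rest on the same intrusive-list technique: the list node is embedded in the page descriptor, and the enclosing structure is recovered from a node pointer. The following is a minimal user-space sketch of that technique; the ll_ names are hypothetical and only imitate the kernel's struct list_head and container_of.

```c
#include <stddef.h>

/* Circular doubly linked list node, embedded in the owning object. */
struct ll_list_head {
    struct ll_list_head *next, *prev;
};

struct ll_page {
    unsigned long pfn;
    struct ll_list_head lru;   /* stand-in for page->lru */
};

/* Recover the enclosing object from a pointer to its embedded member. */
#define ll_container_of(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* Make head an empty list that points at itself. */
void ll_init(struct ll_list_head *head)
{
    head->next = head;
    head->prev = head;
}

/* Insert entry right after head. */
void ll_add(struct ll_list_head *entry, struct ll_list_head *head)
{
    entry->next = head->next;
    entry->prev = head;
    head->next->prev = entry;
    head->next = entry;
}

/* Unlink entry and leave it pointing at itself. */
void ll_del(struct ll_list_head *entry)
{
    entry->prev->next = entry->next;
    entry->next->prev = entry->prev;
    entry->next = entry;
    entry->prev = entry;
}

/* First page on the list, or NULL if the list is empty. */
struct ll_page *ll_first_page(struct ll_list_head *head)
{
    if (head->next == head)
        return NULL;
    return ll_container_of(head->next, struct ll_page, lru);
}
```

Because the node lives inside the page descriptor, moving a page between lists needs no allocation, which suits both the buddy free lists and the LRU lists.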
