Parsing logs with Logstash grok patterns, and problems I ran into with Kibana


#Nginx log format definition

log_format combine '$remote_addr - $remote_user [$time_local] "$request" $http_host '
                   '$status $body_bytes_sent "$http_referer" '
                   '"$http_user_agent" "$http_x_forwarded_for" '
                   '$upstream_addr $upstream_status $upstream_cache_status "$upstream_http_content_type" $upstream_response_time > $request_time';

#Log content

11.11.1.1 - - [01/Mar/2013:12:23:53 +0800] "GET /v1/api HTTP/1.1" api.xx.com 200 4003 "https://api.xx.com/v1/api" "Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0)" "-" 10.1.1.1:80 200 - "text/html;charset=UTF-8" 0.023 > 0.023

#GROK pattern

%{IPORHOST:client_ip} %{USER:ident} %{USER:auth} \[%{HTTPDATE:timestamp}\] "(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:http_version})?|-)" %{HOST:domain} %{NUMBER:response} (?:%{NUMBER:bytes}|-) %{QS:referrer} %{QS:agent} "(%{WORD:x_forword}|-)" (%{URIHOST:upstream_host}|-) %{NUMBER:upstream_response} (%{WORD:upstream_cache_status}|-) %{QS:upstream_content_type} (%{BASE16FLOAT:upstream_response_time}) > (%{BASE16FLOAT:request_time})

#GROK definition in Logstash, with the double quotes escaped

filter {
  grep {
    match => [ "@message", "DNSPod-monitor|DNSPod-reporting|(Webluker NetWork Probe Agent)|JianKongBao" ]
    type => "nginx-access"
    negate => true
  }
  grok {
    type => "nginx-access"
    pattern => "%{IPORHOST:client_ip} %{USER:ident} %{USER:auth} \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:http_version})?|-)\" %{HOST:domain} %{NUMBER:response} (?:%{NUMBER:bytes}|-) %{QS:referrer} %{QS:agent} \"(%{WORD:x_forword}|-)\" (%{URIHOST:upstream_host}|-) %{NUMBER:upstream_response} (%{WORD:upstream_cache_status}|-) %{QS:upstream_content_type} (%{BASE16FLOAT:upstream_response_time}) > (%{BASE16FLOAT:request_time})"
  }
}

#GROK built-in patterns

https://github.com/logstash/logstash/blob/v1.1.9/patterns/grok-patterns

#Online debugger:

http://grokdebug.herokuapp.com/

{
  "client_ip": [ [ "11.11.1.1" ] ],
  "ident": [ [ "-" ] ],
  "auth": [ [ "-" ] ],
  "timestamp": [ [ "01/Mar/2013:12:23:53 +0800" ] ],
  "verb": [ [ "GET" ] ],
  "request": [ [ "/v1/api" ] ],
  "http_version": [ [ "1.1" ] ],
  "domain": [ [ "api.xx.com" ] ],
  "response": [ [ "200" ] ],
  "bytes": [ [ "4003" ] ],
  "referrer": [ [ "\"https://api.xx.com/v1/api\"" ] ],
  "agent": [ [ "\"Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0)\"" ] ],
  "x_forword": [ [ null ] ],
  "upstream_host": [ [ "10.1.1.1:80" ] ],
  "port": [ [ "80" ] ],
  "upstream_response": [ [ "200" ] ],
  "upstream_cache_status": [ [ null ] ],
  "upstream_content_type": [ [ "\"text/html;charset=UTF-8\"" ] ],
  "upstream_response_time": [ [ "0.023" ] ],
  "request_time": [ [ "0.023" ] ]
}

StatsD monitoring

statsd {
  host => "10.1.1.1"
  port => 8125
  increment => "nginx.response.%{response}"
  increment => "nginx.request.total"
  timing => ["nginx.request.time", "%{request_time}"]
  timing => ["nginx.upstream.response.time", "%{upstream_response_time}"]
}

-------------------------------------

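Honestly, I switched to Logstash because I really could not get scribe to work, and also because the product manager needs me to build a customizable panel and chart system.

I had not touched the ELK stack for a long time and had forgotten the Logstash syntax. Our crawler writes logs in a format we defined ourselves, so this time I had to write the regular expressions by hand.

Logstash itself ships with built-in patterns for many programs, such as nginx, haproxy, Apache and Tomcat; you only need to specify the type of format you want.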

Original address of this article: http://xiaorui.cc http://xiaorui.cc/?p=1055

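Then I ran into a problem: it seems type cannot be used carelessly. I did not pay attention at first and casually set it to nginx-access, and then the grok pattern in the filter would not match no matter what I tried, which was very frustrating.

Once I removed type, the pattern matched normally. It surely cannot be that silly; the likely cause is that a filter's type option restricts it to events carrying the same type, and my input is tagged producer. I will dig into this further when I have time.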

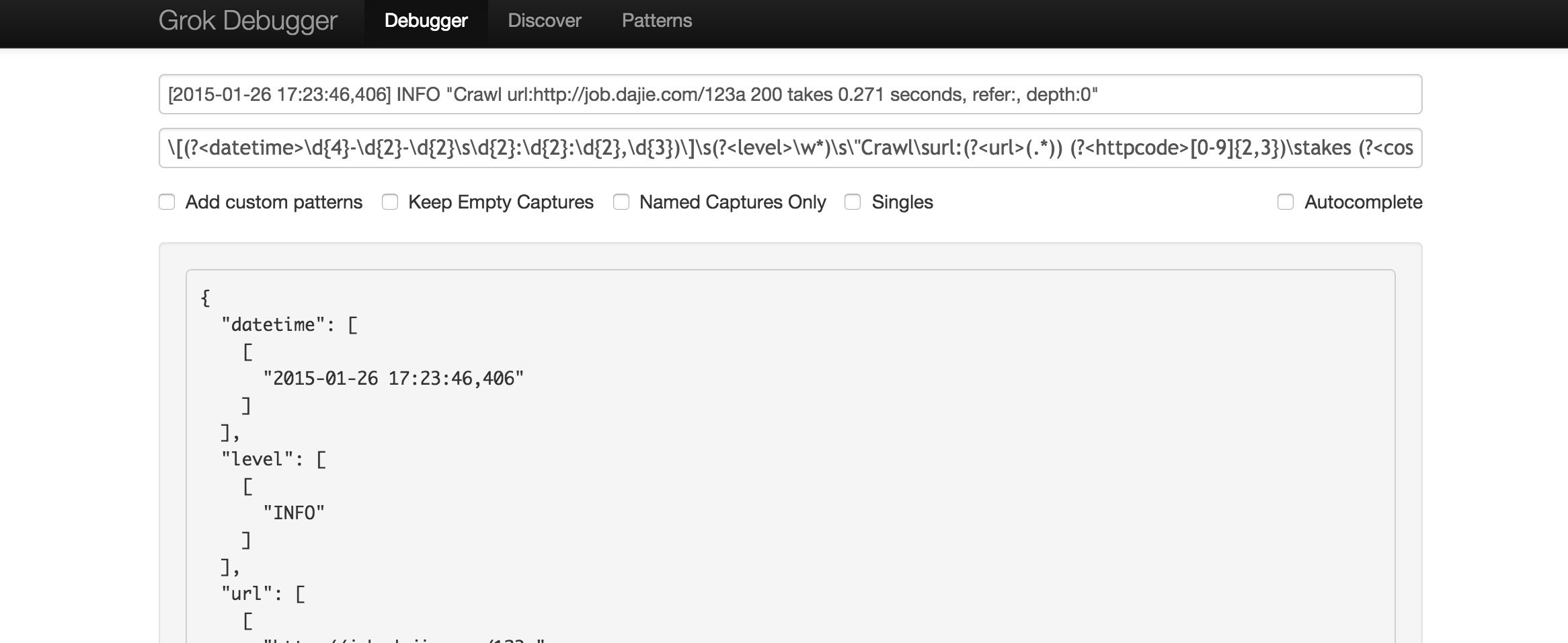

For grep or grok, you can test how your patterns match at http://grokdebug.herokuapp.com/.
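Patterns can also be checked offline with an ordinary regex engine. The sketch below is a Python rendering of the hand-written expression for our crawler log (the same log format the agent.conf that follows is written for). It assumes Python's `re` module, which spells named groups `(?P<name>...)` where grok uses `(?<name>...)`; the names `CRAWL_LINE` and `parse_crawl_line` are made up for illustration.

```python
import re

# Python spells named groups (?P<name>...), where grok/Oniguruma uses (?<name>...).
CRAWL_LINE = re.compile(
    r'\[(?P<datetime>\d{4}-\d{2}-\d{2}\s\d{2}:\d{2}:\d{2},\d{3})\]\s'
    r'(?P<level>\w*)\s"Crawl\surl:(?P<url>.*) '
    r'(?P<httpcode>[0-9]{2,3})\stakes (?P<cost>\d\.\d\d).*'
)


def parse_crawl_line(line):
    """Return the captured fields as a dict, or None when the line does not match."""
    match = CRAWL_LINE.search(line)
    return match.groupdict() if match else None


sample = ('[2015-01-27 14:56:55,637] INFO "Crawl url:'
          'http://dealer.autohome.com.cn/8178/newslist.html 200 takes 0.146 '
          'seconds, refer:, depth:2"')
fields = parse_crawl_line(sample)
print(fields)
```

Note that `cost` comes back as `0.14` rather than `0.146`, because the expression captures only one digit, a dot and two more digits; widening it to something like `\d+\.\d+` would keep the full value.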

Here is my tested Logstash agent.conf configuration:

input {
  file {
    type => "producer"
    path => "/home/ruifengyun/buzzspider/spider/spider.log"
  }
}
filter {
  grok {
    pattern => "\[(?<datetime>\d{4}-\d{2}-\d{2}\s\d{2}:\d{2}:\d{2},\d{3})\]\s(?<level>\w*)\s\"Crawl\surl:(?<url>(.*)) (?<httpcode>[0-9]{2,3})\stakes (?<cost>\d\.\d\d).*"
  }
}
output {
  redis {
    host => "123.116.x.x"
    data_type => "list"
    key => "logstash:demo"
  }
  stdout { codec => rubydebug }
}

The result in the terminal:

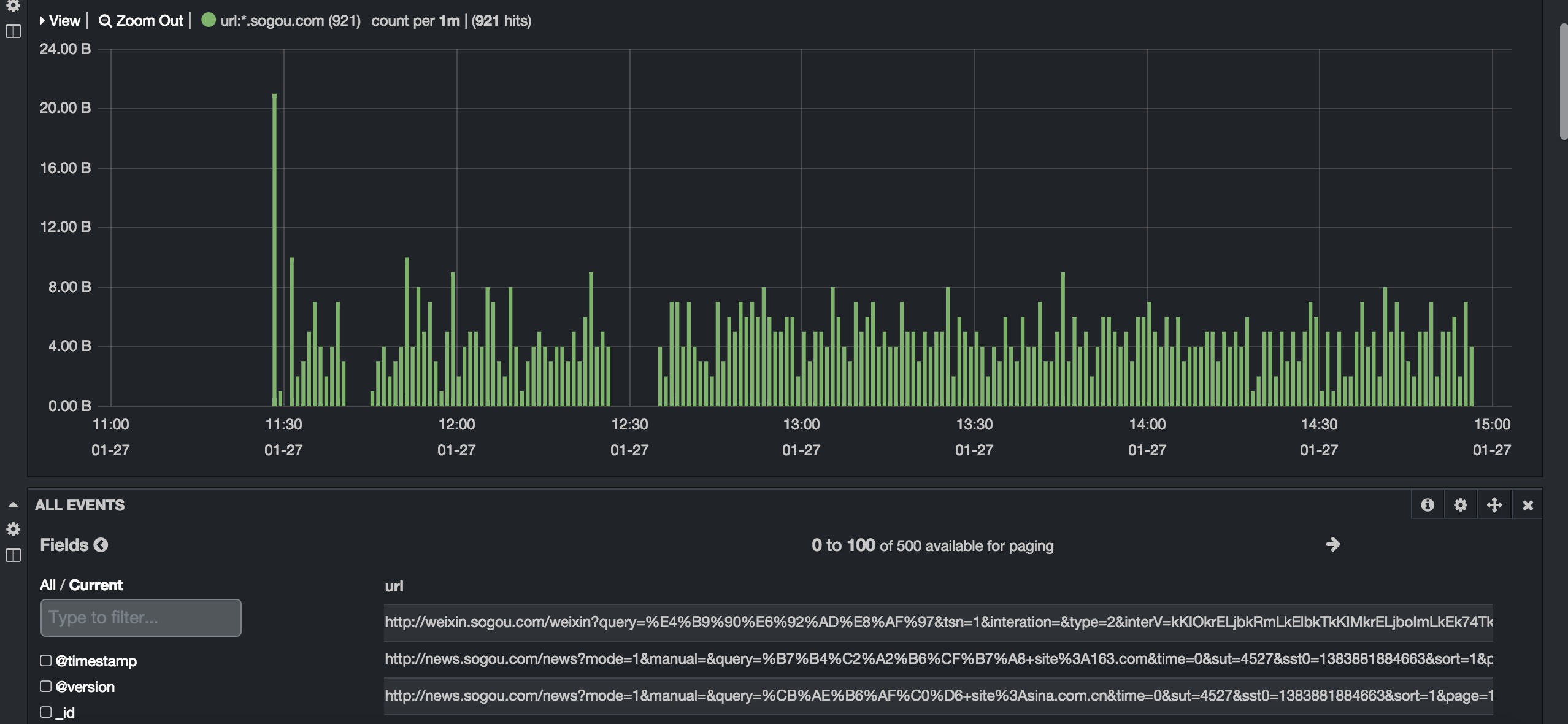

"message" => "[2015-01-27 14:56:55,613] INFO \"Crawl url:http://weixin.sogou.com/weixin?query=%E4%B9%90%E6%92%AD%E8%AF%97&tsn=1&interation=&type=2&interV=kKIOkrELjbkRmLkElbkTkKIMkrELjboImLkEk74TkKIRmLkEk78TkKILkbELjboN_105333196&ie=utf8&page=7&p=40040100&dp=1&num=100 200 takes 0.085 seconds, refer:, depth:2\"", "@timestamp" => "2015-01-27T06:56:56.359Z", "@version" => "1", "type" => "producer", "host" => "bj-buzz-dev01", "path" => "/home/ruifengyun/buzzspider/spider/spider.log", "datetime" => "2015-01-27 14:56:55,613", "level" => "INFO", "url" => "http://weixin.sogou.com/weixin?query=%E4%B9%90%E6%92%AD%E8%AF%97&tsn=1&interation=&type=2&interV=kKIOkrELjbkRmLkElbkTkKIMkrELjboImLkEk74TkKIRmLkEk78TkKILkbELjboN_105333196&ie=utf8&page=7&p=40040100&dp=1&num=100", "httpcode" => "200", "cost" => "0.08"}{ "message" => "[2015-01-27 14:56:55,637] INFO \"Crawl url:http://dealer.autohome.com.cn/8178/newslist.html 200 takes 0.146 seconds, refer:, depth:2\"", "@timestamp" => "2015-01-27T06:56:56.359Z", "@version" => "1", "type" => "producer", "host" => "bj-buzz-dev01", "path" => "/home/ruifengyun/buzzspider/spider/spider.log", "datetime" => "2015-01-27 14:56:55,637", "level" => "INFO", "url" => "http://dealer.autohome.com.cn/8178/newslist.html", "httpcode" => "200", "cost" => "0.14"}我们在kibana 3的界面上看到的结果,我这里是搜索下 时间周期里爬了sogou.com有多少次。

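One difficulty with Kibana is not knowing how to write the search queries.

For example, url:*.sogou.com* . At first I assumed I could write plain regular expressions. But Kibana's backend uses the Elasticsearch query syntax, so your queries have to follow what Elasticsearch expects.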
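Since the search box text ends up in Elasticsearch, the same search can be expressed as a request body and tried against Elasticsearch directly. A minimal sketch, assuming the search box maps to a `query_string` query; the helper name `build_url_query` is hypothetical, and sending the body (index name, HTTP client) is left out on purpose.

```python
import json


def build_url_query(term, field="url"):
    """Build a query_string search body that wraps `term` in wildcards."""
    return {
        "query": {
            "query_string": {
                # Wildcards here are Lucene query-string wildcards, not regex.
                "query": f"{field}:*{term}*",
            }
        }
    }


body = build_url_query("sogou.com")
print(json.dumps(body, indent=2))
```

How well a wildcard matches depends on how the `url` field was analyzed at index time, so results may differ between an analyzed and a not-analyzed field.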

################ The official documentation also covers this in detail; below is a brief translation of the official Logstash article on grok.

Example

Here is what the log looks like:

55.3.244.1 GET /index.html 15824 0.043

The matching pattern:

%{IP:client} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}

How is it written in the config file?

input {
  file {
    path => "/var/log/http.log"
  }
}

filter {
  grok {
    match => [ "message", "%{IP:client} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}" ]
  }
}

What does it look like after parsing?

client: 55.3.244.1

method: GET

request: /index.html

bytes: 15824

duration: 0.043
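To make the `%{NAME:field}` notation concrete, here is a minimal sketch of how such references can be expanded into an ordinary regex with named groups. It is not how Logstash itself is implemented; the `PATTERNS` table holds deliberately simplified stand-ins for the real built-in definitions, and `grok_to_regex` and `grok_match` are hypothetical helper names.

```python
import re

# A tiny subset of pattern definitions, simplified for this sketch;
# the real built-in definitions are stricter.
PATTERNS = {
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
    "WORD": r"\b\w+\b",
    "URIPATHPARAM": r"/\S*",
    "NUMBER": r"[+-]?\d+(?:\.\d+)?",
}

# Matches %{NAME} and %{NAME:field}.
GROK_REF = re.compile(r"%\{(\w+)(?::(\w+))?\}")


def grok_to_regex(expression):
    """Expand %{NAME:field} references into Python named groups."""
    def expand(ref):
        name, field = ref.group(1), ref.group(2)
        body = PATTERNS[name]
        return f"(?P<{field}>{body})" if field else f"(?:{body})"
    return re.compile(GROK_REF.sub(expand, expression))


def grok_match(expression, line):
    """Return the named fields as a dict, or None if the line does not match."""
    match = grok_to_regex(expression).search(line)
    return match.groupdict() if match else None


expr = "%{IP:client} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}"
fields = grok_match(expr, "55.3.244.1 GET /index.html 15824 0.043")
for key, value in fields.items():
    print(f"{key}: {value}")
```

Running it prints the same five fields listed above. All values come back as strings, which is also what grok produces unless a type conversion is requested.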

Custom patterns

(?<field_name>the pattern here)

(?<queue_id>[0-9A-F]{10,11})
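The named-group form can be tried outside Logstash too. A small sketch, assuming Python's `re` module, where the group is written `(?P<queue_id>...)` instead of `(?<queue_id>...)`; the helper `extract_queue_id` and the sample log fragment are made up for illustration.

```python
import re

# Oniguruma/grok: (?<queue_id>...)   Python: (?P<queue_id>...)
QUEUE_ID = re.compile(r"(?P<queue_id>[0-9A-F]{10,11})")


def extract_queue_id(line):
    """Return the first run of 10-11 uppercase hex characters, or None."""
    match = QUEUE_ID.search(line)
    return match.group("queue_id") if match else None


# Hypothetical postfix-style log fragment, for illustration only.
sample = "postfix/cleanup[21403]: BEF25A72965: message-id=<20130101142543.5828399CCAF@example.com>"
print(extract_queue_id(sample))
```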

Of course, you can also collect many patterns in one central file.

# in ./patterns/postfix

POSTFIX_QUEUEID [0-9A-F]{10,11}

filter {
  grok {
    patterns_dir => "./patterns"
    match => [ "message", "%{SYSLOGBASE} %{POSTFIX_QUEUEID:queue_id}: %{GREEDYDATA:syslog_message}" ]
  }
}
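The pattern file format is simple: one `NAME regex` pair per line, with `#` comments. The sketch below shows one way such a file could be read and used; it is an illustration under that assumption, not Logstash's loader. `load_patterns` and `expand` are hypothetical names, `%{SYSLOGBASE}` is left out to keep it short, and the sample line is invented.

```python
import re


def load_patterns(text):
    """Parse 'NAME regex' lines into a dict, skipping blanks and # comments."""
    patterns = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        name, regex = line.split(None, 1)
        patterns[name] = regex
    return patterns


def expand(expression, patterns):
    """Replace %{NAME} and %{NAME:field} references, recursing into nested ones."""
    def repl(ref):
        name, field = ref.group(1), ref.group(2)
        body = expand(patterns[name], patterns)
        return f"(?P<{field}>{body})" if field else f"(?:{body})"
    return re.sub(r"%\{(\w+)(?::(\w+))?\}", repl, expression)


PATTERN_FILE = """
# in ./patterns/postfix
POSTFIX_QUEUEID [0-9A-F]{10,11}
GREEDYDATA .*
"""

patterns = load_patterns(PATTERN_FILE)
regex = re.compile(expand("%{POSTFIX_QUEUEID:queue_id}: %{GREEDYDATA:syslog_message}", patterns))
match = regex.search("BEF25A72965: message-id=<20130101142543.5828399CCAF@example.com>")
print(match.groupdict())
```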

############

Logstash already ships with quite a few patterns; if you want to save yourself some work, borrow from the built-in ones.

USERNAME [a-zA-Z0-9._-]+

USER %{USERNAME}

INT (?:[+-]?(?:[0-9]+))

BASE10NUM (?<![0-9.+-])(?>[+-]?(?:(?:[0-9]+(?:\.[0-9]+)?)|(?:\.[0-9]+)))

NUMBER (?:%{BASE10NUM})

BASE16NUM (?<![0-9A-Fa-f])(?:[+-]?(?:0x)?(?:[0-9A-Fa-f]+))

BASE16FLOAT \b(?<![0-9A-Fa-f.])(?:[+-]?(?:0x)?(?:(?:[0-9A-Fa-f]+(?:\.[0-9A-Fa-f]*)?)|(?:\.[0-9A-Fa-f]+)))\b

POSINT \b(?:[1-9][0-9]*)\b

NONNEGINT \b(?:[0-9]+)\b

WORD \b\w+\b

NOTSPACE \S+

SPACE \s*

DATA .*?

GREEDYDATA .*

QUOTEDSTRING (?>(?<!\\)(?>"(?>\\.|[^\\"]+)+"|""|(?>'(?>\\.|[^\\']+)+')|''|(?>`(?>\\.|[^\\`]+)+`)|``))

UUID [A-Fa-f0-9]{8}-(?:[A-Fa-f0-9]{4}-){3}[A-Fa-f0-9]{12}

# Networking

MAC (?:%{CISCOMAC}|%{WINDOWSMAC}|%{COMMONMAC})

CISCOMAC (?:(?:[A-Fa-f0-9]{4}\.){2}[A-Fa-f0-9]{4})

WINDOWSMAC (?:(?:[A-Fa-f0-9]{2}-){5}[A-Fa-f0-9]{2})

COMMONMAC (?:(?:[A-Fa-f0-9]{2}:){5}[A-Fa-f0-9]{2})

IPV6 ((([0-9A-Fa-f]{1,4}:){7}([0-9A-Fa-f]{1,4}|:))|(([0-9A-Fa-f]{1,4}:){6}(:[0-9A-Fa-f]{1,4}|((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3})|:))|(([0-9A-Fa-f]{1,4}:){5}(((:[0-9A-Fa-f]{1,4}){1,2})|:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3})|:))|(([0-9A-Fa-f]{1,4}:){4}(((:[0-9A-Fa-f]{1,4}){1,3})|((:[0-9A-Fa-f]{1,4})?:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3}))|:))|(([0-9A-Fa-f]{1,4}:){3}(((:[0-9A-Fa-f]{1,4}){1,4})|((:[0-9A-Fa-f]{1,4}){0,2}:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3}))|:))|(([0-9A-Fa-f]{1,4}:){2}(((:[0-9A-Fa-f]{1,4}){1,5})|((:[0-9A-Fa-f]{1,4}){0,3}:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3}))|:))|(([0-9A-Fa-f]{1,4}:){1}(((:[0-9A-Fa-f]{1,4}){1,6})|((:[0-9A-Fa-f]{1,4}){0,4}:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3}))|:))|(:(((:[0-9A-Fa-f]{1,4}){1,7})|((:[0-9A-Fa-f]{1,4}){0,5}:((25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)(\.(25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)){3}))|:)))(%.+)?

IPV4 (?<![0-9])(?:(?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.](?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.](?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.](?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2}))(?![0-9])

IP (?:%{IPV6}|%{IPV4})

HOSTNAME \b(?:[0-9A-Za-z][0-9A-Za-z-]{0,62})(?:\.(?:[0-9A-Za-z][0-9A-Za-z-]{0,62}))*(\.?|\b)

HOST %{HOSTNAME}

IPORHOST (?:%{HOSTNAME}|%{IP})

HOSTPORT (?:%{IPORHOST=~/\./}:%{POSINT})

# paths

PATH (?:%{UNIXPATH}|%{WINPATH})

UNIXPATH (?>/(?>[\w_%!$@:.,-]+|\\.)*)+

TTY (?:/dev/(pts|tty([pq])?)(\w+)?/?(?:[0-9]+))

WINPATH (?>[A-Za-z]+:|\\)(?:\\[^\\?*]*)+

URIPROTO [A-Za-z]+(\+[A-Za-z+]+)?

URIHOST %{IPORHOST}(?::%{POSINT:port})?

# uripath comes loosely from RFC1738, but mostly from what Firefox

# doesn't turn into %XX

URIPATH (?:/[A-Za-z0-9$.+!*'(){},~:;=@#%_\-]*)+

#URIPARAM \?(?:[A-Za-z0-9]+(?:=(?:[^&]*))?(?:&(?:[A-Za-z0-9]+(?:=(?:[^&]*))?)?)*)?

URIPARAM \?[A-Za-z0-9$.+!*'|(){},~@#%&/=:;_?\-\[\]]*

URIPATHPARAM %{URIPATH}(?:%{URIPARAM})?

URI %{URIPROTO}://(?:%{USER}(?::[^@]*)?@)?(?:%{URIHOST})?(?:%{URIPATHPARAM})?

# Months: January, Feb, 3, 03, 12, December

MONTH \b(?:Jan(?:uary)?|Feb(?:ruary)?|Mar(?:ch)?|Apr(?:il)?|May|Jun(?:e)?|Jul(?:y)?|Aug(?:ust)?|Sep(?:tember)?|Oct(?:ober)?|Nov(?:ember)?|Dec(?:ember)?)\b

MONTHNUM (?:0?[1-9]|1[0-2])

MONTHDAY (?:(?:0[1-9])|(?:[12][0-9])|(?:3[01])|[1-9])

# Days: Monday, Tue, Thu, etc…

DAY (?:Mon(?:day)?|Tue(?:sday)?|Wed(?:nesday)?|Thu(?:rsday)?|Fri(?:day)?|Sat(?:urday)?|Sun(?:day)?)

# Years?

YEAR (?>\d\d){1,2}

HOUR (?:2[0123]|[01]?[0-9])

MINUTE (?:[0-5][0-9])

# '60' is a leap second in most time standards and thus is valid.

SECOND (?:(?:[0-5][0-9]|60)(?:[:.,][0-9]+)?)

TIME (?!<[0-9])%{HOUR}:%{MINUTE}(?::%{SECOND})(?![0-9])

# datestamp is YYYY/MM/DD-HH:MM:SS.UUUU (or something like it)

DATE_US %{MONTHNUM}[/-]%{MONTHDAY}[/-]%{YEAR}

DATE_EU %{MONTHDAY}[./-]%{MONTHNUM}[./-]%{YEAR}

ISO8601_TIMEZONE (?:Z|[+-]%{HOUR}(?::?%{MINUTE}))

ISO8601_SECOND (?:%{SECOND}|60)

TIMESTAMP_ISO8601 %{YEAR}-%{MONTHNUM}-%{MONTHDAY}[T ]%{HOUR}:?%{MINUTE}(?::?%{SECOND})?%{ISO8601_TIMEZONE}?

DATE %{DATE_US}|%{DATE_EU}

DATESTAMP %{DATE}[- ]%{TIME}

TZ (?:[PMCE][SD]T|UTC)

DATESTAMP_RFC822 %{DAY} %{MONTH} %{MONTHDAY} %{YEAR} %{TIME} %{TZ}

DATESTAMP_OTHER %{DAY} %{MONTH} %{MONTHDAY} %{TIME} %{TZ} %{YEAR}

# Syslog Dates: Month Day HH:MM:SS

SYSLOGTIMESTAMP %{MONTH} +%{MONTHDAY} %{TIME}

PROG (?:[\w._/%-]+)

SYSLOGPROG %{PROG:program}(?:\[%{POSINT:pid}\])?

SYSLOGHOST %{IPORHOST}

SYSLOGFACILITY <%{NONNEGINT:facility}.%{NONNEGINT:priority}>

HTTPDATE %{MONTHDAY}/%{MONTH}/%{YEAR}:%{TIME} %{INT}

# Shortcuts

QS %{QUOTEDSTRING}

# Log formats

SYSLOGBASE %{SYSLOGTIMESTAMP:timestamp} (?:%{SYSLOGFACILITY} )?%{SYSLOGHOST:logsource} %{SYSLOGPROG}:

COMMONAPACHELOG %{IPORHOST:clientip} %{USER:ident} %{USER:auth} \[%{HTTPDATE:timestamp}\] "(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})" %{NUMBER:response} (?:%{NUMBER:bytes}|-)

COMBINEDAPACHELOG %{COMMONAPACHELOG} %{QS:referrer} %{QS:agent}

# Log Levels

LOGLEVEL ([A-a]lert|ALERT|[T|t]race|TRACE|[D|d]ebug|DEBUG|[N|n]otice|NOTICE|[I|i]nfo|INFO|[W|w]arn?(?:ing)?|WARN?(?:ING)?|[E|e]rr?(?:or)?|ERR?(?:OR)?|[C|c]rit?(?:ical)?|CRIT?(?:ICAL)?|[F|f]atal|FATAL|[S|s]evere|SEVERE|EMERG(?:ENCY)?|[Ee]merg(?:ency)?)
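Many of these definitions are plain regular expressions and can be tried as they are in another regex engine. A short sketch, assuming Python's `re` module, with three definitions copied from the list above; the helper `is_full_match` and the sample values are made up for illustration.

```python
import re

# Definitions copied from the list above; they use only constructs that
# Python's re module also understands.
IPV4 = (r"(?<![0-9])(?:(?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.]"
        r"(?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.]"
        r"(?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2})[.]"
        r"(?:25[0-5]|2[0-4][0-9]|[0-1]?[0-9]{1,2}))(?![0-9])")
COMMONMAC = r"(?:(?:[A-Fa-f0-9]{2}:){5}[A-Fa-f0-9]{2})"
UUID = r"[A-Fa-f0-9]{8}-(?:[A-Fa-f0-9]{4}-){3}[A-Fa-f0-9]{12}"


def is_full_match(pattern, text):
    """True when the whole of `text` matches `pattern`."""
    return re.fullmatch(pattern, text) is not None


print(is_full_match(IPV4, "10.1.1.1"))
print(is_full_match(IPV4, "256.1.1.1"))
print(is_full_match(COMMONMAC, "00:1a:2B:3c:4D:5e"))
print(is_full_match(UUID, "123e4567-e89b-12d3-a456-426614174000"))
```

Definitions that rely on atomic groups, written `(?>...)`, such as BASE10NUM, need Python 3.11 or newer; older versions of `re` reject that syntax.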

