Analysis of 【Dropout】

来源:互联网 发布:进销存数据库设计 编辑:程序博客网 时间:2024/05/01 10:33

原文:https://pgaleone.eu/deep-learning/regularization/2017/01/10/anaysis-of-dropout/

这篇分析dropout的比较好,记录一下。译文在http://www.wtoutiao.com/p/649MGEJ.html

Overfitting is a problem in Deep Neural Networks (DNN): the model learns to classify only the training set, adapting itself to the training examples instead of learning decision boundaries capable of classifying generic instances. Many solutions to the overfitting problem have been presented during these years; one of them have overwhelmed the others due to its simplicity and its empirical good results: Dropout.

Dropout

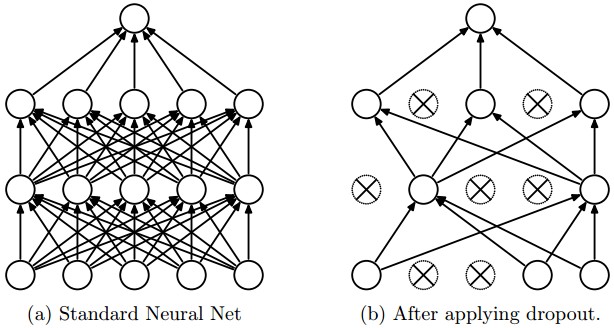

The network on the left it's the same network used at test time, once the parameters have been learned.

The idea behind Dropout is to train an ensemble of DNNs and average the results of the whole ensemble instead of train a single DNN.

The DNNs are built dropping out neurons with

The dropped neurons do not contribute to the training phase in both the forward and backward phases of back-propagation: for this reason every time a single neuron is dropped out it’s like the training phase is done on a new network.

Quoting the authors:

In a standard neural network, the derivative received by each parameter tells it how it should change so the final loss function is reduced, given what all other units are doing. Therefore, units may change in a way that they fix up the mistakes of the other units. This may lead to complex co-adaptations. This in turn leads to overfitting because these co-adaptations do not generalize to unseen data. We hypothesize that for each hidden unit, Dropout prevents co-adaptation by making the presence of other hidden units unreliable. Therefore, a hidden unit cannot rely on other specific units to correct its mistakes.

In short: Dropout works well in practice because it prevents the co-adaption of neurons during the training phase.

Now that we got an intuitive idea behind Dropout, let’s analyze it in depth.

How Dropout works

As said before, Dropout turns off neurons with probability

Every single neuron has the same probability of being turned off. This means that:

Given

h(x)=xW+b a linear projection of adi -dimensional inputx in adh -dimensional output space.a(h) an activation function

it’s possible to model the application of Dropout, in the training phase only, to the given projection as a modified activation function:

Where

A Bernoulli random variable has the following probability mass distribution:

Where

It’s evident that this random variable perfectly models the Dropout application on a single neuron. In fact, the neuron is turned off with probability

It can be useful to see the application of Dropout on a generic i-th neuron:

where

Since during train phase a neuron is kept on with probability

To do this, the authors suggest scaling the activation function by a factor of

Train phase:

Test phase:

Inverted Dropout

A slightly different approach is to use Inverted Dropout. This approach consists in the scaling of the activations during the training phase, leaving the test phase untouched.

The scale factor is the inverse of the keep probability:

Train phase:

Test phase:

Inverted Dropout is how Dropout is implemented in practice in the various deep learning frameworks because it helps to define the model once and just change a parameter (the keep/drop probability) to run train and test on the same model.

Direct Dropout, instead, force you to modify the network during the test phase because if you don’t multiply by

Dropout of a set of neurons

It can be easily noticed that a layer

Thus, the output of the layer

Since every neuron is now modeled as a Bernoulli random variable and all these random variables are independent and identically distributed, the total number of dropped neuron is a random variable too, called Binomial:

Where the probability of getting exactly

This formula can be easily explained:

pk(1−p)n−k is the probability of getting a single sequence ofk successes onn trials and thereforen−k failures.(nk) is the binomial coefficient used to calculate the number of possible sequence of success.

We can now use this distribution to analyze the probability of dropping a specified number of neurons.

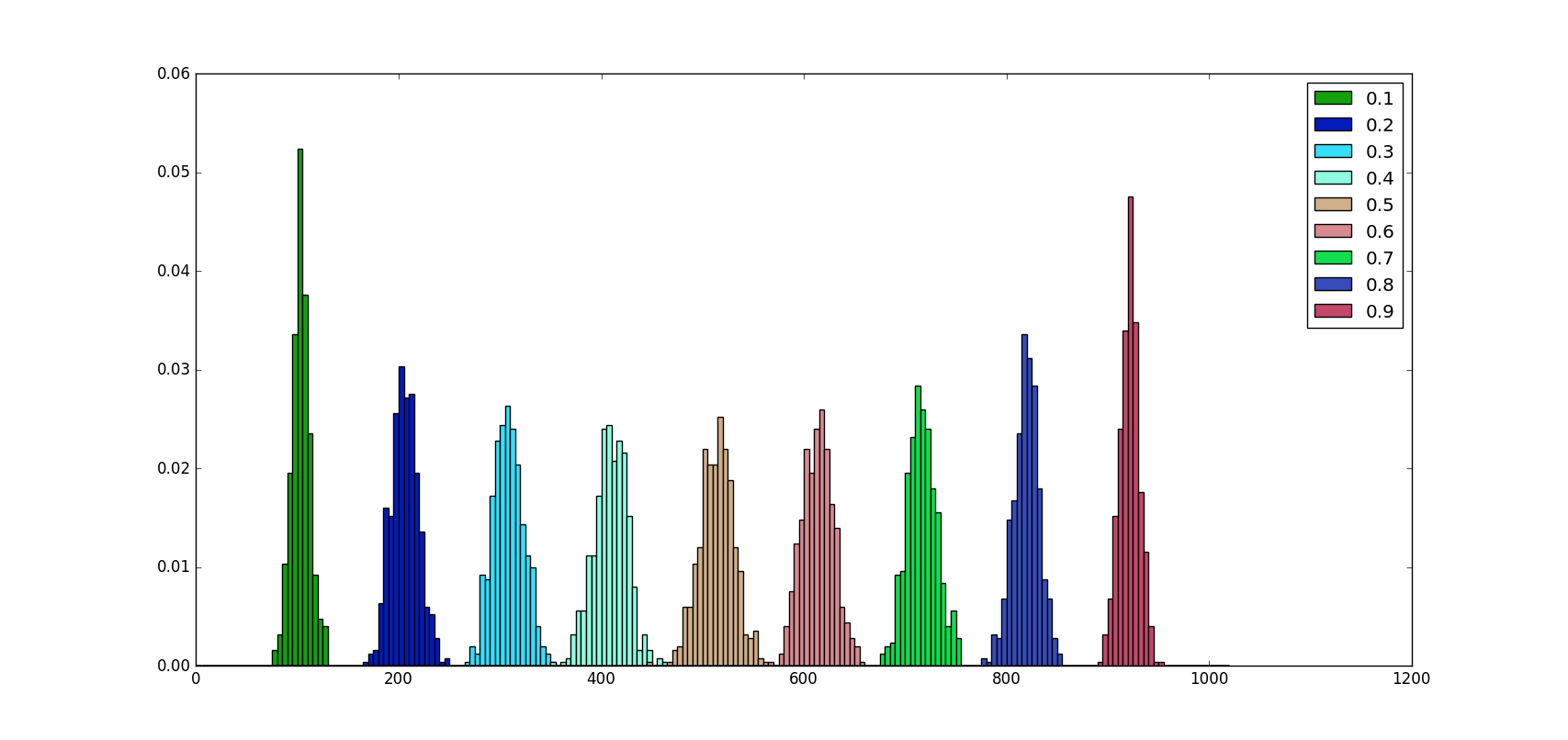

When using Dropout, we define a fixed Dropout probability

For example, if the layer we apply Dropout to has

Thus, the probability of dropping out exactly

A python 3 script can help us to visualize how neurons are dropped for different values of

As we can see from the image above no matter what the

Moreover, we can notice that the distribution of values is almost symmetric around

The scaling factor has been added by the authors to compensate the activation values, because they expect that during the training phase only a percentage of

Dropout & other regularizers

Dropout is often used with L2 normalization and other parameter constraint techniques (such as Max Norm 1), this is not a case. Normalizations help to keep model parameters value low, in this way a parameter can’t grow too much.

In brief, the L2 normalization (for example) is an additional term to the loss, where

It’s easy to understand that this additional term, when doing back-propagation via gradient descent, reduces the update amount. If

Dropout alone, instead, does not have any way to prevent parameter values from becoming too large during this update phase. Moreover, the inverted implementation leads the update steps to become bigger, as showed below.

Inverted Dropout and other regularizers

Since Dropout does not prevent parameters from growing and overwhelming each other, applying L2 regularization (or any other regularization technique that constraints the parameter values) can help.

Making explicit the scaling factor, the previous equation becomes:

It can be easily seen that when using Inverted Dropout, the learning rate is scaled by a factor of

For this reason, from now on we’ll call

The effective learning rate, thus, is higher respect to the learning rate chosen: for this reason normalizations that constrain the parameter values can help to simplify the learning rate selection process.

Summary

- Dropout exists in two versions: direct (not commonly implemented) and inverted

- Dropout on a single neuron can be modeled using a Bernoulli random variable

- Dropout on a set of neurons can be modeled using a Binomial random variable

- Even if the probability of dropping exactly

np neurons is low,np neurons are dropped on average on a layer ofn neurons. - Inverted Dropout boost the learning rate

- Inverted Dropout should be using together with other normalization techniques that constrain the parameter values in order to simplify the learning rate selection procedure

- Dropout helps to prevent overfitting in deep neural networks.

Max Norm impose a constraint to the parameters size. Chosen a value for the hyper-parameter

c it impose the constraint|w|≤c . ↩

- Analysis of 【Dropout】

- A summary of dropout technique

- Dropout

- Dropout

- dropout

- Dropout

- Dropout

- Dropout

- dropout

- Dropout

- Dropout

- analysis of wait_event_interruptible()

- analysis of wake_up_interruptible()

- analysis communication of ucenter

- Infinispan Analysis of Distribution

- Analysis of DefaultConsistentHash.java

- The analysis of suse_register

- Analysis of pNFS

- NDK Java 调用 C代码

- 【HDFS】hadoop2.x HDFS javaAPI

- Android 自定义加载框

- OC当中的深拷贝和浅拷贝

- 圈子金融的weex领悟 - weex-start

- Analysis of 【Dropout】

- java虚拟机

- 【mapreduce】hadoop2.x—mapreduce实战和总结

- Java高并发,如何解决,什么方式解决

- 使用swoole实现生产者消费者模型(2)

- poj1751

- Android ADB命令的使用

- HDU1010 Tempter of the Bone (DFS & 奇偶剪枝)

- 【PAT】1096. Consecutive Factors