[paper] GAN

Source: Internet. Editor: 程序博客网. Time: 2024/05/22 22:04

(NIPS 2014) Generative Adversarial Nets

Paper: https://papers.nips.cc/paper/5423-generative-adversarial-nets

Code: http://www.github.com/goodfeli/adversarial

We propose a new framework for estimating generative models via an adversarial process, in which we simultaneously train two models: a generative model G that captures the data distribution, and a discriminative model D that estimates the probability that a sample came from the training data rather than G.

The training procedure for G is to maximize the probability of D making a mistake.

This framework corresponds to a minimax two-player game.

Introduction

Deep generative models have had less of an impact than discriminative models, owing to the difficulty of approximating the many intractable probabilistic computations that arise in maximum likelihood estimation and related strategies, and to the difficulty of leveraging the benefits of piecewise linear units in the generative context.

We propose a new generative model estimation procedure that sidesteps these difficulties.

The generative model can be thought of as analogous to a team of counterfeiters, trying to produce fake currency and use it without detection, while the discriminative model is analogous to the police, trying to detect the counterfeit currency.

When both G and D are multilayer perceptrons, we can train both models using only the highly successful backpropagation and dropout algorithms [17], and sample from the generative model using only forward propagation. No approximate inference or Markov chains are necessary.
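To make "sampling using only forward propagation" concrete, here is a minimal sketch. It assumes a hypothetical two-layer perceptron generator with ReLU (piecewise linear) hidden units, uniform noise, and random untrained weights; the layer sizes and the names `sample_generator` and `params` are illustrative and not taken from the paper's code.

```python
import random

def relu(v):
    # Piecewise linear activation applied element-wise.
    return [max(0.0, a) for a in v]

def linear(weights, bias, v):
    # Computes W v + b, with W given as a list of rows.
    return [sum(w * a for w, a in zip(row, v)) + b
            for row, b in zip(weights, bias)]

def sample_generator(params, noise_dim, rng):
    """Draw one sample x = G(z) with a single forward pass."""
    z = [rng.uniform(-1.0, 1.0) for _ in range(noise_dim)]  # noise prior (assumed uniform)
    h = relu(linear(params["W1"], params["b1"], z))
    return linear(params["W2"], params["b2"], h)

rng = random.Random(0)
noise_dim, hidden_dim, data_dim = 2, 4, 3  # hypothetical sizes
params = {
    "W1": [[rng.gauss(0, 1) for _ in range(noise_dim)] for _ in range(hidden_dim)],
    "b1": [0.0] * hidden_dim,
    "W2": [[rng.gauss(0, 1) for _ in range(hidden_dim)] for _ in range(data_dim)],
    "b2": [0.0] * data_dim,
}

# No Markov chain and no inference step: each sample is one pass through the net.
samples = [sample_generator(params, noise_dim, rng) for _ in range(5)]
```

Each call draws fresh noise and pushes it through the network once, so the cost of a sample is the cost of one forward pass.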

Related work

Adversarial nets

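D and G play the following two-player minimax game with value function $V(D, G)$:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$$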
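As a rough illustration, the value function (the expected log D(x) on data plus the expected log(1 - D(G(z))) on noise) can be estimated from samples. The sketch below assumes one-dimensional Gaussian data and noise and two hand-written discriminators; `value_estimate`, `D_blind`, and `D_sharp` are hypothetical names chosen for this example.

```python
import math
import random

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def value_estimate(D, G, data, noise):
    """Monte Carlo estimate of E[log D(x)] + E[log(1 - D(G(z)))]."""
    real_term = sum(math.log(D(x)) for x in data) / len(data)
    fake_term = sum(math.log(1.0 - D(G(z))) for z in noise) / len(noise)
    return real_term + fake_term

rng = random.Random(0)
data = [rng.gauss(4.0, 0.5) for _ in range(1000)]   # stand-in for p_data (assumption)
noise = [rng.gauss(0.0, 1.0) for _ in range(1000)]  # stand-in for p_z (assumption)

G = lambda z: z                                # hypothetical generator, far from the data
D_blind = lambda x: 0.5                        # cannot tell real from generated
D_sharp = lambda x: sigmoid(4.0 * (x - 2.0))   # thresholds between the two clusters

v_blind = value_estimate(D_blind, G, data, noise)  # equals -log 4
v_sharp = value_estimate(D_sharp, G, data, noise)
```

A discriminator that always outputs 1/2 scores exactly -log 4, while one that separates the two sample sets scores higher. That is the sense in which D maximizes V and G tries to push it back down.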

Optimizing D to completion in the inner loop of training is computationally prohibitive, and on finite datasets would result in overfitting. Instead, we alternate between k steps of optimizing D and one step of optimizing G.
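The alternating schedule can be sketched on a toy problem. This is not the paper's implementation: it assumes a one-dimensional setup with data drawn from N(4, 1), a hypothetical one-parameter generator G(z) = z + theta, a logistic discriminator D(x) = sigmoid(w x + c), hand-derived gradients, and plain gradient steps with an arbitrary learning rate.

```python
import math
import random

def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def train_toy_gan(steps=500, k=1, batch=64, lr=0.05, seed=0):
    rng = random.Random(seed)
    w, c = 0.0, 0.0   # discriminator D(x) = sigmoid(w * x + c)
    theta = 0.0       # generator G(z) = z + theta (hypothetical toy model)
    d_updates = g_updates = 0

    for _ in range(steps):
        # k steps of optimizing D: ascend mean[log D(x)] + mean[log(1 - D(G(z)))].
        for _ in range(k):
            xs = [rng.gauss(4.0, 1.0) for _ in range(batch)]          # data minibatch
            gs = [rng.gauss(0.0, 1.0) + theta for _ in range(batch)]  # generated minibatch
            d_real = [sigmoid(w * x + c) for x in xs]
            d_fake = [sigmoid(w * g + c) for g in gs]
            grad_w = (sum((1 - d) * x for d, x in zip(d_real, xs))
                      - sum(d * g for d, g in zip(d_fake, gs))) / batch
            grad_c = (sum(1 - d for d in d_real) - sum(d_fake)) / batch
            w += lr * grad_w
            c += lr * grad_c
            d_updates += 1

        # One step of optimizing G: descend mean[log(1 - D(G(z)))].
        gs = [rng.gauss(0.0, 1.0) + theta for _ in range(batch)]
        d_fake = [sigmoid(w * g + c) for g in gs]
        grad_theta = sum(-d * w for d in d_fake) / batch
        theta -= lr * grad_theta
        g_updates += 1

    return {"w": w, "c": c, "theta": theta,
            "d_updates": d_updates, "g_updates": g_updates}

result = train_toy_gan()
```

With `k=1` the two players take turns one step at a time; raising `k` keeps D closer to its optimum for the current G at the cost of more discriminator updates per generator update. In this toy setup `theta` is pushed away from 0 toward the data mean, since the discriminator's slope `w` is positive while generated samples sit below the data.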

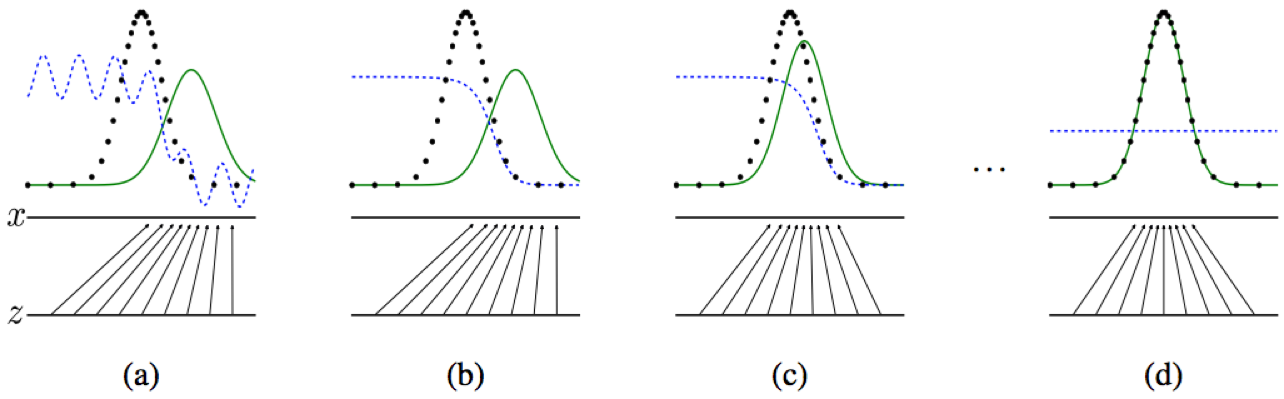

Figure 1: Generative adversarial nets are trained by simultaneously updating the discriminative distribution (D, blue, dashed line) so that it discriminates between samples from the data generating distribution $p_{\text{data}}$ (black, dotted line) and those of the generative distribution $p_g$ (G, green, solid line). The lower horizontal line is the domain from which $z$ is sampled, in this case uniformly. The horizontal line above is part of the domain of $x$. The upward arrows show how the mapping $x = G(z)$ imposes the non-uniform distribution $p_g$ on transformed samples. G contracts in regions of high density and expands in regions of low density of $p_g$.

(a) Poorly fit model

(b) After updating D

(c) After updating G

(d) Mixed strategy equilibrium

Theoretical Results

Global Optimality of $p_g = p_{\text{data}}$
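A brief outline of the argument behind this result, following the paper's reasoning and written with the value function $V(D, G)$; the intermediate steps are condensed here. For a fixed G, the value function is an integral over $x$:

$$V(D, G) = \int_x \left[ p_{\text{data}}(x) \log D(x) + p_g(x) \log(1 - D(x)) \right] dx$$

For any $(a, b) \neq (0, 0)$ with $a, b \geq 0$, the function $y \mapsto a \log y + b \log(1 - y)$ attains its maximum on $[0, 1]$ at $y = a / (a + b)$, so the optimal discriminator is

$$D^*_G(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$$

Substituting $D^*_G$ back in gives the generator's criterion:

$$C(G) = \max_D V(D, G) = -\log 4 + \mathrm{KL}\!\left(p_{\text{data}} \,\Big\|\, \frac{p_{\text{data}} + p_g}{2}\right) + \mathrm{KL}\!\left(p_g \,\Big\|\, \frac{p_{\text{data}} + p_g}{2}\right) = -\log 4 + 2\,\mathrm{JSD}(p_{\text{data}} \,\|\, p_g)$$

The Jensen-Shannon divergence is non-negative and zero only when the two distributions are equal, so $C(G)$ reaches its global minimum $-\log 4$ exactly when $p_g = p_{\text{data}}$, at which point $D^*_G(x) = 1/2$ everywhere.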

Convergence of Algorithm 1

Experiments

We trained adversarial nets on a range of datasets including MNIST [23], the Toronto Face Database (TFD) [28], and CIFAR-10 [21].

Advantages and disadvantages

Conclusions and future work
