10 Minutes to pandas

Source: Internet. Editor: 程序博客网. Time: 2024/06/06 04:28

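I have been looking into pandas recently. Until now I had always used SQL for data processing, but for small offline datasets pandas is concise and practical, and it makes visualization easy. This is a translation of "10 Minutes to pandas" from the official pandas website. First, import the required packages: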

    import pandas as pd
    import numpy as np
    import matplotlib.pyplot as plt

Object Creation

See the Data Structure Intro section.

- Create a Series by passing a list; pandas creates a default integer index:

    s = pd.Series([1, 3, 5, np.nan, 6, 8])
    # Out[5]:
    # 0    1.0
    # 1    3.0
    # 2    5.0
    # 3    NaN
    # 4    6.0
    # 5    8.0
    # dtype: float64

- Create a DataFrame by passing a NumPy array, with a datetime index and labeled columns:

    dates = pd.date_range('20130101', periods=6)
    # DatetimeIndex(['2013-01-01', '2013-01-02', '2013-01-03', '2013-01-04',
    #                '2013-01-05', '2013-01-06'],
    #               dtype='datetime64[ns]', freq='D')

    df = pd.DataFrame(np.random.randn(6, 4), index=dates, columns=list('ABCD'))
    #                    A         B         C         D
    # 2013-01-01  0.469112 -0.282863 -1.509059 -1.135632
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860
    # 2013-01-05 -0.424972  0.567020  0.276232 -1.087401
    # 2013-01-06 -0.673690  0.113648 -1.478427  0.524988

- Create a DataFrame by passing a dict of objects that can be converted to series-like structures:

    df2 = pd.DataFrame({'A': 1.,
                        'B': pd.Timestamp('20130102'),
                        'C': pd.Series(1, index=list(range(4)), dtype='float32'),
                        'D': np.array([3] * 4, dtype='int32'),
                        'E': pd.Categorical(["test", "train", "test", "train"]),
                        'F': 'foo'})
    #      A          B    C  D      E    F
    # 0  1.0 2013-01-02  1.0  3   test  foo
    # 1  1.0 2013-01-02  1.0  3  train  foo
    # 2  1.0 2013-01-02  1.0  3   test  foo
    # 3  1.0 2013-01-02  1.0  3  train  foo

- The columns of the resulting DataFrame have different dtypes:

    df2.dtypes
    # A           float64
    # B    datetime64[ns]
    # C           float32
    # D             int32
    # E          category
    # F            object
    # dtype: object

- If you are using IPython, tab completion recognizes the column names as well as the public attributes:

    df2.<TAB>
    # df2.A    df2.bool
    # ...

Viewing Data

See the Basics section.

- View the top and bottom rows of the frame:

    df.head()  # top rows; 5 by default, pass a number to change it
    #                    A         B         C         D
    # 2013-01-01  0.469112 -0.282863 -1.509059 -1.135632
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860
    # 2013-01-05 -0.424972  0.567020  0.276232 -1.087401

    df.tail(3)  # bottom rows
    #                    A         B         C         D
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860
    # 2013-01-05 -0.424972  0.567020  0.276232 -1.087401
    # 2013-01-06 -0.673690  0.113648 -1.478427  0.524988

- Display the index, the columns, and the underlying NumPy data:

    df.index    # the index
    # [2013-01-01, ..., 2013-01-06]
    df.columns  # the columns
    # Index(['A', 'B', 'C', 'D'], dtype='object')
    df.values   # the underlying data
    # array([[ 0.4691, -0.2829, -1.5091, -1.1356],...])

- describe() shows a quick statistical summary of the data:

    df.describe()
    #               A         B         C         D
    # count  6.000000  6.000000  6.000000  6.000000
    # mean   0.073711 -0.431125 -0.687758 -0.233103
    # std    0.843157  0.922818  0.779887  0.973118
    # min   -0.861849 -2.104569 -1.509059 -1.135632
    # 25%   -0.611510 -0.600794 -1.368714 -1.076610
    # 50%    0.022070 -0.228039 -0.767252 -0.386188
    # 75%    0.658444  0.041933 -0.034326  0.461706
    # max    1.212112  0.567020  0.276232  1.071804

- Transpose the data:

    df.T

- Sort by an axis:

    df.sort_index(axis=1, ascending=False)  # sorts by column name, i.e. reorders the columns

- Sort by values:

    df.sort_values(by='B')  # sort in ascending order of the values in column B

Selection

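Although the standard Python/NumPy expressions for selecting and setting come in handy, for production code we recommend the optimized pandas data access methods: .at, .iat, .loc and .iloc. (The original guide also listed .ix, which was later deprecated and removed in pandas 1.0.)

For details, see Indexing and Selecting Data and MultiIndex / Advanced Indexing.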
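The four accessors differ in whether they look up by label or by integer position, and in whether they accept only scalars. The following is a minimal sketch on a hypothetical three-row frame (the data and variable names are illustrative assumptions, not part of the original walkthrough):

```python
import numpy as np
import pandas as pd

# Hypothetical toy frame: values 0..5 laid out in 3 rows and 2 columns.
dates = pd.date_range("2013-01-01", periods=3)
df = pd.DataFrame(np.arange(6).reshape(3, 2), index=dates, columns=["A", "B"])

by_label = df.loc[dates[1], "B"]      # label-based lookup
by_position = df.iloc[1, 1]           # integer-position lookup
fast_label = df.at[dates[1], "B"]     # scalar-only, label-based
fast_position = df.iat[1, 1]          # scalar-only, position-based

print(by_label, by_position, fast_label, fast_position)
```

All four expressions address the same cell here, so they return the same value; .at and .iat are restricted to single cells, while .loc and .iloc also accept slices and lists.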

Getting

- Select a single column, which yields a Series; equivalent to df.A:

    df['A']

- Select via [], which slices the rows:

    df[0:3]
    #                    A         B         C         D
    # 2013-01-01  0.469112 -0.282863 -1.509059 -1.135632
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804

    df['20130102':'20130104']
    #                    A         B         C         D
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860

Selection by Label

See Selection by Label.

- Get a cross section using a label:

    df.loc[dates[0]]
    # A    0.469112
    # B   -0.282863
    # C   -1.509059
    # D   -1.135632
    # Name: 2013-01-01 00:00:00, dtype: float64

- Select on multiple axes by label:

    df.loc[:, ['A', 'B']]
    #                    A         B
    # 2013-01-01  0.469112 -0.282863
    # 2013-01-02  1.212112 -0.173215
    # 2013-01-03 -0.861849 -2.104569
    # 2013-01-04  0.721555 -0.706771
    # 2013-01-05 -0.424972  0.567020
    # 2013-01-06 -0.673690  0.113648

- Slice by label (both endpoints are included):

    df.loc['20130102':'20130104', ['A', 'B']]
    # Out[28]:
    #                    A         B
    # 2013-01-02  1.212112 -0.173215
    # 2013-01-03 -0.861849 -2.104569
    # 2013-01-04  0.721555 -0.706771

- Reduce the dimensions of the returned object:

    df.loc['20130102', ['A', 'B']]
    # Out[29]:
    # A    1.212112
    # B   -0.173215
    # Name: 2013-01-02 00:00:00, dtype: float64

- Get a scalar value:

    df.loc[dates[0], 'A']
    # Out[30]: 0.46911229990718628

- Get fast access to a scalar (equivalent to the previous method):

    df.at[dates[0], 'A']
    # Out[31]: 0.46911229990718628

Selection by Position

See Selection by Position.
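Positional slices follow Python's half-open convention, whereas label slices with .loc include the end label. A minimal sketch of the difference, using a hypothetical Series with string labels (an illustrative assumption, not data from the guide):

```python
import pandas as pd

# Hypothetical Series with four labeled values.
s = pd.Series([10, 20, 30, 40], index=["a", "b", "c", "d"])

by_position = s.iloc[1:3]     # positions 1 and 2; position 3 is excluded
by_label = s.loc["b":"d"]     # labels "b" through "d"; "d" is included

print(by_position.tolist())
print(by_label.tolist())
```

Keeping this distinction in mind helps avoid off-by-one surprises when switching between .iloc and .loc.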

- Select by position by passing an integer (this selects a row):

    df.iloc[3]
    # Out[32]:
    # A    0.721555
    # B   -0.706771
    # C   -1.039575
    # D    0.271860
    # Name: 2013-01-04 00:00:00, dtype: float64

- Slice by integers, which behaves like NumPy/Python slicing:

    df.iloc[3:5, 0:2]
    # Out[33]:
    #                    A         B
    # 2013-01-04  0.721555 -0.706771
    # 2013-01-05 -0.424972  0.567020

- Pass lists of integer positions, which behaves like NumPy/Python indexing:

    df.iloc[[1, 2, 4], [0, 2]]
    # Out[34]:
    #                    A         C
    # 2013-01-02  1.212112  0.119209
    # 2013-01-03 -0.861849 -0.494929
    # 2013-01-05 -0.424972  0.276232

- Slice rows explicitly:

    df.iloc[1:3, :]  # rows 1 and 2
    # Out[35]:
    #                    A         B         C         D
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804

- Slice columns explicitly:

    df.iloc[:, 1:3]  # columns 1 and 2
    # Out[36]:
    #                    B         C
    # 2013-01-01 -0.282863 -1.509059
    # 2013-01-02 -0.173215  0.119209
    # 2013-01-03 -2.104569 -0.494929
    # 2013-01-04 -0.706771 -1.039575
    # 2013-01-05  0.567020  0.276232
    # 2013-01-06  0.113648 -1.478427

- Get a specific value explicitly:

    df.iloc[1, 1]
    # Out[37]: -0.17321464905330858

    # For getting fast access to a scalar
    df.iat[1, 1]
    # Out[38]: -0.17321464905330858

Boolean Indexing

- Use the values of a single column to select data:

    df[df.A > 0]
    #                    A         B         C         D
    # 2013-01-01  0.469112 -0.282863 -1.509059 -1.135632
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860

- Select the values of a DataFrame where a boolean condition is met:

    df[df > 0]
    #                    A         B         C         D
    # 2013-01-01  0.469112       NaN       NaN       NaN
    # 2013-01-02  1.212112       NaN  0.119209       NaN
    # 2013-01-03       NaN       NaN       NaN  1.071804
    # 2013-01-04  0.721555       NaN       NaN  0.271860
    # 2013-01-05       NaN  0.567020  0.276232       NaN
    # 2013-01-06       NaN  0.113648       NaN  0.524988

- Use the isin() method for filtering:

    df2 = df.copy()
    df2['E'] = ['one', 'one', 'two', 'three', 'four', 'three']
    #                    A         B         C         D      E
    # 2013-01-01  0.469112 -0.282863 -1.509059 -1.135632    one
    # 2013-01-02  1.212112 -0.173215  0.119209 -1.044236    one
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804    two
    # 2013-01-04  0.721555 -0.706771 -1.039575  0.271860  three
    # 2013-01-05 -0.424972  0.567020  0.276232 -1.087401   four
    # 2013-01-06 -0.673690  0.113648 -1.478427  0.524988  three

    df2[df2['E'].isin(['two', 'four'])]
    #                    A         B         C         D     E
    # 2013-01-03 -0.861849 -2.104569 -0.494929  1.071804   two
    # 2013-01-05 -0.424972  0.567020  0.276232 -1.087401  four

Setting

- Set a new column; the data is aligned by index automatically:

    s1 = pd.Series([1, 2, 3, 4, 5, 6], index=pd.date_range('20130102', periods=6))
    # 2013-01-02    1
    # 2013-01-03    2
    # 2013-01-04    3
    # 2013-01-05    4
    # 2013-01-06    5
    # 2013-01-07    6
    # Freq: D, dtype: int64

    df['F'] = s1

- Set values by label:

    df.at[dates[0], 'A'] = 0

- Set values by position:

    df.iat[0, 1] = 0

- Set a group of values by assigning a NumPy array:

    df.loc[:, 'D'] = np.array([5] * len(df))

The result of the preceding setting operations:

    #                    A         B         C  D    F
    # 2013-01-01  0.000000  0.000000 -1.509059  5  NaN
    # 2013-01-02  1.212112 -0.173215  0.119209  5  1.0
    # 2013-01-03 -0.861849 -2.104569 -0.494929  5  2.0
    # 2013-01-04  0.721555 -0.706771 -1.039575  5  3.0
    # 2013-01-05 -0.424972  0.567020  0.276232  5  4.0
    # 2013-01-06 -0.673690  0.113648 -1.478427  5  5.0

- Set new values with a where operation:

    df2 = df.copy()
    df2[df2 > 0] = -df2
    #                    A         B         C  D    F
    # 2013-01-01  0.000000  0.000000 -1.509059 -5  NaN
    # 2013-01-02 -1.212112 -0.173215 -0.119209 -5 -1.0
    # 2013-01-03 -0.861849 -2.104569 -0.494929 -5 -2.0
    # 2013-01-04 -0.721555 -0.706771 -1.039575 -5 -3.0
    # 2013-01-05 -0.424972 -0.567020 -0.276232 -5 -4.0
    # 2013-01-06 -0.673690 -0.113648 -1.478427 -5 -5.0

Missing Data

pandas primarily uses np.nan to represent missing data. By default, missing values are not included in computations. See the Missing Data section for details.
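What "not included in computations" means can be seen on a small example. The sketch below uses a hypothetical three-element Series (illustrative data) and compares the default behavior with skipna=False:

```python
import numpy as np
import pandas as pd

# Hypothetical Series with one missing value.
s = pd.Series([1.0, np.nan, 3.0])

mean_default = s.mean()              # NaN is skipped: (1 + 3) / 2
mean_strict = s.mean(skipna=False)   # NaN propagates into the result
count_non_missing = s.count()        # counts only non-missing values

print(mean_default, mean_strict, count_non_missing)
```

The default mean is taken over the two present values only, while skipna=False makes a single missing value turn the whole result into NaN.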

- reindex() lets you change, add, or delete the index on a specified axis. It returns a copy of the original data:

    df1 = df.reindex(index=dates[0:4], columns=list(df.columns) + ['E'])
    df1.loc[dates[0]:dates[1], 'E'] = 1
    #                    A         B         C  D    F    E
    # 2013-01-01  0.000000  0.000000 -1.509059  5  NaN  1.0
    # 2013-01-02  1.212112 -0.173215  0.119209  5  1.0  1.0
    # 2013-01-03 -0.861849 -2.104569 -0.494929  5  2.0  NaN
    # 2013-01-04  0.721555 -0.706771 -1.039575  5  3.0  NaN

- Drop any rows that contain missing data:

    df1.dropna(how='any')
    #                    A         B         C  D    F    E
    # 2013-01-02  1.212112 -0.173215  0.119209  5  1.0  1.0

- Fill in missing data:

    df1.fillna(value=5)

- Get a boolean mask that marks where values are NaN:

    pd.isnull(df1)
    #                 A      B      C      D      F      E
    # 2013-01-01  False  False  False  False   True  False
    # 2013-01-02  False  False  False  False  False  False
    # 2013-01-03  False  False  False  False  False   True
    # 2013-01-04  False  False  False  False  False   True

Operations

See the Basic section on Binary Ops.

Stats

- Perform a descriptive statistic (by default it is computed column by column):

    df.mean()  # mean of each column

- Perform the same operation on the other axis:

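    df.mean(axis=1)

- Operate on objects that have different dimensionality and need alignment. pandas automatically broadcasts along the specified dimension: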

    s = pd.Series([1, 3, 5, np.nan, 6, 8], index=dates).shift(2)
    # Out[64]:
    # 2013-01-01    NaN
    # 2013-01-02    NaN
    # 2013-01-03    1.0
    # 2013-01-04    3.0
    # 2013-01-05    5.0
    # 2013-01-06    NaN
    # Freq: D, dtype: float64

    df.sub(s, axis='index')
    #                    A         B         C    D    F
    # 2013-01-01       NaN       NaN       NaN  NaN  NaN
    # 2013-01-02       NaN       NaN       NaN  NaN  NaN
    # 2013-01-03 -1.861849 -3.104569 -1.494929  4.0  1.0
    # 2013-01-04 -2.278445 -3.706771 -4.039575  2.0  0.0
    # 2013-01-05 -5.424972 -4.432980 -4.723768  0.0 -1.0
    # 2013-01-06       NaN       NaN       NaN  NaN  NaN

Apply

- Apply functions to the data:

    df.apply(np.cumsum)  # apply NumPy's cumulative sum to each column
    #                    A         B         C   D     F
    # 2013-01-01  0.000000  0.000000 -1.509059   5   NaN
    # 2013-01-02  1.212112 -0.173215 -1.389850  10   1.0
    # 2013-01-03  0.350263 -2.277784 -1.884779  15   3.0
    # 2013-01-04  1.071818 -2.984555 -2.924354  20   6.0
    # 2013-01-05  0.646846 -2.417535 -2.648122  25  10.0
    # 2013-01-06 -0.026844 -2.303886 -4.126549  30  15.0

    df.apply(lambda x: x.max() - x.min())
    # A    2.073961
    # B    2.671590
    # C    1.785291
    # D    0.000000
    # F    4.000000
    # dtype: float64

Histogramming

See Histogramming and Discretization.

    s = pd.Series(np.random.randint(0, 7, size=10))
    # 0    4
    # 1    2
    # 2    1
    # 3    2
    # 4    6
    # 5    4
    # 6    4
    # 7    6
    # 8    4
    # 9    4
    # dtype: int64

    s.value_counts()
    # 4    5
    # 6    2
    # 2    2
    # 1    1
    # dtype: int64

String Methods

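A Series is equipped with a set of string processing methods in its str attribute that make it easy to operate on each element of the array, as in the code snippet below. See Vectorized String Methods for more.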
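A minimal sketch of the element-wise behavior, using a hypothetical Series (illustrative data): each method under str is applied to every element, and missing values stay missing instead of raising an error.

```python
import numpy as np
import pandas as pd

# Hypothetical Series of strings with one missing entry.
names = pd.Series(["Apple", "banana", np.nan])

upper = names.str.upper()   # upper-case every string
lengths = names.str.len()   # length of every string

print(upper)
print(lengths)
```

Because the missing entry is passed through, these methods can be chained on real-world columns without filtering out the gaps first.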

    s = pd.Series(['A', 'B', 'C', 'Aaba', 'Baca', np.nan, 'CABA', 'dog', 'cat'])
    s.str.lower()
    # 0       a
    # 1       b
    # 2       c
    # 3    aaba
    # 4    baca
    # 5     NaN
    # 6    caba
    # 7     dog
    # 8     cat
    # dtype: object

Merge

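pandas provides various facilities for easily combining Series and DataFrame objects (and, in older releases, Panel objects) with various kinds of set logic for the indexes. See the Merging section.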
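One piece of that set logic is how the indexes of the inputs are combined. The sketch below uses two hypothetical Series with partly overlapping labels (illustrative data) and pd.concat with join="outer" (union of labels) versus join="inner" (intersection of labels):

```python
import pandas as pd

# Hypothetical Series whose indexes overlap only on "b" and "c".
left = pd.Series([1, 2, 3], index=["a", "b", "c"], name="left")
right = pd.Series([10, 20, 30], index=["b", "c", "d"], name="right")

outer = pd.concat([left, right], axis=1, join="outer")  # union of the labels
inner = pd.concat([left, right], axis=1, join="inner")  # intersection of the labels

print(outer)
print(inner)
```

With the outer join, labels present on only one side are kept and the other column is filled with NaN; with the inner join, only the shared labels survive.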

Concat

Concatenate pandas objects with concat():

    df = pd.DataFrame(np.random.randn(10, 4))
    #           0         1         2         3
    # 0 -0.548702  1.467327 -1.015962 -0.483075
    # 1  1.637550 -1.217659 -0.291519 -1.745505
    # 2 -0.263952  0.991460 -0.919069  0.266046
    # 3 -0.709661  1.669052  1.037882 -1.705775
    # 4 -0.919854 -0.042379  1.247642 -0.009920
    # 5  0.290213  0.495767  0.362949  1.548106
    # 6 -1.131345 -0.089329  0.337863 -0.945867
    # 7 -0.932132  1.956030  0.017587 -0.016692
    # 8 -0.575247  0.254161 -1.143704  0.215897
    # 9  1.193555 -0.077118 -0.408530 -0.862495

    # break it into pieces
    pieces = [df[:3], df[3:7], df[7:]]
    pd.concat(pieces)
    #           0         1         2         3
    # 0 -0.548702  1.467327 -1.015962 -0.483075
    # 1  1.637550 -1.217659 -0.291519 -1.745505
    # 2 -0.263952  0.991460 -0.919069  0.266046
    # 3 -0.709661  1.669052  1.037882 -1.705775
    # 4 -0.919854 -0.042379  1.247642 -0.009920
    # 5  0.290213  0.495767  0.362949  1.548106
    # 6 -1.131345 -0.089329  0.337863 -0.945867
    # 7 -0.932132  1.956030  0.017587 -0.016692
    # 8 -0.575247  0.254161 -1.143704  0.215897
    # 9  1.193555 -0.077118 -0.408530 -0.862495

Join

Join performs SQL-style merges. See Database style joining.

    left = pd.DataFrame({'key': ['foo', 'foo'], 'lval': [1, 2]})
    right = pd.DataFrame({'key': ['foo', 'foo'], 'rval': [4, 5]})
    pd.merge(left, right, on='key')
    # Out[81]:
    #    key  lval  rval
    # 0  foo     1     4
    # 1  foo     1     5
    # 2  foo     2     4
    # 3  foo     2     5

Append

Append a row to a DataFrame. See Appending:

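    df = pd.DataFrame(np.random.randn(8, 4), columns=['A', 'B', 'C', 'D'])
    s = df.iloc[3]
    # The original guide used df.append(s, ignore_index=True).
    # DataFrame.append was removed in pandas 2.0; pd.concat gives the same result.
    pd.concat([df, s.to_frame().T], ignore_index=True)

Grouping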

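By "group by" we mean a process involving one or more of the following steps:

(Splitting) dividing the data into groups based on some criteria;

(Applying) applying a function to each group independently;

(Combining) combining the results into a single data structure.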
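The three steps above can be spelled out by hand and compared with a single groupby call. This is a minimal sketch on a hypothetical two-column frame; the column names key and value are illustrative assumptions:

```python
import pandas as pd

# Hypothetical toy frame with two groups, "a" and "b".
df = pd.DataFrame({"key": ["a", "b", "a", "b"], "value": [1, 2, 3, 4]})

# Splitting: one sub-frame per distinct key.
groups = {key: part for key, part in df.groupby("key")}

# Applying: run a function on each group separately.
sums = {key: part["value"].sum() for key, part in groups.items()}

# Combining: collect the per-group results into one Series.
manual = pd.Series(sums, name="value")

# groupby performs the same three steps in a single expression.
direct = df.groupby("key")["value"].sum()

print(manual)
print(direct)
```

In practice the single groupby expression is preferable; the manual version is only meant to make the split, apply, and combine stages visible.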

    df = pd.DataFrame({'A': ['foo', 'bar', 'foo', 'bar', 'foo', 'bar', 'foo', 'foo'],
                       'B': ['one', 'one', 'two', 'three', 'two', 'two', 'one', 'three'],
                       'C': np.random.randn(8),
                       'D': np.random.randn(8)})

- Group by multiple columns, which forms a hierarchical index, and then apply sum() to each group:

    # Grouping by both 'A' and 'B' is what produces the two-level index shown below.
    df.groupby(['A', 'B']).sum()
    #                   C         D
    # A   B
    # bar one   -1.814470  2.395985
    #     three -0.595447  0.166599
    #     two   -0.392670 -0.136473
    # foo one   -1.195665 -0.616981
    #     three  1.928123 -1.623033
    #     two    2.414034  1.600434

Reshaping

See the sections on Hierarchical Indexing and Reshaping.

Stack

The stack() method "compresses" a level in the DataFrame's columns.

    tuples = list(zip(*[['bar', 'bar', 'baz', 'baz', 'foo', 'foo', 'qux', 'qux'],
                        ['one', 'two', 'one', 'two', 'one', 'two', 'one', 'two']]))
    index = pd.MultiIndex.from_tuples(tuples, names=['first', 'second'])
    df = pd.DataFrame(np.random.randn(8, 2), index=index, columns=['A', 'B'])
    df2 = df[:4]
    #                      A         B
    # first second
    # bar   one     0.029399 -0.542108
    #       two     0.282696 -0.087302
    # baz   one    -1.575170  1.771208
    #       two     0.816482  1.100230

    stacked = df2.stack()
    # first  second
    # bar    one     A    0.029399
    #                B   -0.542108
    #        two     A    0.282696
    #                B   -0.087302
    # baz    one     A   -1.575170
    #                B    1.771208
    #        two     A    0.816482
    #                B    1.100230
    # dtype: float64

stack() and unstack() are inverse operations. By default, unstack() unstacks the last level:

    stacked.unstack()
    #                      A         B
    # first second
    # bar   one     0.029399 -0.542108
    #       two     0.282696 -0.087302
    # baz   one    -1.575170  1.771208
    #       two     0.816482  1.100230

    stacked.unstack(1)
    # second        one       two
    # first
    # bar   A  0.029399  0.282696
    #       B -0.542108 -0.087302
    # baz   A -1.575170  0.816482
    #       B  1.771208  1.100230

    stacked.unstack(0)
    # first          bar       baz
    # second
    # one    A  0.029399 -1.575170
    #        B -0.542108  1.771208
    # two    A  0.282696  0.816482
    #        B -0.087302  1.100230

Pivot Tables

See Pivot Tables.

    df = pd.DataFrame({'A': ['one', 'one', 'two', 'three'] * 3,
                       'B': ['A', 'B', 'C'] * 4,
                       'C': ['foo', 'foo', 'foo', 'bar', 'bar', 'bar'] * 2,
                       'D': np.random.randn(12),
                       'E': np.random.randn(12)})
    #         A  B    C         D         E
    # 0     one  A  foo  1.418757 -0.179666
    # 1     one  B  foo -1.879024  1.291836
    # 2     two  C  foo  0.536826 -0.009614
    # 3   three  A  bar  1.006160  0.392149
    # 4     one  B  bar -0.029716  0.264599
    # 5     one  C  bar -1.146178 -0.057409
    # 6     two  A  foo  0.100900 -1.425638
    # 7   three  B  foo -1.035018  1.024098
    # 8     one  C  foo  0.314665 -0.106062
    # 9     one  A  bar -0.773723  1.824375
    # 10    two  B  bar -1.170653  0.595974
    # 11  three  C  bar  0.648740  1.167115

Produce a pivot table from this data:

    pd.pivot_table(df, values='D', index=['A', 'B'], columns=['C'])
    # Out[107]:
    # C             bar       foo
    # A     B
    # one   A -0.773723  1.418757
    #       B -0.029716 -1.879024
    #       C -1.146178  0.314665
    # three A  1.006160       NaN
    #       B       NaN -1.035018
    #       C  0.648740       NaN
    # two   A       NaN  0.100900
    #       B -1.170653       NaN
    #       C       NaN  0.536826

Time Series

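pandas has simple, powerful, and efficient functionality for resampling during frequency conversion (for example, converting data sampled every second into 5-minute data). This is extremely common in financial applications, among others. See the Time Series section.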
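A minimal sketch of such a downsampling step, on hypothetical data chosen so the result is easy to check by hand: ten minutes of once-per-second observations, each equal to 1, summed into 5-minute bins. The frequency is passed as a pd.Timedelta rather than a string alias purely as an illustrative choice.

```python
import numpy as np
import pandas as pd

# Ten minutes of one-per-second observations, all equal to 1 (toy data).
index = pd.date_range("2012-01-01", periods=600, freq=pd.Timedelta(seconds=1))
ts = pd.Series(np.ones(len(index)), index=index)

# Downsample: each 5-minute bin collects 300 one-second observations.
five_minute_totals = ts.resample("5min").sum()

print(five_minute_totals)
```

Each of the two resulting bins holds the sum of the 300 seconds that fall into it; replacing sum() with mean() or max() changes only the aggregation, not the binning.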

    rng = pd.date_range('1/1/2012', periods=100, freq='S')
    ts = pd.Series(np.random.randint(0, 500, len(rng)), index=rng)
    ts.resample('5Min').sum()
    # 2012-01-01    25083
    # Freq: 5T, dtype: int64

- Time zone representation:

    rng = pd.date_range('3/6/2012 00:00', periods=5, freq='D')
    ts = pd.Series(np.random.randn(len(rng)), rng)
    # 2012-03-06    0.464000
    # 2012-03-07    0.227371
    # 2012-03-08   -0.496922
    # 2012-03-09    0.306389
    # 2012-03-10   -2.290613
    # Freq: D, dtype: float64

    ts_utc = ts.tz_localize('UTC')
    # 2012-03-06 00:00:00+00:00    0.464000
    # 2012-03-07 00:00:00+00:00    0.227371
    # 2012-03-08 00:00:00+00:00   -0.496922
    # 2012-03-09 00:00:00+00:00    0.306389
    # 2012-03-10 00:00:00+00:00   -2.290613
    # Freq: D, dtype: float64

- Convert to another time zone:

    ts_utc.tz_convert('US/Eastern')
    # Out[116]:
    # 2012-03-05 19:00:00-05:00    0.464000
    # 2012-03-06 19:00:00-05:00    0.227371
    # 2012-03-07 19:00:00-05:00   -0.496922
    # 2012-03-08 19:00:00-05:00    0.306389
    # 2012-03-09 19:00:00-05:00   -2.290613
    # Freq: D, dtype: float64

- Convert between time span representations:

    rng = pd.date_range('1/1/2012', periods=5, freq='M')
    ts = pd.Series(np.random.randn(len(rng)), index=rng)
    # 2012-01-31   -1.134623
    # 2012-02-29   -1.561819
    # 2012-03-31   -0.260838
    # 2012-04-30    0.281957
    # 2012-05-31    1.523962
    # Freq: M, dtype: float64

    ps = ts.to_period()
    # 2012-01   -1.134623
    # 2012-02   -1.561819
    # 2012-03   -0.260838
    # 2012-04    0.281957
    # 2012-05    1.523962
    # Freq: M, dtype: float64

    ps.to_timestamp()
    # 2012-01-01   -1.134623
    # 2012-02-01   -1.561819
    # 2012-03-01   -0.260838
    # 2012-04-01    0.281957
    # 2012-05-01    1.523962
    # Freq: MS, dtype: float64

- Converting between period and timestamp enables some convenient arithmetic functions. The following example converts a quarterly frequency with the year ending in November to 9 a.m. on the first day of the month following the quarter end:

    prng = pd.period_range('1990Q1', '2000Q4', freq='Q-NOV')
    ts = pd.Series(np.random.randn(len(prng)), prng)
    ts.index = (prng.asfreq('M', 'e') + 1).asfreq('H', 's') + 9
    ts.head()
    # 1990-03-01 09:00   -0.902937
    # 1990-06-01 09:00    0.068159
    # 1990-09-01 09:00   -0.057873
    # 1990-12-01 09:00   -0.368204
    # 1991-03-01 09:00   -1.144073
    # Freq: H, dtype: float64

Categorical

Since version 0.15, pandas can include categorical data in a DataFrame. For full documentation, see the categorical introduction and the API documentation.
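A categorical column keeps the list of distinct values (the categories) separately and refers to each element by an integer code. A minimal sketch on a hypothetical Series of letter grades (illustrative data) makes the two parts visible:

```python
import pandas as pd

# Hypothetical letter grades stored as a categorical Series.
grades = pd.Series(["a", "b", "b", "a", "e"], dtype="category")

categories = list(grades.cat.categories)  # the distinct values
codes = list(grades.cat.codes)            # per-element integer codes into the categories

print(categories)
print(codes)
```

Renaming or reordering the categories only changes the lookup list, which is why the operations in this section can relabel a whole column at once.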

    df = pd.DataFrame({"id": [1, 2, 3, 4, 5, 6],
                       "raw_grade": ['a', 'b', 'b', 'a', 'a', 'e']})

- Convert the raw grades to a categorical data type:

    df["grade"] = df["raw_grade"].astype("category")
    df["grade"]
    # Out[129]:
    # 0    a
    # 1    b
    # 2    b
    # 3    a
    # 4    a
    # 5    e
    # Name: grade, dtype: category
    # Categories (3, object): [a, b, e]

- Rename the categories to more meaningful names:

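    # The original guide assigned to df["grade"].cat.categories directly, which only
    # works in older pandas releases; rename_categories() is the portable spelling.
    df["grade"] = df["grade"].cat.rename_categories(["very good", "good", "very bad"])  # was [a, b, e]

- Reorder the categories and simultaneously add the missing categories: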

    df["grade"] = df["grade"].cat.set_categories(["very bad", "bad", "medium", "good", "very good"])
    df["grade"]
    # 0    very good
    # 1         good
    # 2         good
    # 3    very good
    # 4    very good
    # 5     very bad
    # Name: grade, dtype: category
    # Categories (5, object): [very bad, bad, medium, good, very good]

- Sorting follows the order of the categories, not lexical order:

    df.sort_values(by="grade")
    # Out[133]:
    #    id raw_grade      grade
    # 5   6         e   very bad
    # 1   2         b       good
    # 2   3         b       good
    # 0   1         a  very good
    # 3   4         a  very good
    # 4   5         a  very good

- Grouping by a categorical column also shows the empty categories:

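    # Passing observed=False explicitly keeps the empty categories
    # regardless of the default in the installed pandas version.
    df.groupby("grade", observed=False).size()
    # Out[134]:
    # grade
    # very bad     1
    # bad          0
    # medium       0
    # good         2
    # very good    3
    # dtype: int64

Plotting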

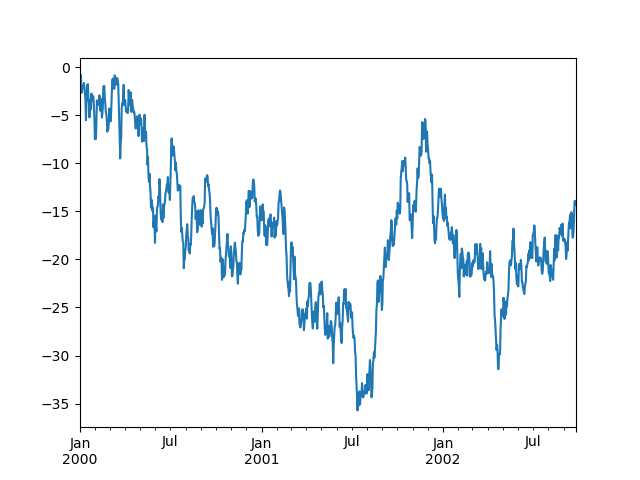

See the Plotting docs.

    ts = pd.Series(np.random.randn(1000), index=pd.date_range('1/1/2000', periods=1000))
    ts = ts.cumsum()
    ts.plot()
    plt.show()

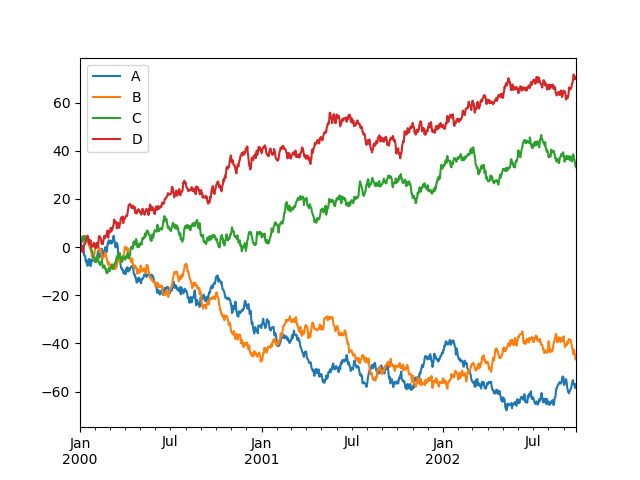

- On a DataFrame, plot() is a convenient way to plot all of the columns with their labels:

    df = pd.DataFrame(np.random.randn(1000, 4), index=ts.index, columns=['A', 'B', 'C', 'D'])
    df = df.cumsum()
    plt.figure(); df.plot(); plt.legend(loc='best')

Getting Data In/Out

csv

See Writing to a csv file.
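One detail of a CSV round trip is worth knowing: to_csv writes the index as the first, unnamed column, so reading the file back without index_col turns it into an ordinary column. A minimal sketch, using an in-memory buffer instead of a file and a hypothetical two-row frame (both are illustrative assumptions):

```python
import io

import pandas as pd

# Hypothetical frame with a string index.
df = pd.DataFrame({"A": [1, 2], "B": [3.5, 4.5]}, index=["x", "y"])

buffer = io.StringIO()
df.to_csv(buffer)   # the index is written as the first, unnamed column
buffer.seek(0)

naive = pd.read_csv(buffer)                  # index comes back as a regular column
buffer.seek(0)
restored = pd.read_csv(buffer, index_col=0)  # first column is used as the index again

print(list(naive.columns))
print(list(restored.index))
```

Passing index_col=0 when reading (or index=False when writing) avoids the extra column.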

- Write to a CSV file:

    df.to_csv('foo.csv')

- Read from a CSV file:

    pd.read_csv('foo.csv')

HDF5

See HDFStores.

- Write to an HDF5 store:

    df.to_hdf('foo.h5', key='df')

- Read from an HDF5 store:

    pd.read_hdf('foo.h5', 'df')

Excel

See MS Excel.

- Write to an Excel file:

    df.to_excel('foo.xlsx', sheet_name='Sheet1')

- Read from an Excel file:

    pd.read_excel('foo.xlsx', 'Sheet1', index_col=None, na_values=['NA'])

Gotchas

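If you attempt an operation like the following, pandas raises a ValueError because the truth value of a Series is ambiguous:

    if pd.Series([False, True, False]):
        print("I was true")

See Comparisons for an explanation and what to do.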

See Gotchas as well.
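Using a Series directly in an if statement raises a ValueError, because pandas does not guess whether "any element is true" or "all elements are true" was meant. A minimal sketch of the explicit alternatives (the variable names are illustrative):

```python
import pandas as pd

flags = pd.Series([False, True, False])

# Using the Series itself as a condition is ambiguous, so pandas raises.
try:
    if flags:
        pass
    raised = False
except ValueError:
    raised = True

any_true = flags.any()   # True if at least one element is True
all_true = flags.all()   # True only if every element is True
is_empty = flags.empty   # True if the Series has no elements

print(raised, any_true, all_true, is_empty)
```

Choosing .any(), .all(), or .empty states the intended question explicitly and avoids the exception.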
