Caffe源码解析7:Pooling_Layer

来源:互联网 发布:qq飞车黄金守护神数据 编辑:程序博客网 时间:2024/06/06 00:43

转载:http://home.cnblogs.com/louyihang-loves-baiyan/

Pooling 层一般在网络中是跟在Conv卷积层之后,做采样操作,其实是为了进一步缩小feature map,同时也能增大神经元的视野。在Caffe中,pooling层属于vision_layer的一部分,其相关的定义也在vision_layer.hpp的头文件中。Pooling层的相关操作比较少,在Caffe的自带模式下只有Max pooling和Average poooling两种

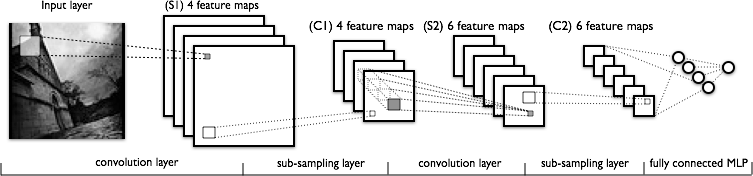

下图是一个LeNet的网络结构图,全连接之前主要有2个卷基层,2个池化层,其中sub_sampling layer就是pooling的操作。pooling的范围是给定的一个region。

PoolingLayer

caffe中Pooling的操作相对比较少,结构也简单,首先看它的Forward_cpu函数,在forward的时候根据相应的Pooling_method选择相应的pooling方法

forward_cpu

void PoolingLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) { const Dtype* bottom_data = bottom[0]->cpu_data(); Dtype* top_data = top[0]->mutable_cpu_data(); const int top_count = top[0]->count(); //将mask信息输出到top[1],如果top大于1 const bool use_top_mask = top.size() > 1; int* mask = NULL; // suppress warnings about uninitalized variables Dtype* top_mask = NULL; switch (this->layer_param_.pooling_param().pool()) { case PoolingParameter_PoolMethod_MAX://这里的case主要是实现max pooling的方法 // Initialize if (use_top_mask) { top_mask = top[1]->mutable_cpu_data(); caffe_set(top_count, Dtype(-1), top_mask); } else { mask = max_idx_.mutable_cpu_data(); caffe_set(top_count, -1, mask); } caffe_set(top_count, Dtype(-FLT_MAX), top_data); // The main loop for (int n = 0; n < bottom[0]->num(); ++n) { for (int c = 0; c < channels_; ++c) { for (int ph = 0; ph < pooled_height_; ++ph) { for (int pw = 0; pw < pooled_width_; ++pw) { int hstart = ph * stride_h_ - pad_h_;//这里的hstart,wstart,hend,wend指的是pooling窗口在特征图中的坐标,对应左上右下即x1 y1 x2 y2 int wstart = pw * stride_w_ - pad_w_; int hend = min(hstart + kernel_h_, height_); int wend = min(wstart + kernel_w_, width_); hstart = max(hstart, 0); wstart = max(wstart, 0); const int pool_index = ph * pooled_width_ + pw; for (int h = hstart; h < hend; ++h) { for (int w = wstart; w < wend; ++w) { const int index = h * width_ + w;//记录index偏差 if (bottom_data[index] > top_data[pool_index]) {//不停迭代 top_data[pool_index] = bottom_data[index]; if (use_top_mask) { top_mask[pool_index] = static_cast<Dtype>(index);//记录当前最大值的的坐标索引 } else { mask[pool_index] = index; } } } } } } // 计算偏移量,进入下一张图的index起始地址 bottom_data += bottom[0]->offset(0, 1); top_data += top[0]->offset(0, 1); if (use_top_mask) { top_mask += top[0]->offset(0, 1); } else { mask += top[0]->offset(0, 1); } } } break; case PoolingParameter_PoolMethod_AVE://average_pooling for (int i = 0; i < top_count; ++i) { top_data[i] = 0; } // The main loop for (int n = 0; n < bottom[0]->num(); ++n) {//同样是主循环 for (int c = 0; c < channels_; ++c) { for (int ph = 0; ph < pooled_height_; ++ph) { for (int pw = 0; pw < pooled_width_; ++pw) { int hstart = ph * stride_h_ - pad_h_; int wstart = pw * stride_w_ - pad_w_; int hend = min(hstart + kernel_h_, height_ + pad_h_); int wend = min(wstart + kernel_w_, width_ + pad_w_); int pool_size = (hend - hstart) * (wend - wstart); hstart = max(hstart, 0); wstart = max(wstart, 0); hend = min(hend, height_); wend = min(wend, width_); for (int h = hstart; h < hend; ++h) { for (int w = wstart; w < wend; ++w) { top_data[ph * pooled_width_ + pw] += bottom_data[h * width_ + w]; } } top_data[ph * pooled_width_ + pw] /= pool_size;//获得相应的平均值 } } // compute offset同理计算下一个图的起始地址 bottom_data += bottom[0]->offset(0, 1); top_data += top[0]->offset(0, 1); } } break; case PoolingParameter_PoolMethod_STOCHASTIC: NOT_IMPLEMENTED; break; default: LOG(FATAL) << "Unknown pooling method."; }backward_cpu

对于误差的反向传导

对于pooling层的误差传到,根据下式

这里的Upsample具体可以根据相应的pooling方法来进行上采样,upsample的基本思想也是将误差进行的平摊到各个采样的对应点上。在这里pooling因为是线性的所以h这一项其实是可以省略的。

具体的计算推导过程请结合http://www.cnblogs.com/tornadomeet/p/3468450.html有详细的推导过程,结合代码中主循环中的最里项会更清晰的明白

template <typename Dtype>void PoolingLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top, const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) { if (!propagate_down[0]) { return; } const Dtype* top_diff = top[0]->cpu_diff();//首先获得上层top_blob的diff Dtype* bottom_diff = bottom[0]->mutable_cpu_diff(); caffe_set(bottom[0]->count(), Dtype(0), bottom_diff); // We'll output the mask to top[1] if it's of size >1. const bool use_top_mask = top.size() > 1; const int* mask = NULL; // suppress warnings about uninitialized variables const Dtype* top_mask = NULL; switch (this->layer_param_.pooling_param().pool()) { case PoolingParameter_PoolMethod_MAX: // The main loop if (use_top_mask) { top_mask = top[1]->cpu_data(); } else { mask = max_idx_.cpu_data(); } for (int n = 0; n < top[0]->num(); ++n) { for (int c = 0; c < channels_; ++c) { for (int ph = 0; ph < pooled_height_; ++ph) { for (int pw = 0; pw < pooled_width_; ++pw) { const int index = ph * pooled_width_ + pw; const int bottom_index = use_top_mask ? top_mask[index] : mask[index];//根据max pooling记录的mask位置,进行误差反转 bottom_diff[bottom_index] += top_diff[index]; } } bottom_diff += bottom[0]->offset(0, 1); top_diff += top[0]->offset(0, 1); if (use_top_mask) { top_mask += top[0]->offset(0, 1); } else { mask += top[0]->offset(0, 1); } } } break; case PoolingParameter_PoolMethod_AVE: // The main loop for (int n = 0; n < top[0]->num(); ++n) { for (int c = 0; c < channels_; ++c) { for (int ph = 0; ph < pooled_height_; ++ph) { for (int pw = 0; pw < pooled_width_; ++pw) { int hstart = ph * stride_h_ - pad_h_; int wstart = pw * stride_w_ - pad_w_; int hend = min(hstart + kernel_h_, height_ + pad_h_); int wend = min(wstart + kernel_w_, width_ + pad_w_); int pool_size = (hend - hstart) * (wend - wstart); hstart = max(hstart, 0); wstart = max(wstart, 0); hend = min(hend, height_); wend = min(wend, width_); for (int h = hstart; h < hend; ++h) { for (int w = wstart; w < wend; ++w) { bottom_diff[h * width_ + w] += top_diff[ph * pooled_width_ + pw] / pool_size;//mean_pooling中,bottom的误差值按pooling窗口中的大小计算,从上一层进行填充后,再除窗口大小 } } } } // offset bottom_diff += bottom[0]->offset(0, 1); top_diff += top[0]->offset(0, 1); } } break; case PoolingParameter_PoolMethod_STOCHASTIC: NOT_IMPLEMENTED; break; default: LOG(FATAL) << "Unknown pooling method."; }} 0 0

- Caffe源码解析7:Pooling_Layer

- Caffe源码解析7:Pooling_Layer

- Caffe源码解析7:Pooling_Layer

- Caffe源码解析7:Pooling_Layer

- Caffe源码:pooling_layer.cpp

- Caffe源码(六): pooling_layer 分析

- CAFFE源码学习笔记之池化层pooling_layer

- Caffe源码解读:pooling_layer的前向传播与反向传播

- 梳理caffe代码pooling_layer(二十)

- Caffe源码解析caffe.cpp

- 【Caffe源码解析】DataLayer

- caffe.proto 源码解析

- caffe源码解析-inner_product_layer

- caffe源码解析-BaseConvolutionLayer

- caffe源码解析-im2col

- Caffe源码:math_functions 解析

- Caffe源码解析

- caffe 源码解析系列

- maven dependency全局排除

- JDK源码——java.util.concurrent(二)

- c++第四次作业

- C 和 C++ 混合编程

- COM连接点事件event

- Caffe源码解析7:Pooling_Layer

- Linux下gcc-编译动态库

- css3动画产生圆圈从小到大

- @serializedname注解的意思

- jQuery 动态生成多个表格

- c++第四次实验—项目六—输出星号图

- SQL优化的14条经验总结

- tf.reduce_mean

- 最全加快Android Studio的编译速度