Repost: 『Sklearn』 Data Splitting Methods and Python Code

Source: Internet. Published by: 在线数据库设计工具. Editor: 程序博客网. Time: 2024/06/03 13:08

Principles

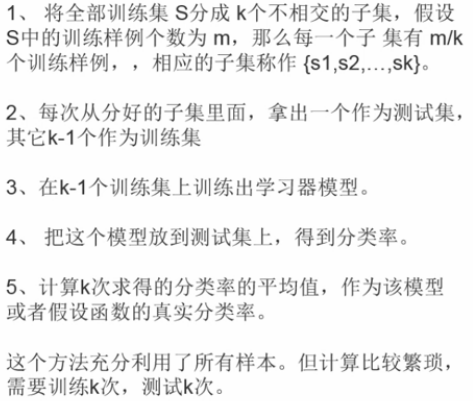

K-fold cross-validation:

KFold, GroupKFold, StratifiedKFold
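Of the three splitters listed above, GroupKFold is the least self-explanatory: it keeps all samples that share a group label on the same side of each split. The following is a minimal sketch of that behaviour, assuming a made-up `groups` array in which every two consecutive samples belong to one group; the data and variable names are illustrative only.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Hypothetical toy data: 8 samples, 4 groups of 2 samples each.
X = np.arange(16).reshape(8, 2)
y = np.array([0, 1, 0, 1, 0, 1, 0, 1])
groups = np.array([0, 0, 1, 1, 2, 2, 3, 3])

gkf = GroupKFold(n_splits=2)
splits = list(gkf.split(X, y, groups))

for train_index, test_index in splits:
    train_groups = set(groups[train_index])
    test_groups = set(groups[test_index])
    print("train groups:", sorted(train_groups), "test groups:", sorted(test_groups))
    # A group never appears on both sides of the same split.
    assert train_groups.isdisjoint(test_groups)
```

When every sample has its own group label, as in a one-sample-per-group setup, the grouping constraint has no visible effect, which can make the method look arbitrary.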

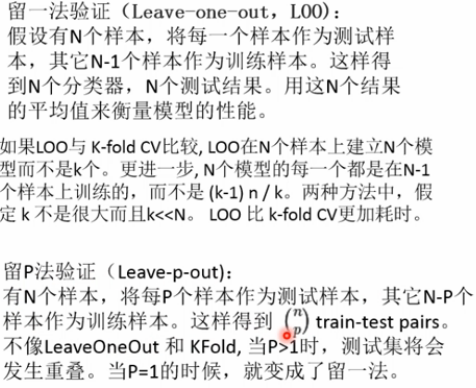

Leave-one-out methods:

LeaveOneGroupOut, LeavePGroupsOut, LeaveOneOut, LeavePOut
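The group-based variants in this list, LeaveOneGroupOut and LeavePGroupsOut, hold out whole groups rather than single samples. A minimal sketch follows, assuming a hypothetical `groups` array with three groups of two samples each; the toy data is not from the original post.

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut, LeavePGroupsOut

# Hypothetical toy data: 6 samples in 3 groups.
X = np.arange(12).reshape(6, 2)
y = np.array([1, 2, 1, 2, 1, 2])
groups = np.array([1, 1, 2, 2, 3, 3])

# LeaveOneGroupOut: each split holds out exactly one group as the test set.
logo = LeaveOneGroupOut()
logo_splits = list(logo.split(X, y, groups))
for train_index, test_index in logo_splits:
    print("LOGO held-out groups:", np.unique(groups[test_index]), "test index:", test_index)

# LeavePGroupsOut: each split holds out one combination of n_groups groups.
lpgo = LeavePGroupsOut(n_groups=2)
lpgo_splits = list(lpgo.split(X, y, groups))
for train_index, test_index in lpgo_splits:
    print("LPGO held-out groups:", np.unique(groups[test_index]), "test index:", test_index)
```

With three groups, both splitters produce three splits here: three single groups, and three pairs of groups.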

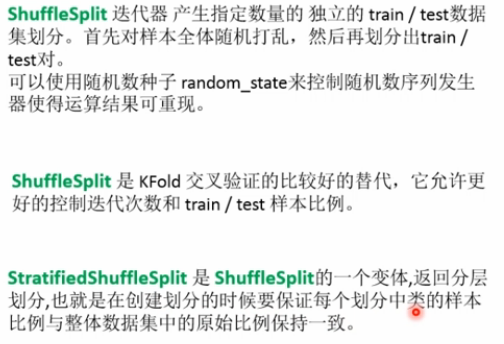

Random splitting:

ShuffleSplit, GroupShuffleSplit, StratifiedShuffleSplit
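GroupShuffleSplit combines random splitting with the group constraint: groups, not individual samples, are shuffled and assigned to train or test. A minimal sketch, assuming a hypothetical `groups` array with four groups; note that `test_size` here is interpreted as a proportion of groups.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

# Hypothetical toy data: 8 samples in 4 groups.
X = np.arange(16).reshape(8, 2)
y = np.array([1, 2, 1, 2, 1, 2, 1, 2])
groups = np.array([1, 1, 2, 2, 3, 3, 4, 4])

# test_size=0.5 sends half of the groups (2 of 4) to the test set.
gss = GroupShuffleSplit(n_splits=3, test_size=0.5, random_state=0)
gss_splits = list(gss.split(X, y, groups))

for train_index, test_index in gss_splits:
    print("train groups:", np.unique(groups[train_index]),
          "test groups:", np.unique(groups[test_index]))
```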

Code implementation

Workflow:

Instantiate the splitter -> iterate over the train/test index pairs yielded by .split()
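The two-step workflow above can be sketched end to end with a model in the loop. This is only an illustration under assumptions: the synthetic data from `make_classification`, the `LogisticRegression` estimator, and the variable names are not part of the original post. The last lines show that passing the same splitter as `cv` to `cross_val_score` performs an equivalent loop.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, cross_val_score

# Hypothetical toy data; any (X, y) pair with matching lengths would do.
X, y = make_classification(n_samples=60, n_features=4, random_state=0)
model = LogisticRegression()

# Step 1: instantiate the splitter (a fixed random_state makes split() repeatable).
kf = KFold(n_splits=3, shuffle=True, random_state=0)

# Step 2: iterate over the index pairs produced by .split().
manual_scores = []
for train_index, test_index in kf.split(X):
    fold_model = clone(model).fit(X[train_index], y[train_index])
    manual_scores.append(fold_model.score(X[test_index], y[test_index]))
print("manual loop:    ", np.round(manual_scores, 3))

# The same loop, delegated to scikit-learn by passing the splitter as cv.
cv_scores = cross_val_score(model, X, y, cv=kf)
print("cross_val_score:", np.round(cv_scores, 3))
```

Writing the loop by hand is useful when per-fold access to the indices is needed; otherwise `cross_val_score` is the shorter route.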

KFold(n_splits=2)

    # KFold
    import numpy as np
    from sklearn.model_selection import KFold

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 3, 4, 5, 6])

    kf = KFold(n_splits=2)  # set the number of folds
    # kf.get_n_splits(X)    # query the number of folds
    print(kf)
    for train_index, test_index in kf.split(X):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

GroupKFold(n_splits=2)

    # GroupKFold: I don't fully understand this splitting method
    import numpy as np
    from sklearn.model_selection import GroupKFold

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 3, 4, 5, 6])
    groups = np.array([1, 2, 3, 4, 5, 6])

    group_kfold = GroupKFold(n_splits=2)
    group_kfold.get_n_splits(X, y, groups)
    print(group_kfold)
    for train_index, test_index in group_kfold.split(X, y, groups):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    # GroupKFold(n_splits=2)
    # Train Index: [0 2 4] ,Test Index: [1 3 5]
    # Train Index: [1 3 5] ,Test Index: [0 2 4]

StratifiedKFold(n_splits=3)

    # StratifiedKFold: keeps the class proportions in every fold
    # as close as possible to those of the full dataset
    import numpy as np
    from sklearn.model_selection import StratifiedKFold

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 1, 1, 2, 2, 2])

    skf = StratifiedKFold(n_splits=3)
    skf.get_n_splits(X, y)
    print(skf)
    for train_index, test_index in skf.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    # StratifiedKFold(n_splits=3, random_state=None, shuffle=False)
    # Train Index: [1 2 4 5] ,Test Index: [0 3]
    # Train Index: [0 2 3 5] ,Test Index: [1 4]
    # Train Index: [0 1 3 4] ,Test Index: [2 5]

LeaveOneOut()

    # LeaveOneOut: the test set holds exactly one sample
    import numpy as np
    from sklearn.model_selection import LeaveOneOut

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 3, 4, 5, 6])

    loo = LeaveOneOut()
    loo.get_n_splits(X)
    print(loo)
    for train_index, test_index in loo.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    # LeaveOneOut()
    # Train Index: [1 2 3 4 5] ,Test Index: [0]
    # Train Index: [0 2 3 4 5] ,Test Index: [1]
    # Train Index: [0 1 3 4 5] ,Test Index: [2]
    # Train Index: [0 1 2 4 5] ,Test Index: [3]
    # Train Index: [0 1 2 3 5] ,Test Index: [4]
    # Train Index: [0 1 2 3 4] ,Test Index: [5]

LeavePOut(p=3)

    # LeavePOut: the test set holds p samples
    import numpy as np
    from sklearn.model_selection import LeavePOut

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 3, 4, 5, 6])

    lpo = LeavePOut(p=3)
    lpo.get_n_splits(X)
    print(lpo)
    for train_index, test_index in lpo.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    # LeavePOut(p=3)
    # Train Index: [3 4 5] ,Test Index: [0 1 2]
    # Train Index: [2 4 5] ,Test Index: [0 1 3]
    # Train Index: [2 3 5] ,Test Index: [0 1 4]
    # Train Index: [2 3 4] ,Test Index: [0 1 5]
    # Train Index: [1 4 5] ,Test Index: [0 2 3]
    # Train Index: [1 3 5] ,Test Index: [0 2 4]
    # Train Index: [1 3 4] ,Test Index: [0 2 5]
    # Train Index: [1 2 5] ,Test Index: [0 3 4]
    # Train Index: [1 2 4] ,Test Index: [0 3 5]
    # Train Index: [1 2 3] ,Test Index: [0 4 5]
    # Train Index: [0 4 5] ,Test Index: [1 2 3]
    # Train Index: [0 3 5] ,Test Index: [1 2 4]
    # Train Index: [0 3 4] ,Test Index: [1 2 5]
    # Train Index: [0 2 5] ,Test Index: [1 3 4]
    # Train Index: [0 2 4] ,Test Index: [1 3 5]
    # Train Index: [0 2 3] ,Test Index: [1 4 5]
    # Train Index: [0 1 5] ,Test Index: [2 3 4]
    # Train Index: [0 1 4] ,Test Index: [2 3 5]
    # Train Index: [0 1 3] ,Test Index: [2 4 5]
    # Train Index: [0 1 2] ,Test Index: [3 4 5]

ShuffleSplit(n_splits=3,test_size=.25,random_state=0)

    # ShuffleSplit: shuffles the dataset, then splits it into a training set
    # and a test set. The two proportions are set with train_size and
    # test_size, and their sum may be less than 1.
    import numpy as np
    from sklearn.model_selection import ShuffleSplit

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 3, 4, 5, 6])

    rs = ShuffleSplit(n_splits=3, test_size=.25, random_state=0)
    rs.get_n_splits(X)
    print(rs)
    for train_index, test_index in rs.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    print("==============================")
    rs = ShuffleSplit(n_splits=3, train_size=.5, test_size=.25, random_state=0)
    rs.get_n_splits(X)
    print(rs)
    for train_index, test_index in rs.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)

    # ShuffleSplit(n_splits=3, random_state=0, test_size=0.25, train_size=None)
    # Train Index: [1 3 0 4] ,Test Index: [5 2]
    # Train Index: [4 0 2 5] ,Test Index: [1 3]
    # Train Index: [1 2 4 0] ,Test Index: [3 5]
    # ==============================
    # ShuffleSplit(n_splits=3, random_state=0, test_size=0.25, train_size=0.5)
    # Train Index: [1 3 0] ,Test Index: [5 2]
    # Train Index: [4 0 2] ,Test Index: [1 3]
    # Train Index: [1 2 4] ,Test Index: [3 5]

StratifiedShuffleSplit(n_splits=3,test_size=.5,random_state=0)

    # StratifiedShuffleSplit: like ShuffleSplit, it shuffles the dataset and
    # then splits it into a training set and a test set whose proportions are
    # set with train_size and test_size (their sum may be less than 1), but it
    # also keeps the class proportions the same in each set.
    import numpy as np
    from sklearn.model_selection import StratifiedShuffleSplit

    X = np.array([[1, 2], [3, 4], [5, 6], [7, 8], [9, 10], [11, 12]])
    y = np.array([1, 2, 1, 2, 1, 2])

    sss = StratifiedShuffleSplit(n_splits=3, test_size=.5, random_state=0)
    sss.get_n_splits(X, y)
    print(sss)
    for train_index, test_index in sss.split(X, y):
        print("Train Index:", train_index, ",Test Index:", test_index)
        X_train, X_test = X[train_index], X[test_index]
        y_train, y_test = y[train_index], y[test_index]
        # print(X_train, X_test, y_train, y_test)

    # StratifiedShuffleSplit(n_splits=3, random_state=0, test_size=0.5,train_size=None)
    # Train Index: [5 4 1] ,Test Index: [3 2 0]
    # Train Index: [5 2 3] ,Test Index: [0 4 1]
    # Train Index: [5 0 4] ,Test Index: [3 1 2]
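The stratification claim in the last listing is easier to see on imbalanced labels. The sketch below is an illustration under assumptions (a made-up 8-versus-4 label vector and the helper list `class_counts`, neither from the original post); it counts the classes on each side of every split.

```python
import numpy as np
from sklearn.model_selection import StratifiedShuffleSplit

# Hypothetical imbalanced toy data: 8 samples of class 0, 4 of class 1.
X = np.arange(24).reshape(12, 2)
y = np.array([0] * 8 + [1] * 4)

sss = StratifiedShuffleSplit(n_splits=3, test_size=0.25, random_state=0)

class_counts = []
for train_index, test_index in sss.split(X, y):
    train_counts = np.bincount(y[train_index]).tolist()
    test_counts = np.bincount(y[test_index]).tolist()
    class_counts.append((train_counts, test_counts))
    print("train class counts:", train_counts, "test class counts:", test_counts)
```

Every split keeps the 2:1 ratio of the full label vector on both sides, while the actual indices differ from split to split.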
